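[Paper Recommendations] Five Recent Papers on Person Re-Identification (Person Re-ID): Sample Generation, Surpassing Human-Level Performance, Good Practices, Pose Normalization, and Image Generation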

[Overview] The Zhuanzhi content team has compiled five recent papers on person re-identification (Person Re-ID) and introduces them below.

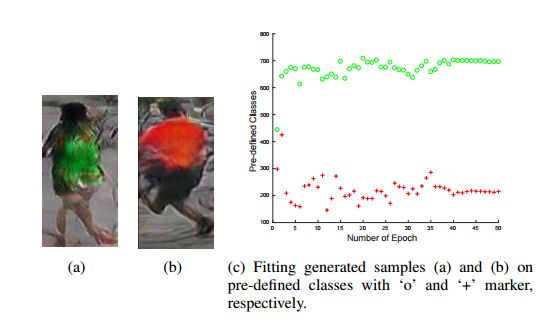

1. Multi-pseudo Regularized Label for Generated Samples in Person Re-Identification

Authors: Yan Huang, Jinsong Xu, Qiang Wu, Zhedong Zheng, Zhaoxiang Zhang, Jian Zhang

Abstract: Sufficient training data is normally required to train deeply learned models. However, the number of pedestrian images per ID in person re-identification (re-ID) datasets is usually limited, since manual annotations are required for multiple camera views. To produce more data for training deeply learned models, a generative adversarial network (GAN) can be leveraged to generate samples for person re-ID. However, the samples generated by a vanilla GAN usually do not have labels. So in this paper, we propose a virtual label called Multi-pseudo Regularized Label (MpRL) and assign it to the generated images. With MpRL, the generated samples are used as a supplement to real training data to train a deep model in a semi-supervised learning fashion. Considering data bias between generated and real samples, MpRL utilizes different contributions from predefined training classes. The contribution-based virtual labels are automatically assigned to generated samples to reduce ambiguous prediction in training. Meanwhile, MpRL only relies on predefined training classes without using extra classes. Furthermore, to reduce over-fitting, a regularized manner is applied to MpRL to regularize the learning process. To verify the effectiveness of MpRL, two state-of-the-art convolutional neural networks (CNNs) are adopted in our experiments. Experiments demonstrate that by assigning MpRL to generated samples, we can further improve the person re-ID performance on three datasets, i.e., Market-1501, DukeMTMC-reID, and CUHK03. The proposed method obtains +6.29%, +6.30% and +5.58% improvements in rank-1 accuracy over a strong CNN baseline, respectively, and outperforms the state-of-the-art methods.
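To make the idea of a contribution-based virtual label more concrete, here is a minimal Python sketch. The abstract does not give the MpRL formula, so everything below is an assumption for illustration only: the hypothetical `virtual_label` helper normalizes made-up per-class contribution scores into a soft label over the predefined training classes, and `soft_cross_entropy` scores a prediction against that label. It is not the authors' implementation.

```python
import numpy as np

def virtual_label(contributions):
    """Normalize non-negative per-class contribution scores into a soft label.

    Hypothetical helper: the paper's actual weighting scheme is not stated
    in the abstract, so plain normalization is used here as a stand-in.
    """
    c = np.asarray(contributions, dtype=float)
    if np.any(c < 0) or c.sum() == 0:
        raise ValueError("contributions must be non-negative and not all zero")
    return c / c.sum()

def soft_cross_entropy(logits, target):
    """Cross-entropy between softmax(logits) and a soft target distribution."""
    z = np.asarray(logits, dtype=float)
    z = z - z.max()                          # shift for numerical stability
    log_probs = z - np.log(np.exp(z).sum())  # log-softmax
    return float(-(np.asarray(target, dtype=float) * log_probs).sum())

# A generated image with made-up contributions over 4 predefined classes.
label = virtual_label([3.0, 1.0, 0.0, 0.0])
loss = soft_cross_entropy([2.0, 0.5, -1.0, -1.0], label)
```

With a one-hot target this reduces to the usual cross-entropy for a labeled real image, so real and generated samples could share one loss function in such a setup.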

Venue: arXiv, January 29, 2018

Link:

http://www.zhuanzhi.ai/document/735fe58ab843f2fb02adb71bd0dcbbb7

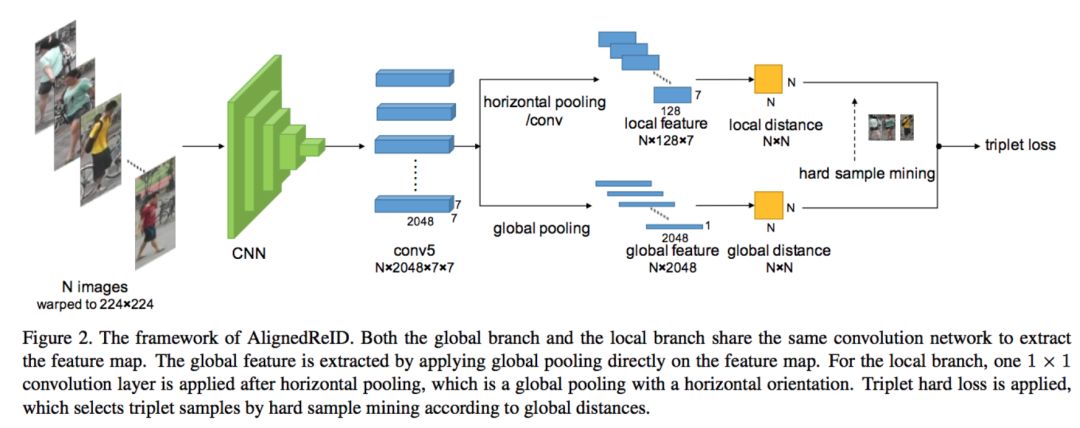

2. AlignedReID: Surpassing Human-Level Performance in Person Re-Identification

Authors: Xuan Zhang, Hao Luo, Xing Fan, Weilai Xiang, Yixiao Sun, Qiqi Xiao, Wei Jiang, Chi Zhang, Jian Sun

Abstract: In this paper, we propose a novel method called AlignedReID that extracts a global feature which is jointly learned with local features. Global feature learning benefits greatly from local feature learning, which performs an alignment/matching by calculating the shortest path between two sets of local features, without requiring extra supervision. After the joint learning, we only keep the global feature to compute the similarities between images. Our method achieves rank-1 accuracy of 94.4% on Market1501 and 97.8% on CUHK03, outperforming state-of-the-art methods by a large margin. We also evaluate human-level performance and demonstrate that our method is the first to surpass human-level performance on Market1501 and CUHK03, two widely used Person ReID datasets.
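The shortest-path alignment between two sets of local features can be sketched with a small dynamic program. This is an illustrative sketch, not the authors' code: the function name `shortest_path_distance`, the use of plain Euclidean distances, and the restriction to right/down moves through the distance matrix are all assumptions, since the abstract does not specify these details.

```python
import numpy as np

def shortest_path_distance(local_a, local_b):
    """Cost of the cheapest monotone path through a pairwise distance matrix.

    local_a: (m, d) array and local_b: (n, d) array, e.g. one row per
    horizontal body stripe. The path starts at cell (0, 0), ends at
    (m-1, n-1), and only moves right or down (an assumption of this sketch).
    """
    a = np.asarray(local_a, dtype=float)
    b = np.asarray(local_b, dtype=float)
    # Euclidean distance between every part of a and every part of b.
    dist = np.linalg.norm(a[:, None, :] - b[None, :, :], axis=2)
    m, n = dist.shape
    cost = np.zeros((m, n))
    for i in range(m):
        for j in range(n):
            if i == 0 and j == 0:
                best_prev = 0.0
            elif i == 0:
                best_prev = cost[i, j - 1]
            elif j == 0:
                best_prev = cost[i - 1, j]
            else:
                best_prev = min(cost[i - 1, j], cost[i, j - 1])
            cost[i, j] = best_prev + dist[i, j]
    return float(cost[-1, -1])

# Three one-dimensional "parts" per image, purely made-up values.
parts = np.array([[0.0], [1.0], [2.0]])
d = shortest_path_distance(parts, parts)
```

Because the path may only step right or down, it has to cross off-diagonal cells, so even two identical sets of distinct parts give a nonzero cost in this simplified form.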

Venue: arXiv, January 31, 2018

Link:

http://www.zhuanzhi.ai/document/bc360742187b5572c5e07cb0a2284fe7

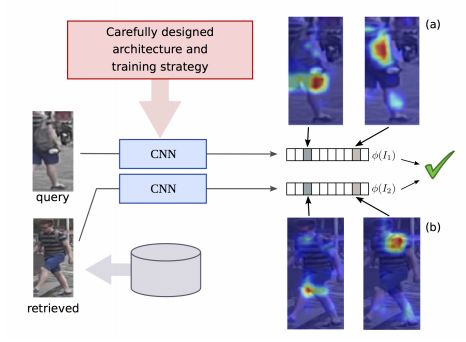

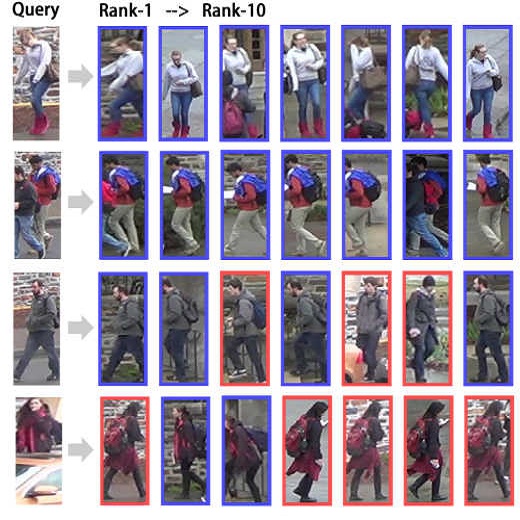

3. Re-ID done right: towards good practices for person re-identification

Authors: Jon Almazan, Bojana Gajic, Naila Murray, Diane Larlus

Abstract: Training a deep architecture using a ranking loss has become standard for the person re-identification task. Increasingly, these deep architectures include additional components that leverage part detections, attribute predictions, pose estimators and other auxiliary information, in order to more effectively localize and align discriminative image regions. In this paper we adopt a different approach and carefully design each component of a simple deep architecture and, critically, the strategy for training it effectively for person re-identification. We extensively evaluate each design choice, leading to a list of good practices for person re-identification. By following these practices, our approach outperforms the state of the art, including more complex methods with auxiliary components, by large margins on four benchmark datasets. We also provide a qualitative analysis of our trained representation which indicates that, while compact, it is able to capture information from localized and discriminative regions, in a manner akin to an implicit attention mechanism.
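The abstract mentions a ranking loss without naming one. A common choice in re-ID is the triplet loss, which is assumed here purely for illustration; the function name `triplet_ranking_loss` and the margin value are hypothetical and not taken from the paper.

```python
import numpy as np

def triplet_ranking_loss(anchor, positive, negative, margin=0.3):
    """Hinge-style ranking loss on one (anchor, positive, negative) triplet.

    The loss is zero once the negative is farther from the anchor than the
    positive by at least `margin` (the default margin is an arbitrary choice).
    """
    anchor, positive, negative = (
        np.asarray(x, dtype=float) for x in (anchor, positive, negative)
    )
    d_pos = np.linalg.norm(anchor - positive)  # same identity, should be small
    d_neg = np.linalg.norm(anchor - negative)  # other identity, should be large
    return float(max(0.0, d_pos - d_neg + margin))

# Made-up 2-D embeddings: a well-ordered triplet and a badly ordered one.
good = triplet_ranking_loss([0.0, 0.0], [0.0, 1.0], [3.0, 4.0])
bad = triplet_ranking_loss([0.0, 0.0], [3.0, 4.0], [0.0, 1.0])
```

Only the badly ordered triplet contributes a positive loss, which is what pushes same-identity images closer together than different-identity images during training.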

Venue: arXiv, January 17, 2018

Link:

http://www.zhuanzhi.ai/document/074aefb3ce8c22258d68c3e721e21e8a

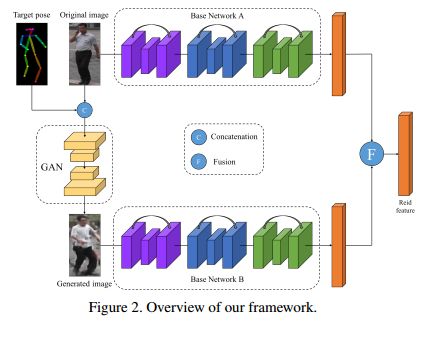

4. Pose-Normalized Image Generation for Person Re-identification

Authors: Xuelin Qian, Yanwei Fu, Wenxuan Wang, Tao Xiang, Yang Wu, Yu-Gang Jiang, Xiangyang Xue

Abstract: Person Re-identification (re-id) faces two major challenges: the lack of cross-view paired training data and learning discriminative identity-sensitive and view-invariant features in the presence of large pose variations. In this work, we address both problems by proposing a novel deep person image generation model for synthesizing realistic person images conditional on pose. The model is based on a generative adversarial network (GAN) and used specifically for pose normalization in re-id, thus termed pose-normalization GAN (PN-GAN). With the synthesized images, we can learn a new type of deep re-id feature free of the influence of pose variations. We show that this feature is strong on its own and highly complementary to features learned with the original images. Importantly, we now have a model that generalizes to any new re-id dataset without the need for collecting any training data for model fine-tuning, thus making a deep re-id model truly scalable. Extensive experiments on five benchmarks show that our model outperforms the state-of-the-art models, often significantly. In particular, the features learned on Market-1501 can achieve a Rank-1 accuracy of 68.67% on VIPeR without any model fine-tuning, beating almost all existing models fine-tuned on the dataset.
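Rank-1 accuracy, the metric quoted in these abstracts, is the fraction of query images whose closest gallery image shares the same identity. The sketch below is a simplified illustration with made-up features: the function name `rank1_accuracy` and the use of Euclidean distance are assumptions, and benchmark protocols typically add rules (such as camera-based filtering of gallery images) that are omitted here.

```python
import numpy as np

def rank1_accuracy(query_feats, query_ids, gallery_feats, gallery_ids):
    """Fraction of queries whose nearest gallery feature has the same ID."""
    q = np.asarray(query_feats, dtype=float)
    g = np.asarray(gallery_feats, dtype=float)
    # Pairwise Euclidean distances, shape (num_query, num_gallery).
    dist = np.linalg.norm(q[:, None, :] - g[None, :, :], axis=2)
    nearest = dist.argmin(axis=1)  # index of the top-ranked gallery item
    hits = np.asarray(gallery_ids)[nearest] == np.asarray(query_ids)
    return float(hits.mean())

# Two queries and three gallery images with made-up 2-D features.
acc = rank1_accuracy(
    query_feats=[[0.0, 0.0], [10.0, 10.0]],
    query_ids=[1, 2],
    gallery_feats=[[0.0, 1.0], [9.0, 9.0], [5.0, 5.0]],
    gallery_ids=[1, 3, 2],
)
```

Here the first query retrieves the correct identity and the second does not, so `acc` is 0.5.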

Venue: arXiv, January 18, 2018

Link:

http://www.zhuanzhi.ai/document/7ef1e354b55bce36833394c4270ca649

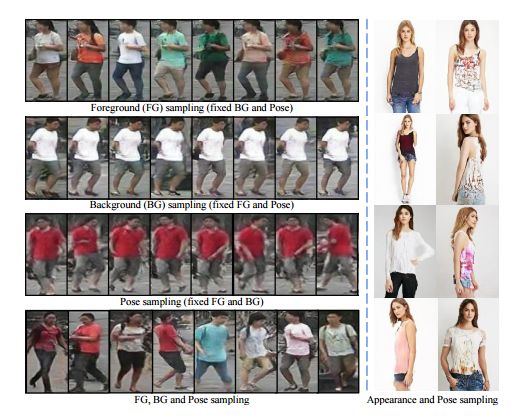

5. Disentangled Person Image Generation

Authors: Liqian Ma, Qianru Sun, Stamatios Georgoulis, Luc Van Gool, Bernt Schiele, Mario Fritz

Abstract: Generating novel, yet realistic, images of persons is a challenging task due to the complex interplay between the different image factors, such as the foreground, background and pose information. In this work, we aim at generating such images based on a novel, two-stage reconstruction pipeline that learns a disentangled representation of the aforementioned image factors and generates novel person images at the same time. First, a multi-branched reconstruction network is proposed to disentangle and encode the three factors into embedding features, which are then combined to re-compose the input image itself. Second, three corresponding mapping functions are learned in an adversarial manner in order to map Gaussian noise to the learned embedding feature space, for each factor respectively. Using the proposed framework, we can manipulate the foreground, background and pose of the input image, and also sample new embedding features to generate such targeted manipulations that provide more control over the generation process. Experiments on the Market-1501 and DeepFashion datasets show that our model not only generates realistic person images with new foregrounds, backgrounds and poses, but also manipulates the generated factors and interpolates the in-between states. Another set of experiments on Market-1501 shows that our model can also be beneficial for the person re-identification task.
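Interpolating the in-between states of one factor can be pictured as moving along a line between two embedding vectors and decoding each intermediate point. The abstract does not say how the interpolation is performed, so the linear scheme and the function name `interpolate_embeddings` below are assumptions for illustration; decoding the embeddings back into images is outside the scope of this sketch.

```python
import numpy as np

def interpolate_embeddings(start, end, steps=5):
    """Return `steps` embeddings evenly spaced on the line from start to end."""
    if steps < 2:
        raise ValueError("steps must be at least 2 to include both endpoints")
    start = np.asarray(start, dtype=float)
    end = np.asarray(end, dtype=float)
    weights = np.linspace(0.0, 1.0, steps)
    # Each row is a convex combination of the two endpoint embeddings.
    return np.array([(1.0 - w) * start + w * end for w in weights])

# Made-up 2-D "pose" embeddings for two images.
path = interpolate_embeddings([0.0, 0.0], [2.0, 4.0], steps=5)
```

In a disentangled setup, only the embedding of the factor being varied (for example pose) would be interpolated, while the other factors' embeddings stay fixed.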

Venue: arXiv, January 22, 2018

Link:

http://www.zhuanzhi.ai/document/85812c4cdf8ae54ce0e29c7ff251c2b5
