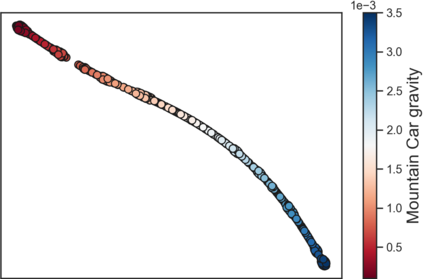

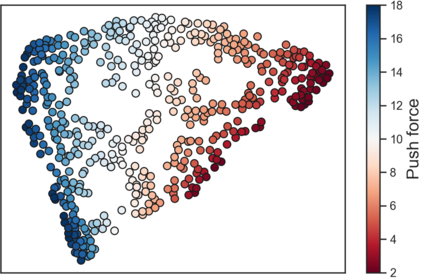

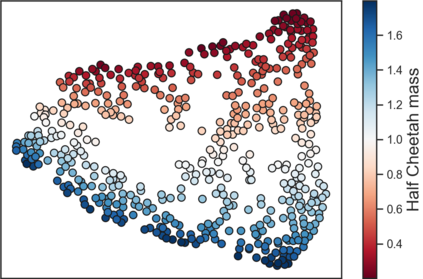

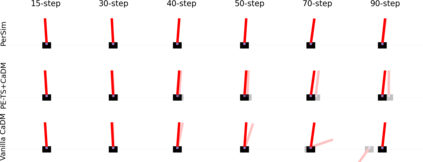

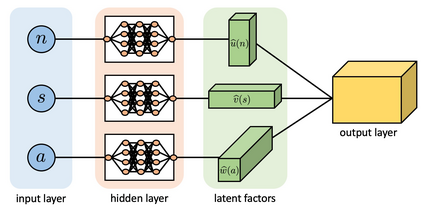

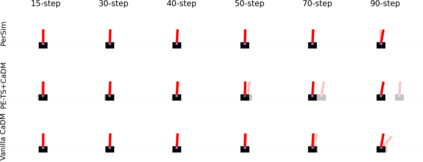

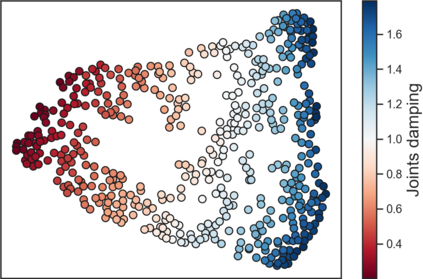

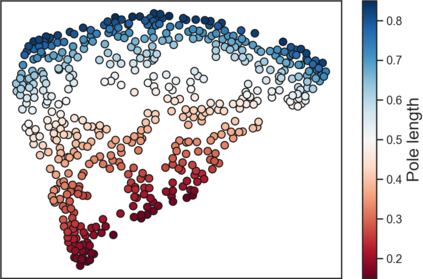

We consider offline reinforcement learning (RL) with heterogeneous agents under severe data scarcity, i.e., we only observe a single historical trajectory for every agent under an unknown, potentially sub-optimal policy. We find that the performance of state-of-the-art offline and model-based RL methods degrade significantly given such limited data availability, even for commonly perceived "solved" benchmark settings such as "MountainCar" and "CartPole". To address this challenge, we propose PerSim, a model-based offline RL approach which first learns a personalized simulator for each agent by collectively using the historical trajectories across all agents, prior to learning a policy. We do so by positing that the transition dynamics across agents can be represented as a latent function of latent factors associated with agents, states, and actions; subsequently, we theoretically establish that this function is well-approximated by a "low-rank" decomposition of separable agent, state, and action latent functions. This representation suggests a simple, regularized neural network architecture to effectively learn the transition dynamics per agent, even with scarce, offline data. We perform extensive experiments across several benchmark environments and RL methods. The consistent improvement of our approach, measured in terms of both state dynamics prediction and eventual reward, confirms the efficacy of our framework in leveraging limited historical data to simultaneously learn personalized policies across agents.

翻译:我们考虑在数据严重匮乏的情况下,与各种物剂进行离线强化学习(RL),在数据严重匮乏的情况下,我们考虑与各种物剂进行离线强化学习(RL),也就是说,我们只是在一个未知的、潜在的亚最佳政策下,观察每个物剂的单一历史轨迹。我们发现,由于数据供应有限,即使对于通常认为的“溶解”基准设置,例如“Mountaincar”和“CartPole”,最先进的离线强化学习(RL)方法也存在显著退化。为了应对这一挑战,我们建议PerSim(PerSim)是一种基于模型的离线RL方法,该方法首先通过在所有物剂之间集体使用历史轨迹来学习一个个性化模拟器。我们之所以这样做,是因为我们假设各种物剂之间的过渡动态可以作为与物剂、状态和行动相关的潜在因素的潜在功能;随后,我们理论上确定,这一功能与“低级”分解的物剂、状态和行动潜伏功能相近相近。这一表述表明,一个简单、正规的神经网络结构结构结构结构结构结构,以便有效地学习每个物剂的过渡性动态,我们测量了各种个人品力学的周期周期周期周期,甚至测量中测测测测测测测测测的个人性的个人动力。