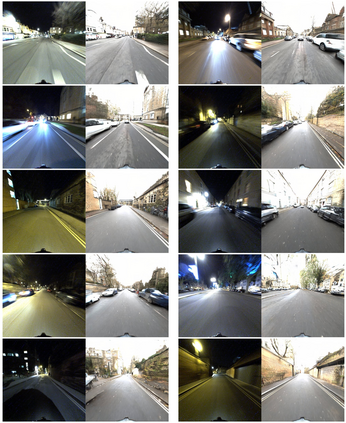

Visual localization is a key step in many robotics pipelines, allowing the robot to (approximately) determine its position and orientation in the world. An efficient and scalable approach to visual localization is to use image retrieval techniques. These approaches identify the image most similar to a query photo in a database of geo-tagged images and approximate the query's pose via the pose of the retrieved database image. However, image retrieval across drastically different illumination conditions, e.g. day and night, is still a problem with unsatisfactory results, even in this age of powerful neural models. This is due to a lack of a suitably diverse dataset with true correspondences to perform end-to-end learning. A recent class of neural models allows for realistic translation of images among visual domains with relatively little training data and, most importantly, without ground-truth pairings. In this paper, we explore the task of accurately localizing images captured from two traversals of the same area in both day and night. We propose ToDayGAN - a modified image-translation model to alter nighttime driving images to a more useful daytime representation. We then compare the daytime and translated night images to obtain a pose estimate for the night image using the known 6-DOF position of the closest day image. Our approach improves localization performance by over 250% compared the current state-of-the-art, in the context of standard metrics in multiple categories.

翻译:视觉本地化是许多机器人管道中的关键一步,让机器人(约)决定其在世界上的位置和方向。视觉本地化的一个高效且可伸缩的方法是使用图像检索技术。这些方法确定图像最类似于地理标记图像数据库中的查询照片,并且通过检索的数据库图像的外形来近似查询图像的外形。然而,在极端不同的照明条件下,例如白天和夜晚,图像的检索仍然是一个问题,即使在强大的神经模型时代,结果也不尽人意。这是因为缺少一个适当多样的数据集,并配有真实的对应来进行端对端学习。最近一类神经模型允许将图像以相对较少的培训数据在视觉区域进行现实的翻译,最重要的是,没有地面图案的配对。在本文中,我们探索从同一区域两处拍摄的图像准确本地化任务。我们建议TAYAYGAN - 一个经过修改的图像翻译模型,用来将夜间驱动图像转换为更有用的夜间图像。我们随后用最新的日历图像的翻译时间将每天和最接近的图像的图像化状况加以比较。我们用最新的图像的翻译,然后用最新的图像的一天和最接近的图像格式来改进我们所知道的每天的图像的图像的状态,然后用每天的图像的图像的翻译。