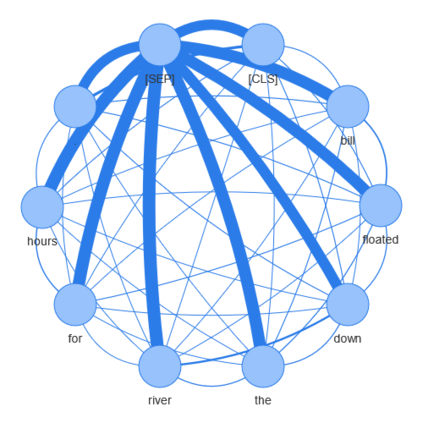

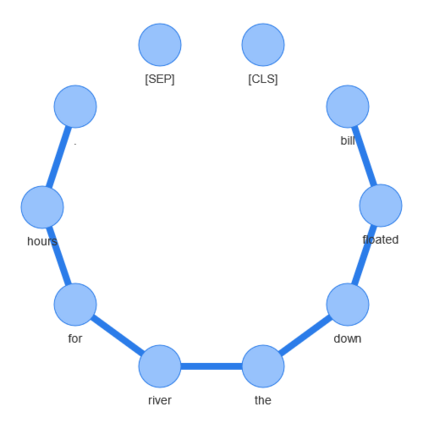

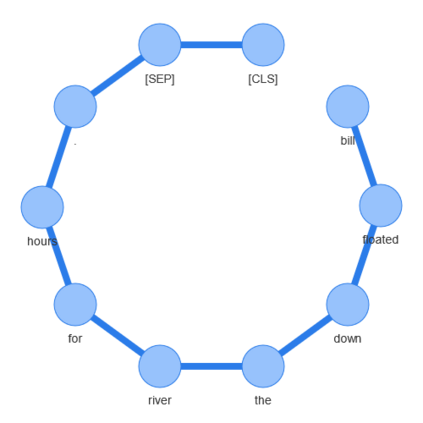

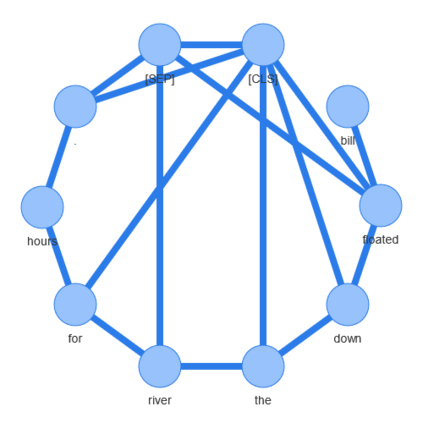

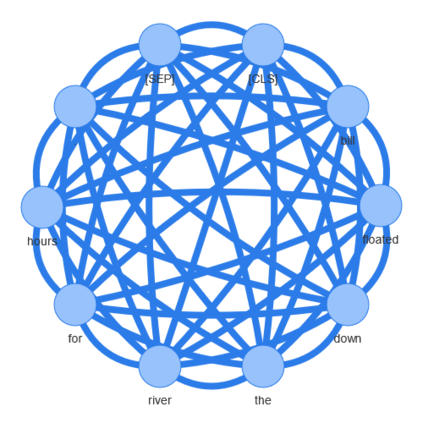

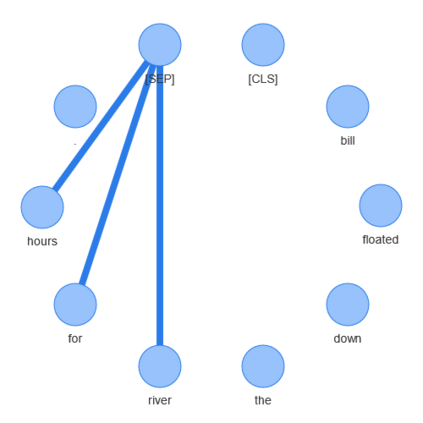

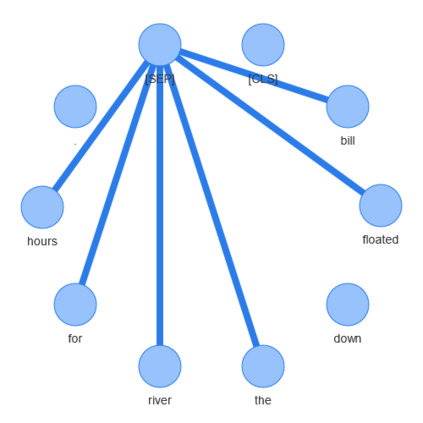

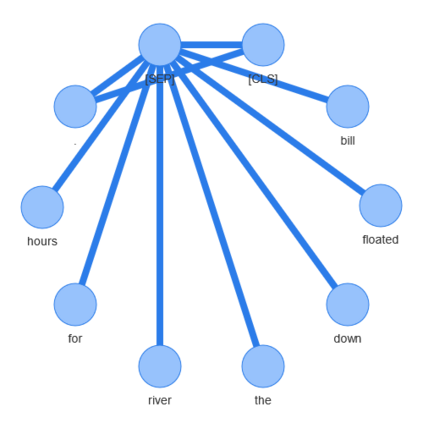

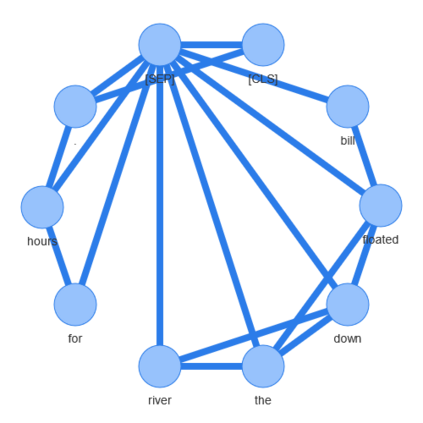

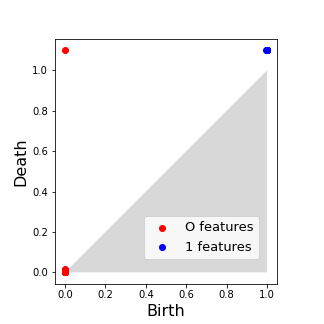

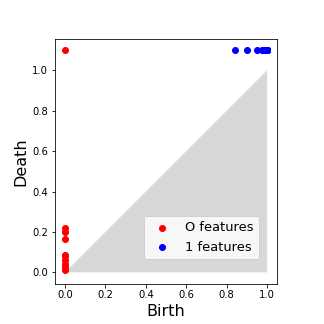

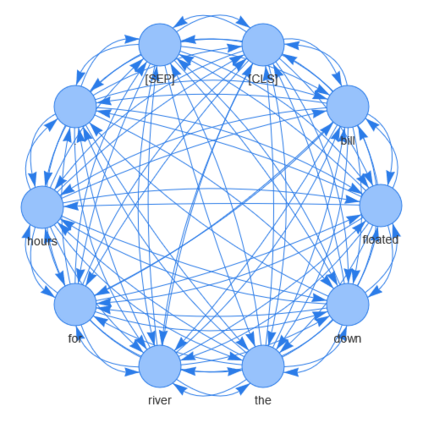

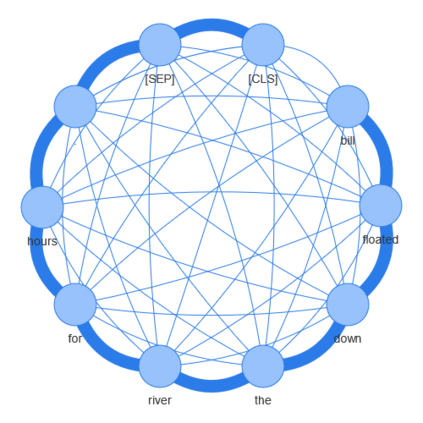

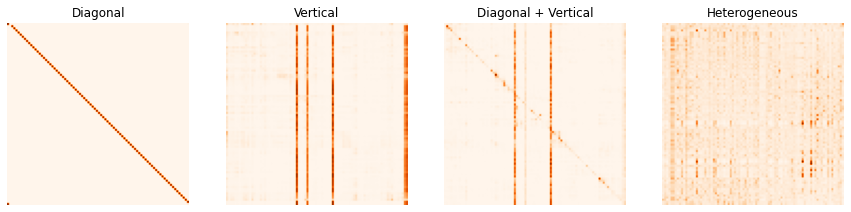

In recent years, the introduction of Transformer models sparked a revolution in natural language processing (NLP). BERT was one of the first text encoders to achieve state-of-the-art results on many NLP tasks using only the attention mechanism, without any recurrent components. This paper introduces a text classifier based on topological data analysis. The only input to this classifier is BERT's attention maps, transformed into attention graphs. The model can solve tasks such as distinguishing spam from ham (legitimate) messages, recognizing whether a sentence is grammatically correct, or classifying a movie review as negative or positive. It performs comparably to the BERT baseline and outperforms it on some tasks. Additionally, we propose a new method to reduce the number of BERT attention heads considered by the topological classifier, which allows us to prune the heads from 144 down to as few as ten with no reduction in performance. Our work also shows that the topological model is more robust to adversarial attacks than the original BERT model, and that this robustness is maintained during pruning. To the best of our knowledge, this work is the first to evaluate topology-based models against adversarial attacks in the context of NLP.
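To make the idea of "attention maps transformed into attention graphs" concrete, here is a minimal sketch under stated assumptions; it is not the authors' pipeline. It assumes that one head's attention map is an n × n matrix over tokens, that the graph is obtained by symmetrizing the map with `max(a[i][j], a[j][i])` and keeping edges at or above a threshold, and that the topological features are the graph's Betti numbers (b0 = connected components, b1 = independent cycles) sampled at a few hypothetical thresholds. The paper may instead use full persistent homology. The function names and threshold values are illustrative only. In BERT-base (12 layers × 12 heads = 144 heads), one would compute such a vector per head and concatenate the results as classifier input; head pruning would then simply drop some heads' features.

```python
from typing import List, Tuple

Matrix = List[List[float]]
Edge = Tuple[int, int]


def attention_to_graph(attn: Matrix, threshold: float) -> List[Edge]:
    """Hypothetical helper: turn an n x n attention map into an undirected edge list.

    Tokens are vertices. Edge (i, j) with i < j is kept when the symmetrized
    weight max(attn[i][j], attn[j][i]) is at least `threshold`.
    The diagonal (a token attending to itself) is ignored.
    """
    n = len(attn)
    edges = []
    for i in range(n):
        for j in range(i + 1, n):
            if max(attn[i][j], attn[j][i]) >= threshold:
                edges.append((i, j))
    return edges


def betti_numbers(n_vertices: int, edges: List[Edge]) -> Tuple[int, int]:
    """Betti numbers of a graph viewed as a 1-dimensional complex.

    b0 = number of connected components (computed with union-find),
    b1 = number of independent cycles = |E| - |V| + b0.
    """
    parent = list(range(n_vertices))

    def find(x: int) -> int:
        # Path halving keeps the union-find trees shallow.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    components = n_vertices
    for u, v in edges:
        ru, rv = find(u), find(v)
        if ru != rv:
            parent[ru] = rv
            components -= 1
    b0 = components
    b1 = len(edges) - n_vertices + b0
    return b0, b1


def topological_features(attn: Matrix, thresholds: List[float]) -> List[int]:
    """Hypothetical per-head feature vector: [b0, b1] at each threshold, concatenated."""
    n = len(attn)
    features: List[int] = []
    for t in thresholds:
        b0, b1 = betti_numbers(n, attention_to_graph(attn, t))
        features.extend([b0, b1])
    return features


# Toy 4-token attention map (rows sum to 1, as after a softmax).
example_attn = [
    [0.70, 0.20, 0.05, 0.05],
    [0.30, 0.40, 0.20, 0.10],
    [0.10, 0.10, 0.60, 0.20],
    [0.40, 0.10, 0.10, 0.40],
]
print(topological_features(example_attn, [0.25, 0.15, 0.05]))  # [2, 0, 1, 1, 1, 3]
```

Lowering the threshold adds edges, so components merge (b0 falls) and cycles appear (b1 rises). Reading these counts across thresholds is a coarse stand-in for the persistence information a full persistent-homology library would compute.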

翻译:近年来,采用变换模型引发了自然语言处理(NLP)的革命。BERT是第一批文字编码器之一,它仅使用关注机制,而没有任何经常性部分,用于实现许多NLP任务的最新结果。本文介绍了一个使用地形数据分析的文本分类器。我们用BERT的注意地图转换成关注图作为该分类器的唯一输入。该模型可以解决区分垃圾邮件和废纸信息等任务,承认一个句子是否正确,或者将电影审查评价为负或正。它与BERT基线相对应,并在某些任务上优于它。此外,我们提出了一个新的方法来减少由地形分类师审议的BERT的注意头数目,这使我们能够将头数从144个降低到不到10个,而没有减少性能。我们的工作还表明,与最初的BERT模型相比,对对抗对抗性攻击的力度要高一些,而最初的BERT模型是在运行过程中保持的。我们的最佳的对抗性攻击模型是顶级模型。