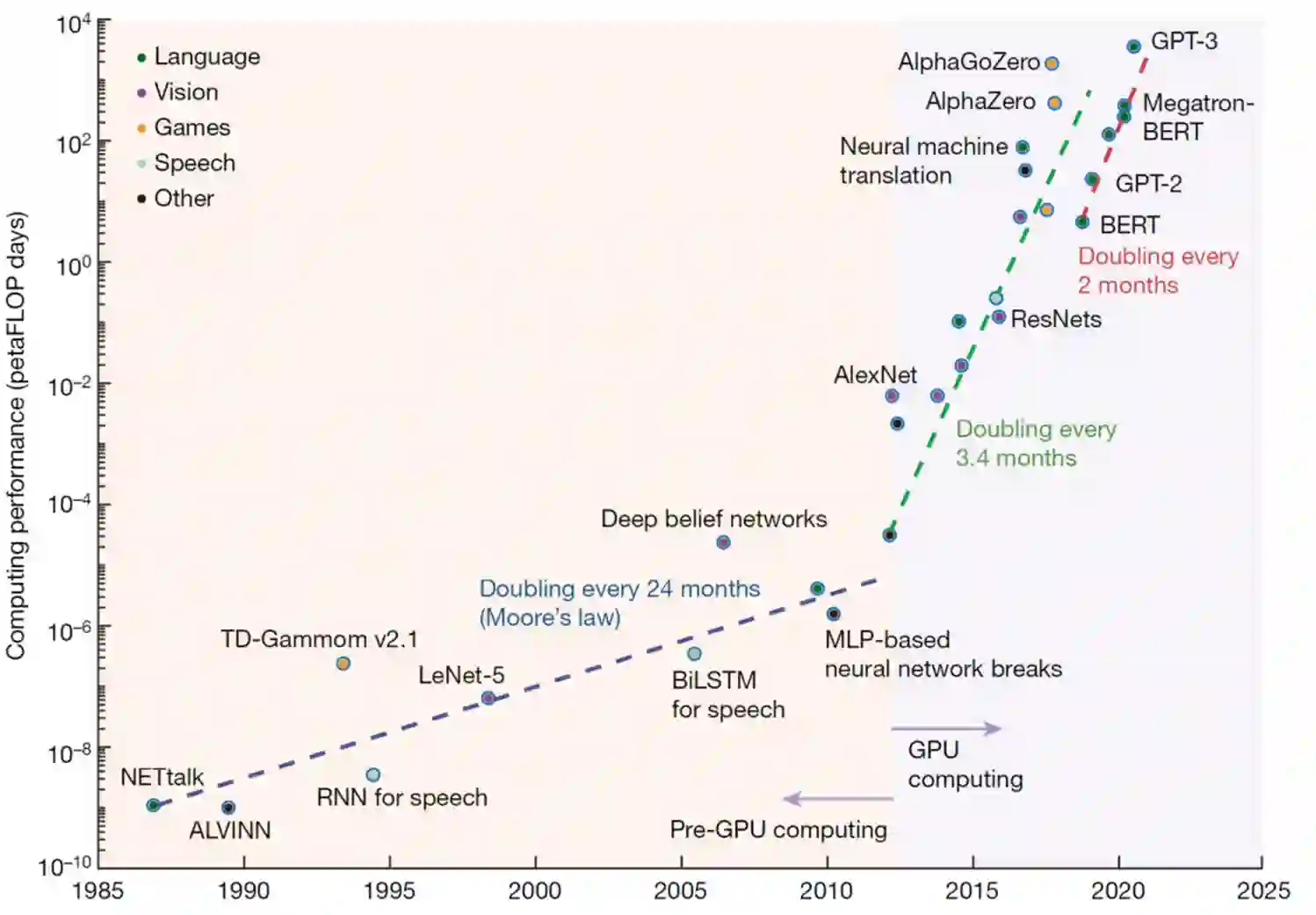

Large Language Models (LLMs) have been transformative. They are pre-trained foundational models that are self-supervised and can be adapted with fine-tuning to a wide range of natural language tasks, each of which previously would have required a separate network model. This is one step closer to the extraordinary versatility of human language. GPT-3 and, more recently, LaMDA can carry on dialogs with humans on many topics after minimal priming with a few examples. However, there has been a wide range of reactions as to whether these LLMs understand what they are saying or exhibit signs of intelligence. This high variance is exhibited in three interviews with LLMs that reached wildly different conclusions. A new possibility has been uncovered that could explain this divergence. What appears to be intelligence in LLMs may in fact be a mirror that reflects the intelligence of the interviewer, a remarkable twist that could be considered a Reverse Turing Test. If so, then by studying interviews we may be learning more about the intelligence and beliefs of the interviewer than about the intelligence of the LLMs. As LLMs become more capable, they may transform the way we interact with machines and how machines interact with each other. LLMs can talk the talk, but can they walk the walk?

翻译:大型语言模型(LLMS)已经具有变革性。它们是经过预先训练的基本模型,是自我监督的,可以经过细微调整,适应广泛的自然语言任务,每个自然任务都需要一个单独的网络模型。这是接近人类语言超常多功能的一步。GPT-3和最近LAMDA可以与人类就许多专题进行的对话,但只举几个例子。然而,对于这些LMS是否理解他们正在说什么或表现出智慧的迹象,人们有着广泛的反应。在与LLMS进行的三次访谈中,这种差异很大,得出了截然不同的结论。发现了一种新的可能性,可以解释这种差异。LMS中似乎具有的智力可能是一种反映采访者智慧的镜子,一种显著的曲折,可以被认为是反转动的图解试验。如果是这样的话,那么通过研究访谈,我们可能比LMS的智慧更多地了解采访者的智慧和信仰。LMS变得更有能力,但是他们可以改变我们与机器互动的方式,他们可以与每个LMS交谈的方式?LMS交谈?