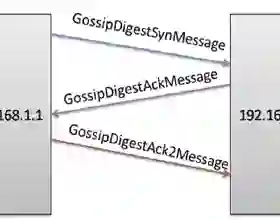

In recent years, decentralized learning has emerged as a powerful tool not only for large-scale machine learning, but also for preserving privacy. One of the key challenges in decentralized learning is that the data distribution held by each node is statistically heterogeneous. To address this challenge, the primal-dual algorithm called the Edge-Consensus Learning (ECL) was proposed and was experimentally shown to be robust to the heterogeneity of data distributions. However, the convergence rate of the ECL is provided only when the objective function is convex, and has not been shown in a standard machine learning setting where the objective function is non-convex. Furthermore, the intuitive reason why the ECL is robust to the heterogeneity of data distributions has not been investigated. In this work, we first investigate the relationship between the ECL and Gossip algorithm and show that the update formulas of the ECL can be regarded as correcting the local stochastic gradient in the Gossip algorithm. Then, we propose the Generalized ECL (G-ECL), which contains the ECL as a special case, and provide the convergence rates of the G-ECL in both (strongly) convex and non-convex settings, which do not depend on the heterogeneity of data distributions. Through synthetic experiments, we demonstrate that the numerical results of both the G-ECL and ECL coincide with the convergence rate of the G-ECL.

翻译:近年来,分散化学习不仅成为大规模机器学习的有力工具,而且也成为保护隐私的有力工具。分散化学习的主要挑战之一是每个节点掌握的数据分布在统计上是多种多样的。为了应对这一挑战,提出了称为“边缘-consensus Learning (ECL)”的原始双算法,并实验性地证明它对于数据分布的异质性具有很强的作用。然而,ECL的趋同率只有在目标函数为Convex时才能提供,而没有在标准机学习设置中显示目标函数为非连接值。此外,没有调查ECL对数据分布的异质性具有很强的直观原因。在这项工作中,我们首先调查ECL和Gossip算法之间的关系,并表明EC的更新公式可被视为纠正Gosip算法中本地的随机梯度。然后,我们提议通用ECL(G-ECLL)的趋同性(EC-L),其中包含EC的趋同性率,而GL的趋同性率则显示GL的不及GL的趋同性。