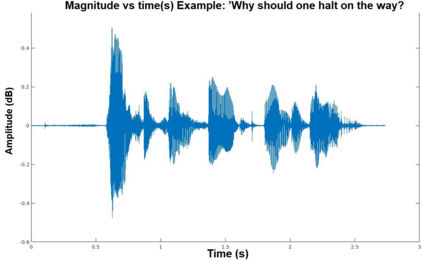

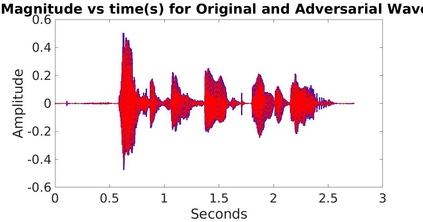

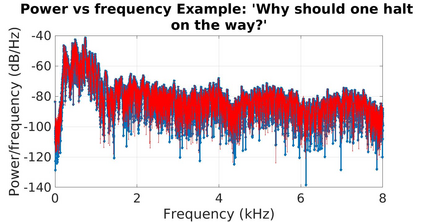

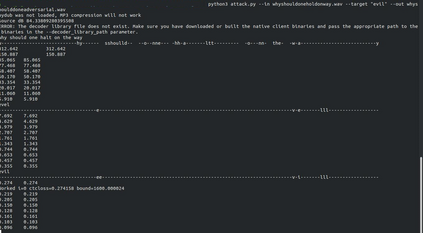

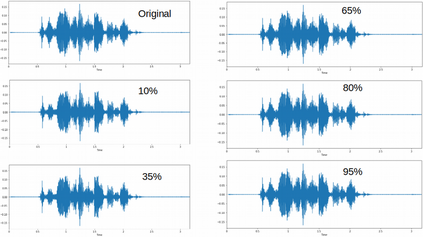

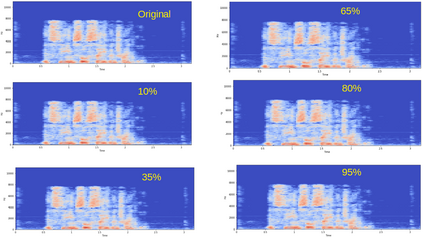

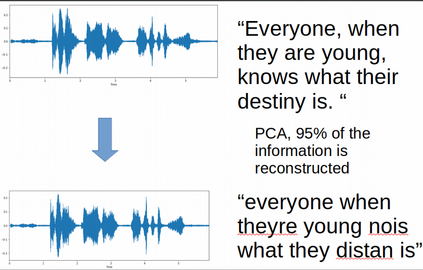

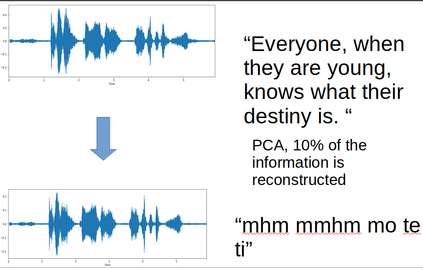

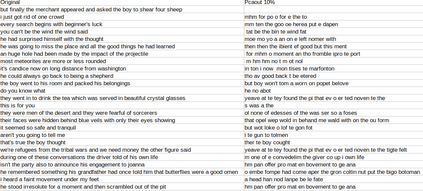

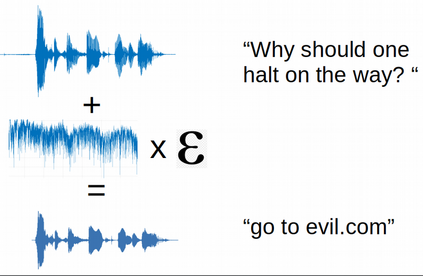

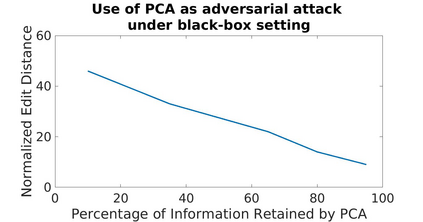

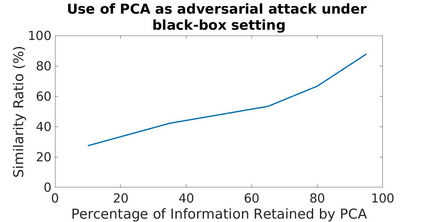

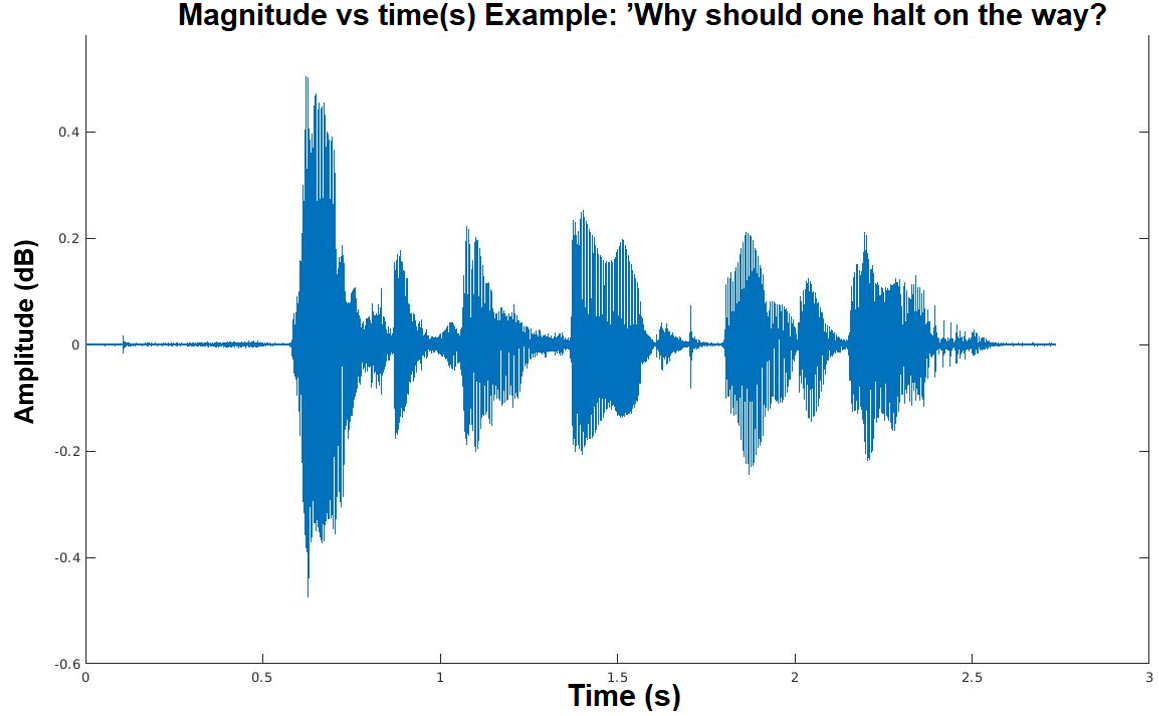

Adversarial attacks are inputs that closely resemble original inputs but have been altered on purpose. Speech-to-text neural networks in wide use today are prone to misclassifying adversarial inputs. In this study, we first investigate targeted adversarial attacks by altering waveforms from the Common Voice dataset. We craft adversarial waveforms using the Connectionist Temporal Classification (CTC) loss function and attack DeepSpeech, a speech-to-text neural network implemented by Mozilla. We achieve a 100% adversarial success rate (zero successful classifications by DeepSpeech) on all 25 adversarial waveforms that we crafted. Second, we investigate the use of PCA as a defense mechanism against adversarial attacks. We reduce dimensionality by applying PCA to the 25 adversarial waveforms that we created and test the results against DeepSpeech. We again observe zero successful classifications by DeepSpeech, which suggests that PCA is not a good defense mechanism in the audio domain. Finally, instead of using PCA as a defense mechanism, we use it to craft adversarial inputs in a black-box setting with minimal adversarial knowledge. With no knowledge of the model, its parameters, or its weights, we craft adversarial inputs by applying PCA to samples from the Common Voice dataset and again achieve a 100% adversarial success rate when testing against DeepSpeech. We also experiment with different percentages of components necessary to result in a classification during the attack process. In all cases, the adversary is successful.
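To make the PCA step concrete, here is a minimal sketch of projecting a waveform onto a fraction of its principal components and reconstructing it. The abstract does not say how PCA is applied to the audio, so the framing scheme, the function name `pca_reconstruct`, and the parameters `frame_len` and `keep_fraction` are all illustrative assumptions, not the authors' implementation.

```python
import numpy as np


def pca_reconstruct(waveform, frame_len=256, keep_fraction=0.5):
    """Reconstruct a 1-D waveform from a fraction of its principal components.

    Assumption (not from the paper): the waveform is cut into fixed-length
    frames, and PCA is computed across those frames via an SVD.
    """
    x = np.asarray(waveform, dtype=np.float64)
    n = x.shape[0]

    # Zero-pad so the signal splits evenly into frames of length frame_len.
    n_frames = -(-n // frame_len)  # ceiling division
    padded = np.zeros(n_frames * frame_len)
    padded[:n] = x
    frames = padded.reshape(n_frames, frame_len)

    # PCA via SVD of the mean-centered frame matrix.
    mean = frames.mean(axis=0)
    u, s, vt = np.linalg.svd(frames - mean, full_matrices=False)

    # Keep the top-k components, with k set by the requested fraction.
    k = max(1, int(round(keep_fraction * s.shape[0])))
    approx = (u[:, :k] * s[:k]) @ vt[:k] + mean

    # Flatten back to a waveform and drop the padding.
    return approx.reshape(-1)[:n]


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    wave = rng.standard_normal(16000)  # stand-in for one second of 16 kHz audio
    for fraction in (1.0, 0.5, 0.1):
        out = pca_reconstruct(wave, frame_len=256, keep_fraction=fraction)
        print(fraction, float(np.mean((out - wave) ** 2)))
```

In an experiment of the kind described above, the reconstructed waveform would then be passed to the speech-to-text model and its transcription compared with the original one. That step is omitted here because it depends on the model version and its API.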
