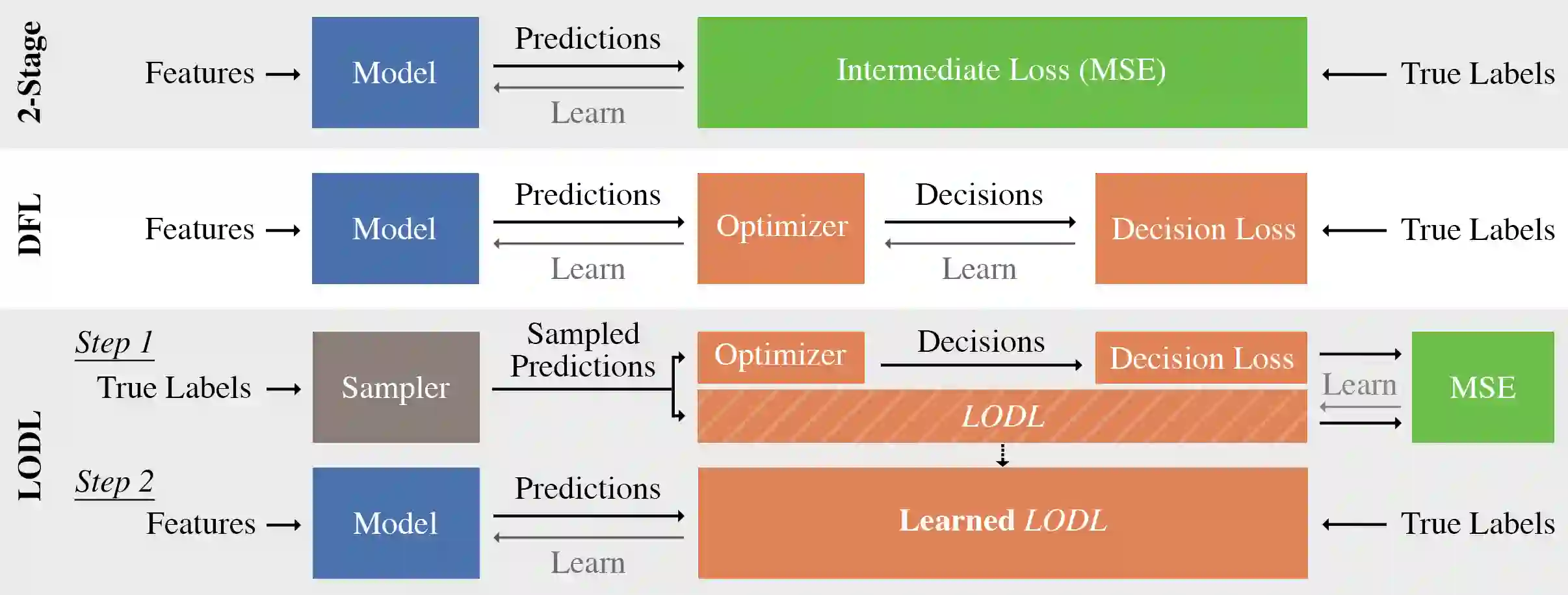

Decision-Focused Learning (DFL) is a paradigm for tailoring a predictive model to the downstream optimization task that uses its predictions, so that the model performs better on that specific task. The main technical challenge associated with DFL is that it requires differentiating through the optimization problem, which is difficult because of discontinuous solutions and other challenges. Past work has largely gotten around this issue by handcrafting task-specific surrogates to the original optimization problem that provide informative gradients when differentiated through. However, the need to handcraft surrogates for each new task limits the usability of DFL. In addition, there are often no guarantees about the convexity of the resulting surrogates and, as a result, training a predictive model using them can lead to inferior local optima. In this paper, we do away with surrogates altogether and instead learn loss functions that capture task-specific information. To the best of our knowledge, ours is the first approach that entirely replaces the optimization component of decision-focused learning with a loss that is automatically learned. Our approach (a) only requires access to a black-box oracle that can solve the optimization problem and is thus generalizable, and (b) can be convex by construction and so can be easily optimized over. We evaluate our approach on three resource allocation problems from the literature and find that it outperforms learning without taking task structure into account in all three domains, and even outperforms handcrafted surrogates from the literature.
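To make the idea of a learned loss that is convex by construction concrete, here is a minimal sketch. It is not the paper's implementation. The abstract does not specify the loss family, the sampling scheme, or the tasks' solvers, so everything below is an assumption chosen for illustration: a hypothetical top-k selection oracle stands in for the black-box solver, candidate predictions are sampled by adding Gaussian noise to the true labels, and the learned loss is a weighted squared error whose weights are fit by least squares and clipped to be nonnegative.

```python
import numpy as np

def topk_oracle(y_hat, k=2):
    """Hypothetical black-box solver: select the k items with the highest predicted value."""
    z = np.zeros_like(y_hat, dtype=float)
    z[np.argsort(-y_hat)[:k]] = 1.0
    return z

def decision_regret(y_hat, y_true, k=2):
    """Objective value lost by acting on y_hat instead of y_true (always >= 0)."""
    best = y_true @ topk_oracle(y_true, k)
    achieved = y_true @ topk_oracle(y_hat, k)
    return float(best - achieved)

def fit_weighted_mse(y_true, k=2, n_samples=2000, noise=0.5, seed=0):
    """Fit per-coordinate weights w so that sum_i w_i * (y_hat_i - y_i)^2
    approximates the regret of predictions sampled around y_true.
    Only oracle calls are needed; nothing is differentiated through."""
    rng = np.random.default_rng(seed)
    y_samples = y_true + noise * rng.standard_normal((n_samples, y_true.size))
    regrets = np.array([decision_regret(y, y_true, k) for y in y_samples])
    features = (y_samples - y_true) ** 2
    w, *_ = np.linalg.lstsq(features, regrets, rcond=None)
    # Clipping negative weights to zero is a crude stand-in for a constrained fit;
    # nonnegative weights make the loss convex in y_hat.
    return np.clip(w, 0.0, None)

def learned_loss(y_hat, y_true, w):
    """Convex surrogate loss used to train the predictive model."""
    return float(np.sum(w * (y_hat - y_true) ** 2))

y_true = np.array([1.0, 0.9, 0.1, 0.0])
w = fit_weighted_mse(y_true)
print("learned weights:", w)
print("loss at a perturbed prediction:", learned_loss(y_true + 0.3, y_true, w))
```

Because the weights are nonnegative, the resulting loss is a convex function of the prediction and can be minimized with ordinary gradient-based training, while the solver itself is only ever called as a black box.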
