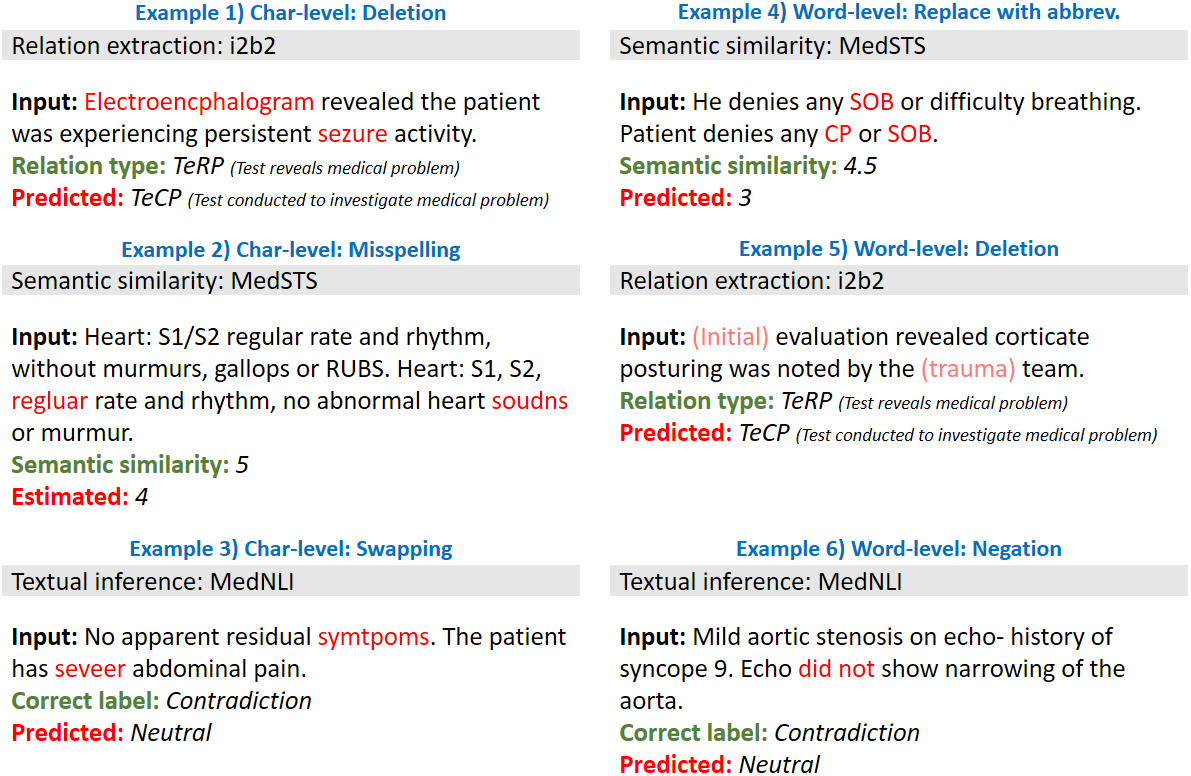

Artificial Intelligence (AI) systems are attracting increasing interest in the medical domain due to their ability to learn complicated tasks that require human intelligence and expert knowledge. AI systems that utilize high-performance Natural Language Processing (NLP) models have achieved state-of-the-art results on a wide variety of clinical text processing benchmarks. They have even outperformed human accuracy on some tasks. However, performance evaluation of such AI systems have been limited to accuracy measures on curated and clean benchmark datasets that may not properly reflect how robustly these systems can operate in real-world situations. In order to address this challenge, we introduce and implement a wide variety of perturbation methods that simulate different types of noise and variability in clinical text data. While noisy samples produced by these perturbation methods can often be understood by humans, they may cause AI systems to make erroneous decisions. Conducting extensive experiments on several clinical text processing tasks, we evaluated the robustness of high-performance NLP models against various types of character-level and word-level noise. The results revealed that the NLP models performance degrades when the input contains small amounts of noise. This study is a significant step towards exposing vulnerabilities of AI models utilized in clinical text processing systems. The proposed perturbation methods can be used in performance evaluation tests to assess how robustly clinical NLP models can operate on noisy data, in real-world settings.

翻译:人工智能(AI)系统由于能够学习需要人类情报和专业知识的复杂任务,因而对医疗领域越来越感兴趣。使用高性能自然语言处理模型的人工智能系统在广泛的临床文本处理基准方面取得了最先进的结果,甚至在某些任务方面超过了人的准确性。然而,这种人工智能系统的业绩评价限于对经整理和干净的基准数据集的精确度措施,这些系统可能无法适当反映这些系统在现实世界中能够如何强有力地运作。为了应对这一挑战,我们采用并采用多种干扰性方法,模拟临床文本数据中不同类型的噪音和变异性。虽然这些扰动性方法产生的噪音样本往往为人类所理解,但它们可能导致人工智能系统作出错误的决定。在对若干临床文本处理任务进行广泛的实验时,我们评估了高性能的自然智能数据模型在各种类型性能和字级噪音中是否稳健健。结果显示,NLP模型在输入含有少量噪音时,其性能会降低。在临床文本处理过程中如何在临床处理中采用一种重大的性能测试方法。