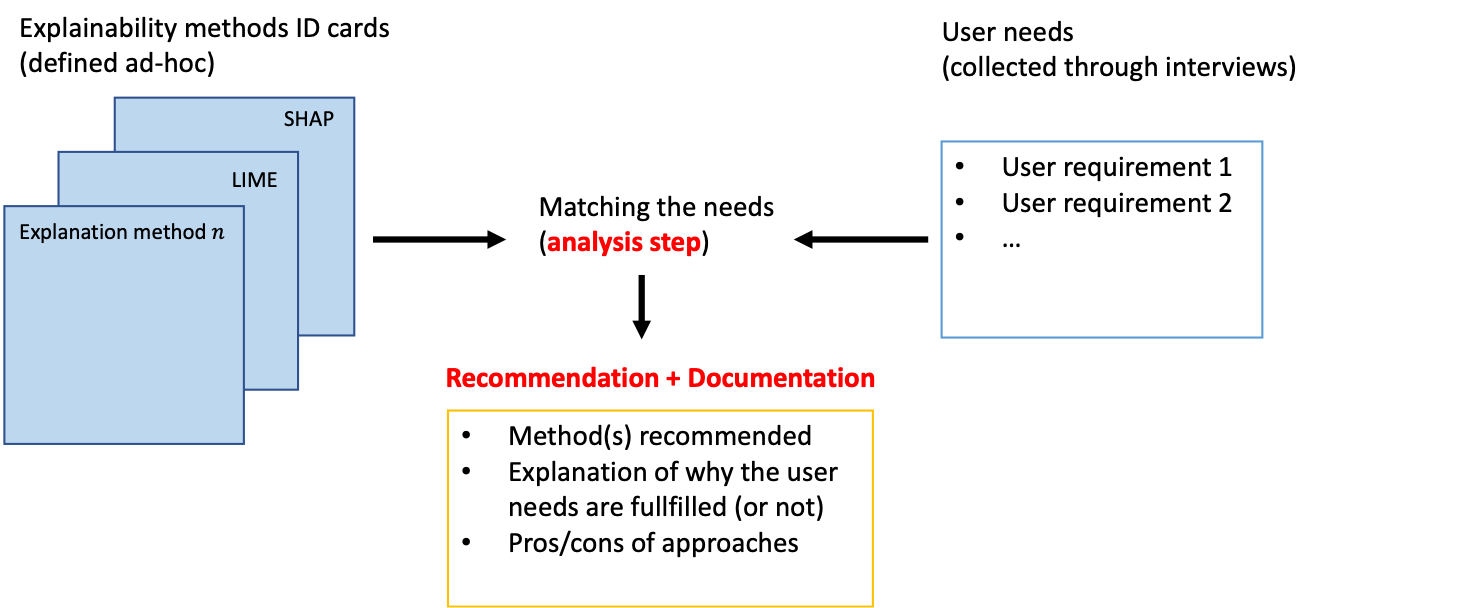

Explainability is becoming an important requirement for organizations that use automated decision-making, driven by regulatory initiatives and a shift in public awareness. Many significantly different algorithmic methods for providing explainability have been introduced, but the machine learning literature has paid little attention to stakeholders, whose needs are instead studied in the human-computer interface community. As a result, organizations that want or need to provide explainability face the problem of selecting an appropriate method for their use case. In this paper, we argue that a methodology is needed to bridge the gap between stakeholder needs and explanation methods. We present our ongoing work on this methodology, which aims to help data scientists provide explainability to stakeholders. In particular, our contributions include the documents used to characterize XAI methods and user requirements (shown in the Appendix), on which our methodology builds.
