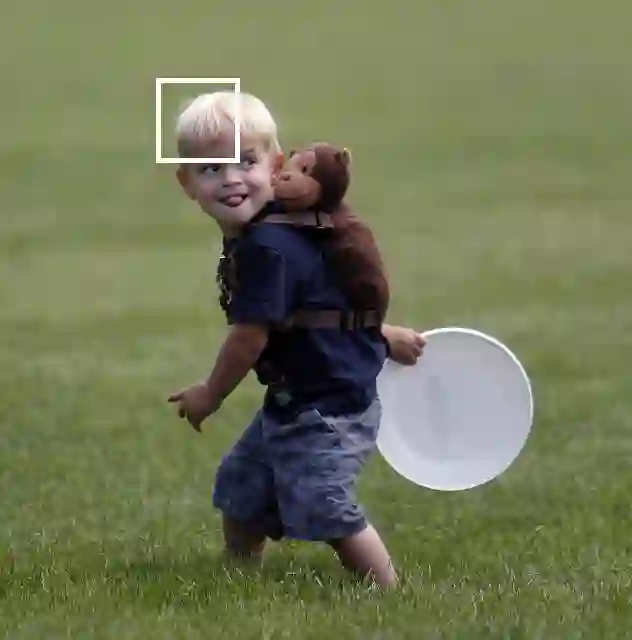

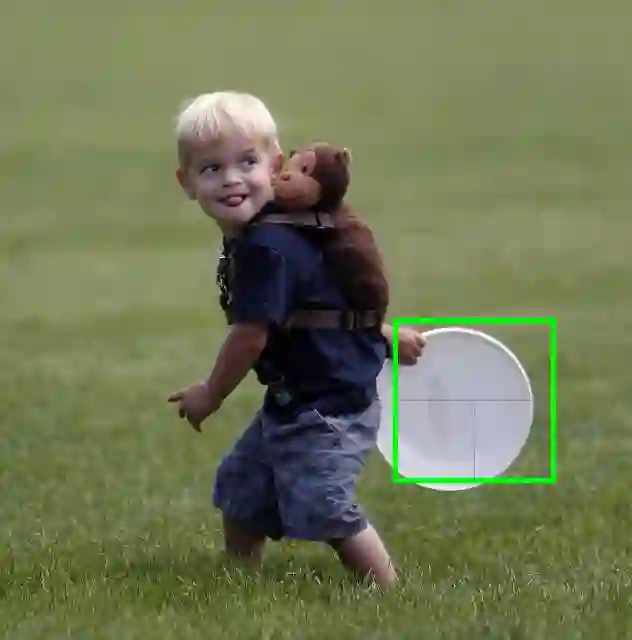

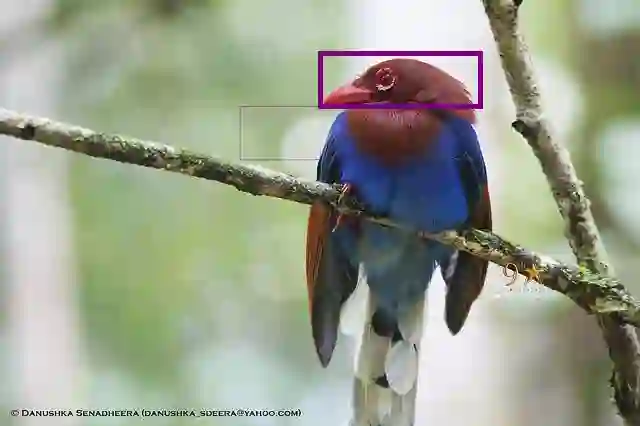

Existing attention mechanisms are trained to attend to individual items in a collection (the memory) with a predefined, fixed granularity, e.g., a word token or an image grid cell. We propose area attention: a way to attend to areas in the memory, where each area contains a group of items that are structurally adjacent, e.g., spatially for a 2D memory such as an image, or temporally for a 1D memory such as a natural language sentence. Importantly, the shape and size of an area are determined dynamically through learning, which enables a model to attend to information at varying granularity. Area attention works readily with existing model architectures such as multi-head attention, allowing a model to attend to multiple areas in the memory simultaneously. We evaluate area attention on two tasks, neural machine translation (both character- and token-level) and image captioning, and improve upon strong (state-of-the-art) baselines in all cases. These improvements are obtained with a basic, parameter-free form of area attention.
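The abstract leaves the mechanics implicit, so below is a minimal NumPy sketch of the parameter-free variant for a single query, written under stated assumptions rather than as the authors' implementation. It assumes that an area's key is the mean of its items' keys and that its value is the sum of its items' values, which is how we read the paper's basic form. Candidate areas are taken to be all axis-aligned rectangles up to a maximum height and width. Per-area sums come from a summed-area table, so each area costs O(1) to evaluate. The names `area_attention`, `summed_area_table`, `enumerate_areas`, `rect_sum`, `max_h` and `max_w` are hypothetical. A 1D memory such as a sentence is handled as a grid of height 1.

```python
import numpy as np


def summed_area_table(x):
    """Integral image with a zero row/column prepended: s[i, j] = x[:i, :j].sum(axis=(0, 1))."""
    h, w, d = x.shape
    s = np.zeros((h + 1, w + 1, d))
    s[1:, 1:] = x.cumsum(axis=0).cumsum(axis=1)
    return s


def enumerate_areas(h, w, max_h, max_w):
    """All rectangles (r0, c0, r1, c1), end-exclusive, of size up to max_h x max_w."""
    areas = []
    for ah in range(1, max_h + 1):
        for aw in range(1, max_w + 1):
            for r in range(h - ah + 1):  # empty range if ah > h
                for c in range(w - aw + 1):
                    areas.append((r, c, r + ah, c + aw))
    return areas


def rect_sum(s, r0, c0, r1, c1):
    """Sum of the original items in rows r0:r1, cols c0:c1 via the four-corner lookup."""
    return s[r1, c1] - s[r0, c1] - s[r1, c0] + s[r0, c0]


def area_attention(query, keys, values, max_h=1, max_w=3):
    """Parameter-free area attention for one query (illustrative sketch).

    query: (d,); keys: (H, W, d); values: (H, W, dv).
    Returns (output of shape (dv,), attention weights over areas, list of areas).
    """
    h, w, d = keys.shape
    sk, sv = summed_area_table(keys), summed_area_table(values)
    areas = enumerate_areas(h, w, max_h, max_w)
    # Assumed basic form: area key = mean of item keys, area value = sum of item values.
    area_keys = np.stack(
        [rect_sum(sk, *a) / ((a[2] - a[0]) * (a[3] - a[1])) for a in areas]
    )
    area_values = np.stack([rect_sum(sv, *a) for a in areas])
    # Scaled dot-product scores over areas, followed by a numerically stable softmax.
    logits = area_keys @ query / np.sqrt(d)
    weights = np.exp(logits - logits.max())
    weights /= weights.sum()
    return weights @ area_values, weights, areas


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    keys = rng.normal(size=(1, 5, 4))    # a 1D memory of 5 items, key dim 4
    values = rng.normal(size=(1, 5, 2))  # value dim 2
    query = rng.normal(size=4)
    out, weights, areas = area_attention(query, keys, values, max_h=1, max_w=3)
    print(len(areas))  # 12 areas: 5 of width 1, 4 of width 2, 3 of width 3
```

With `max_h = max_w = 1`, every area is a single item, and the function reduces to ordinary scaled dot-product attention, which makes a convenient sanity check. The Python loops are there for readability. A practical version would vectorize the area enumeration and run once per head inside multi-head attention.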