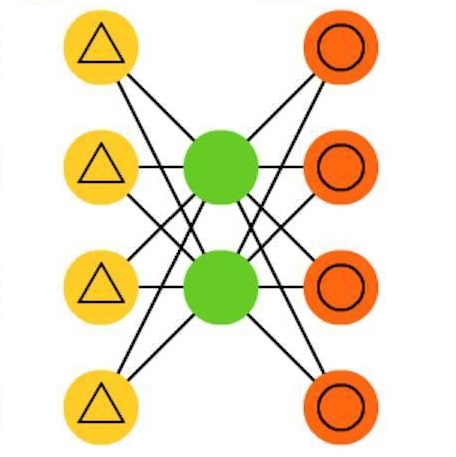

Recently, universal neural machine translation (NMT) with a shared encoder-decoder has achieved good performance on zero-shot translation. Unlike universal NMT, jointly trained language-specific encoder-decoders aim to achieve a universal representation across non-shared modules, each of which serves a single language or language family. The non-shared architecture has the advantage of mitigating internal language competition, especially when the shared vocabulary and the model parameters are restricted in size. However, the zero-shot translation performance of multiple encoders and decoders still lags behind that of universal NMT. In this work, we study zero-shot translation using language-specific encoder-decoders. We propose to generalize the non-shared architecture and universal NMT by differentiating the Transformer layers into language-specific and interlingua layers. By selectively sharing parameters and applying cross-attention, we explore how to maximize representation universality and achieve the best alignment of language-agnostic information. We also introduce a denoising auto-encoding (DAE) objective and train the model on it jointly with the translation task in a multi-task manner. Experiments on two public multilingual parallel datasets show that our proposed model achieves competitive or better results than universal NMT and a strong pivot baseline. Moreover, we experiment with incrementally adding a new language to the trained model by updating only the new model parameters. With this little effort, zero-shot translation between the newly added language and the existing languages achieves results comparable to those of a model trained jointly from scratch on all languages.
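
To make the split between language-specific and interlingua layers concrete, here is a minimal sketch in plain Python. It is illustrative only and not the authors' implementation: the `Layer` and `LayeredModel` names, the layer counts, the placement of language-specific layers below the interlingua layers, and the choice to freeze the interlingua layers when a language is added are all assumptions. The abstract states only that some layers are shared and that just the new parameters are updated.

```python
from dataclasses import dataclass


@dataclass
class Layer:
    """Hypothetical stand-in for one Transformer layer.

    `trainable` mimics a gradient on/off switch; no real weights are stored.
    """
    name: str
    trainable: bool = True


class LayeredModel:
    """Hypothetical layout: per-language layers feeding shared interlingua layers."""

    def __init__(self, languages, n_specific=2, n_shared=2):
        self.n_specific = n_specific
        # One set of interlingua layers, shared by every language.
        self.interlingua = [Layer(f"interlingua.{i}") for i in range(n_shared)]
        # Language-specific layers, one private stack per language.
        self.specific = {}
        for lang in languages:
            self.add_language(lang)

    def add_language(self, lang, freeze_existing=False):
        """Create private layers for `lang`; optionally freeze all older layers."""
        if freeze_existing:
            for layer in self.all_layers():
                layer.trainable = False
        self.specific[lang] = [
            Layer(f"{lang}.{i}") for i in range(self.n_specific)
        ]

    def encoder_stack(self, lang):
        """Layers an input in `lang` passes through (ordering is an assumption)."""
        return self.specific[lang] + self.interlingua

    def all_layers(self):
        layers = list(self.interlingua)
        for stack in self.specific.values():
            layers.extend(stack)
        return layers

    def trainable_names(self):
        return [layer.name for layer in self.all_layers() if layer.trainable]


model = LayeredModel(["en", "de"])
# The top layer is the same object for both languages: it is shared.
shared = model.encoder_stack("en")[-1] is model.encoder_stack("de")[-1]
# The bottom layer differs per language: it is not shared.
private = model.encoder_stack("en")[0] is not model.encoder_stack("de")[0]

# Incremental addition: only the new language's layers remain trainable.
model.add_language("fr", freeze_existing=True)
print(shared, private, model.trainable_names())
```

In this sketch, adding `fr` leaves every existing layer untouched, which mirrors the incremental setting described in the abstract; a real model would replace `Layer` with actual Transformer layers and apply the same pattern to the decoder.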
