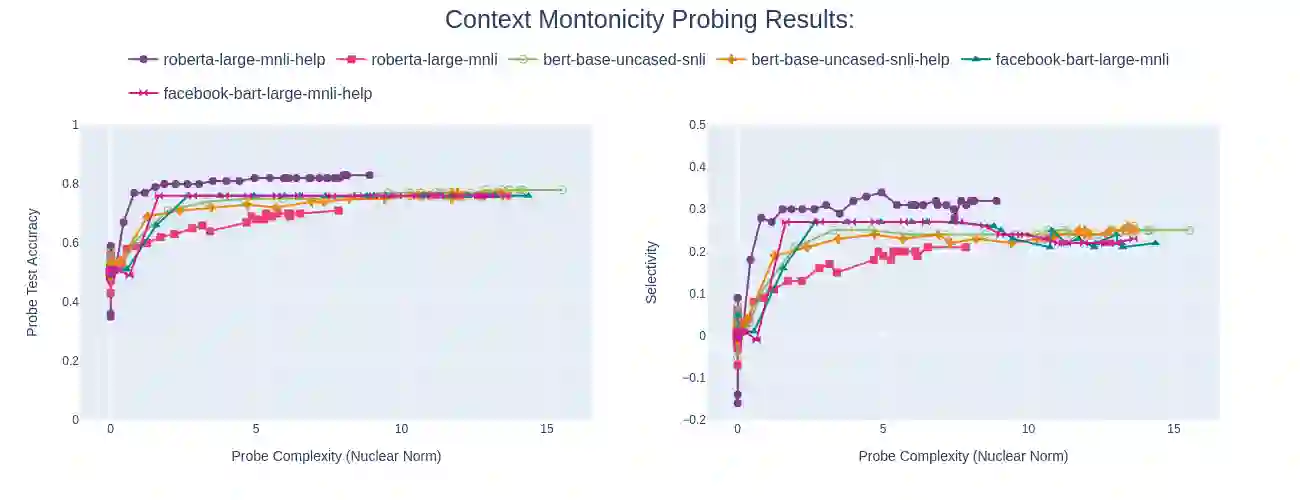

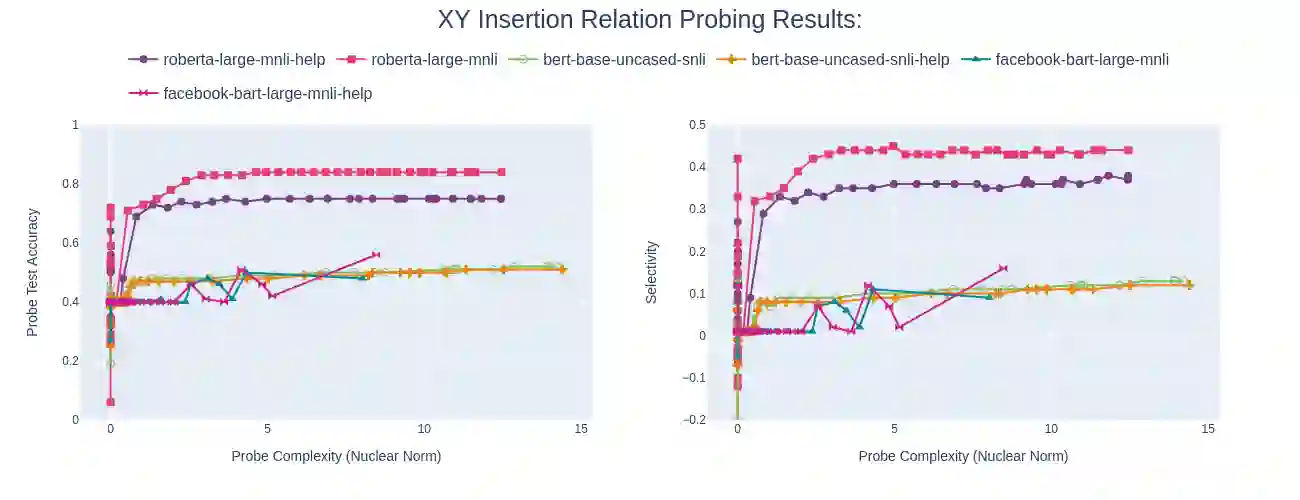

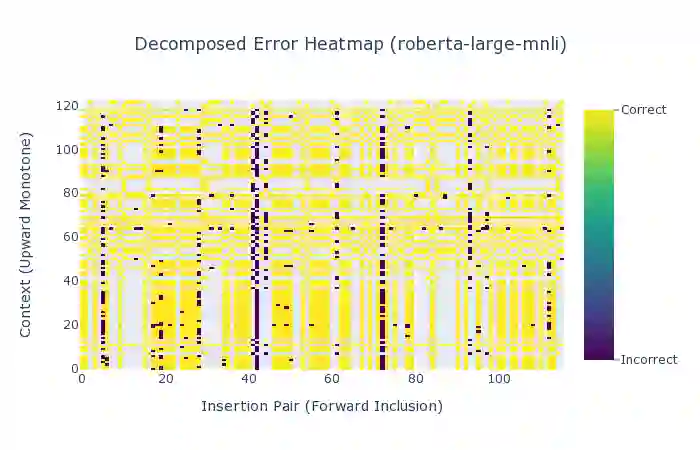

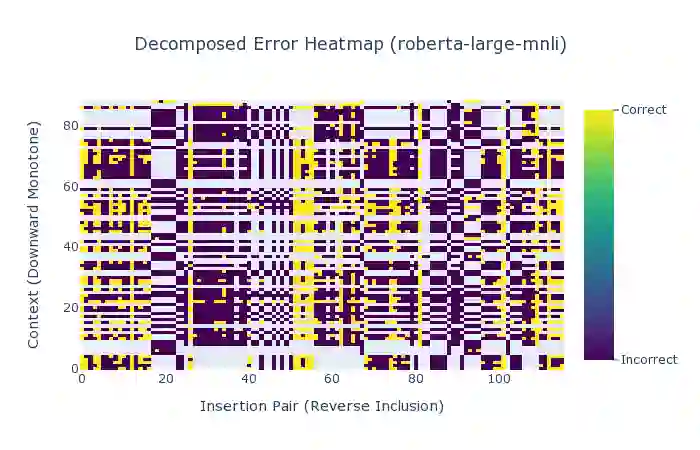

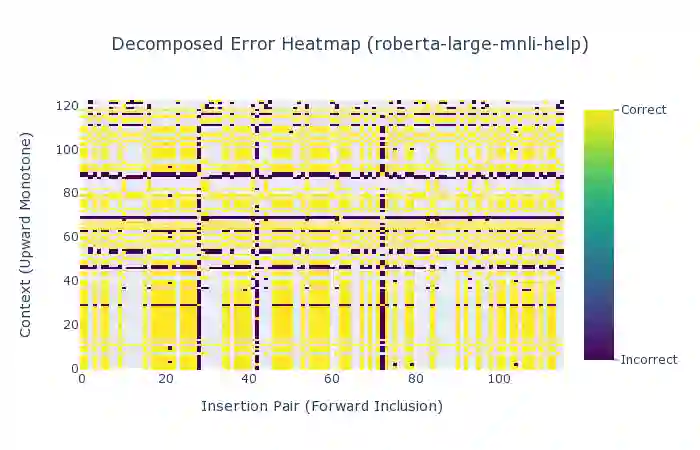

To interpret neural NLI models and their reasoning strategies, we carry out a systematic probing study that investigates whether these models capture the semantic features central to natural logic: monotonicity and concept inclusion. Correctly identifying valid inferences in downward-monotone contexts is a known stumbling block for NLI performance, subsuming linguistic phenomena such as negation scope and generalized quantifiers. To understand this difficulty, we emphasize monotonicity as a property of a context and examine the extent to which models capture monotonicity information in the contextual embeddings that are intermediate to their decision-making process. Drawing on recent advances in the probing paradigm, we compare the presence of monotonicity features across various models. We find that monotonicity information is notably weak in the representations of popular NLI models that achieve high scores on benchmarks, and observe that previous improvements to these models based on fine-tuning strategies have introduced stronger monotonicity features together with their improved performance on challenge sets.
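To make the two natural-logic features concrete, the following minimal sketch shows how context monotonicity and concept inclusion jointly decide whether a lexical substitution preserves entailment. It illustrates the standard natural-logic rule only and is not the probing method of this study; the toy `HYPERNYMS` relation and the names `is_included` and `substitution_entails` are hypothetical, introduced purely for illustration.

```python
from enum import Enum


class Monotonicity(Enum):
    UP = "upward"      # e.g. the restrictor of "some": "Some [dogs] bark"
    DOWN = "downward"  # e.g. the restrictor of "no": "No [dogs] bark"


# Hypothetical toy concept-inclusion relation: hyponym -> direct hypernym.
HYPERNYMS = {"poodle": "dog", "dog": "animal"}


def is_included(narrow: str, broad: str) -> bool:
    """Return True if `narrow` is included in `broad` (reflexive, transitive)."""
    current = narrow
    while current is not None:
        if current == broad:
            return True
        current = HYPERNYMS.get(current)
    return False


def substitution_entails(context: Monotonicity, original: str, replacement: str) -> bool:
    """Decide whether replacing `original` with `replacement` yields an entailed sentence.

    Upward-monotone contexts license replacement by a more general concept;
    downward-monotone contexts license replacement by a more specific one.
    """
    if context is Monotonicity.UP:
        return is_included(original, replacement)
    return is_included(replacement, original)


# "Some dogs bark" entails "Some animals bark" (upward, generalize).
some_generalized = substitution_entails(Monotonicity.UP, "dog", "animal")
# "No dogs bark" entails "No poodles bark" (downward, specialize).
no_specialized = substitution_entails(Monotonicity.DOWN, "dog", "poodle")
# "No dogs bark" does not entail "No animals bark".
no_generalized = substitution_entails(Monotonicity.DOWN, "dog", "animal")
```

Under these assumptions, the first two substitutions are licensed and the third is not. The downward-monotone cases are the ones the abstract identifies as a stumbling block: the valid direction of substitution is reversed relative to the upward case, so a model has to represent the monotonicity of the context itself, not just the inclusion relation between the words.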
