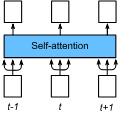

Transformer-based speech recognition models have achieved great success thanks to the self-attention (SA) mechanism, which uses every frame during feature extraction. In particular, SA heads in lower layers capture various phonetic characteristics through the query-key dot product, which is designed to compute pairwise relationships between frames. In this paper, we propose a variant of SA that extracts more representative phonetic features. The proposed phonetic self-attention (phSA) combines two types of phonetic attention: one similarity-based and the other content-based. In short, similarity-based attention captures the correlation between frames, whereas content-based attention considers each frame on its own, unaffected by other frames. We identify which parts of the original dot-product equation correspond to these two attention patterns and improve each part with simple modifications. Our experiments on phoneme classification and speech recognition show that replacing SA with phSA in the lower layers improves recognition performance without increasing latency or parameter count.
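To illustrate what "parts of the original dot-product equation" could mean, the NumPy sketch below expands a standard biased query-key dot product into its four additive terms. It is a reading of the abstract, not the paper's formulation. Treating the pairwise term as the similarity-based part and the key-only term as the content-based part is an assumption made here. The function names, shapes, and random inputs are hypothetical, and the usual scaling by the square root of the head dimension is omitted for brevity.

```python
import numpy as np

def softmax(z, axis=-1):
    """Numerically stable softmax along the given axis."""
    z = z - z.max(axis=axis, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)

def full_scores(x, w_q, b_q, w_k, b_k):
    """Standard attention logits: (x W_q + b_q)(x W_k + b_k)^T, shape (T, T)."""
    q = x @ w_q + b_q
    k = x @ w_k + b_k
    return q @ k.T

def decompose_scores(x, w_q, b_q, w_k, b_k):
    """Split the logits into four additive terms (illustrative labels only)."""
    xq = x @ w_q                      # (T, d_k), bias-free queries
    xk = x @ w_k                      # (T, d_k), bias-free keys
    pairwise = xq @ xk.T              # depends on both frame i and frame j
    key_only = xk @ b_q               # (T,), depends on key frame j only
    query_only = xq @ b_k             # (T,), depends on query frame i only
    constant = float(b_q @ b_k)       # same value for every (i, j)
    return pairwise, key_only, query_only, constant

# Hypothetical toy input: T = 6 frames, model dim 16, head dim 4.
rng = np.random.default_rng(0)
T, d_model, d_k = 6, 16, 4
x = rng.standard_normal((T, d_model))
w_q = rng.standard_normal((d_model, d_k))
w_k = rng.standard_normal((d_model, d_k))
b_q = rng.standard_normal(d_k)
b_k = rng.standard_normal(d_k)

pairwise, key_only, query_only, constant = decompose_scores(x, w_q, b_q, w_k, b_k)

# The four terms sum back to the original logits.
recombined = pairwise + key_only[None, :] + query_only[:, None] + constant

# Terms that are constant along each row (query-only and constant) cancel
# under the row-wise softmax, so only two terms shape the attention map.
reduced = pairwise + key_only[None, :]
```

In this sketch, `softmax(reduced)` matches `softmax(full_scores(...))`, because the query-only and constant terms shift every entry of a row equally. Only a term that depends on both frames and a term that depends on the attended frame alone remain, which is consistent with the two attention patterns described above.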
