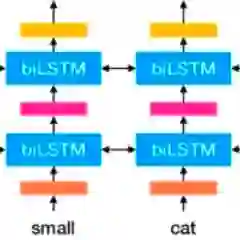

This paper presents Masked ELMo, a new RNN-based model for language model pre-training that evolved from the ELMo language model. Unlike ELMo, which uses only independent left-to-right and right-to-left contexts, Masked ELMo learns fully bidirectional word representations. To achieve this, we use the same masked language model objective as BERT. Additionally, thanks to optimizations of the LSTM neuron, the integration of mask accumulation, and bidirectional truncated backpropagation through time, we substantially increase the training speed of the model. Together, these improvements make it possible to pre-train a better language model than ELMo while keeping the computational cost low. We evaluate Masked ELMo by comparing it to ELMo under the same protocol on the GLUE benchmark, where our model significantly outperforms ELMo and is competitive with Transformer-based approaches.
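To make the masked language model objective concrete, the following is a minimal sketch of BERT-style input corruption. The 15% selection rate and the 80/10/10 split between `[MASK]`, a random token, and the unchanged token are the settings published for BERT; whether Masked ELMo uses exactly these rates is an assumption here. The function name `mask_tokens` and the toy vocabulary are hypothetical and chosen only for illustration.

```python
import random

MASK = "[MASK]"  # placeholder symbol, as used by BERT


def mask_tokens(tokens, vocab, mask_prob=0.15, rng=None):
    """Corrupt a token sequence for masked language modeling.

    Returns (corrupted, labels). labels[i] holds the original token at
    positions selected for prediction and None everywhere else.
    Rates follow BERT; treating them as Masked ELMo's is an assumption.
    """
    rng = rng or random.Random()
    corrupted = list(tokens)
    labels = [None] * len(tokens)
    for i, tok in enumerate(tokens):
        if rng.random() >= mask_prob:
            continue  # position not selected: no prediction target
        labels[i] = tok  # the model must recover the original token
        roll = rng.random()
        if roll < 0.8:
            corrupted[i] = MASK  # 80%: replace with the mask symbol
        elif roll < 0.9:
            corrupted[i] = rng.choice(vocab)  # 10%: random token
        # remaining 10%: leave the token unchanged
    return corrupted, labels


if __name__ == "__main__":
    sentence = "the model learns bidirectional word representations".split()
    toy_vocab = ["the", "model", "learns", "word", "context", "language"]
    corrupted, labels = mask_tokens(sentence, toy_vocab, rng=random.Random(0))
    print(corrupted)
    print(labels)
```

Because the loss is computed only at the selected positions, the prediction for each of them can draw on both left and right context at once, which is what distinguishes this objective from ELMo's two independent directional language models.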
