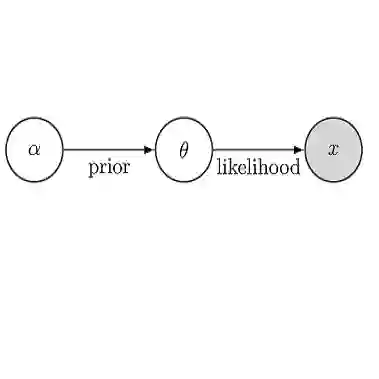

The prior distribution on parameters of a likelihood is the usual starting point for Bayesian uncertainty quantification. In this paper, we present a different perspective. Given a finite data sample $Y_{1:n}$ of size $n$ from an infinite population, we focus on the missing $Y_{n+1:\infty}$ as the source of statistical uncertainty, with the parameter of interest being known precisely given $Y_{1:\infty}$. We argue that the foundation of Bayesian inference is to assign a predictive distribution on $Y_{n+1:\infty}$ conditional on $Y_{1:n}$, which then induces a distribution on the parameter of interest. Demonstrating an application of martingales, Doob shows that choosing the Bayesian predictive distribution returns the conventional posterior as the distribution of the parameter. Taking this as our cue, we relax the predictive machine, avoiding the need for the predictive to be derived solely from the usual prior to posterior to predictive density formula. We introduce the martingale posterior distribution, which returns Bayesian uncertainty directly on any statistic of interest without the need for the likelihood and prior, and this distribution can be sampled through a computational scheme we name predictive resampling. To that end, we introduce new predictive methodologies for multivariate density estimation, regression and classification that build upon recent work on bivariate copulas.
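As a rough illustration of predictive resampling, the sketch below imputes the unseen observations forward from the data and recomputes a statistic on each completed sample. It assumes the simplest possible predictive, the empirical distribution updated as a Pólya urn, which yields Bayesian-bootstrap-style draws; it is not the copula-based predictive introduced in the paper. The names `predictive_resample`, `weighted_mean`, `n_forward` and `n_draws` are hypothetical, and the infinite future $Y_{n+1:\infty}$ is truncated at `n_forward` imputed points.

```python
import numpy as np

def predictive_resample(y, statistic, n_forward=500, n_draws=200, seed=0):
    """Sketch of predictive resampling with an empirical (Polya urn) predictive.

    Assumption: the one-step-ahead predictive is the empirical distribution of
    everything seen so far (observed plus imputed). The paper's own predictives
    are different; this is only the simplest instance of the scheme.
    """
    rng = np.random.default_rng(seed)
    y = np.asarray(y, dtype=float)
    n = y.size
    draws = np.empty(n_draws)
    for b in range(n_draws):
        # counts[i] = number of copies of y[i] in the urn; start from the data.
        counts = np.ones(n)
        for _ in range(n_forward):
            # Impute the next observation from the current predictive,
            # then update the predictive with the imputed value.
            i = rng.choice(n, p=counts / counts.sum())
            counts[i] += 1
        # Evaluate the statistic on the completed (truncated) population.
        weights = counts / counts.sum()
        draws[b] = statistic(y, weights)
    return draws

def weighted_mean(y, w):
    # Mean of the completed sample, expressed through atom weights.
    return float(np.sum(w * y))

if __name__ == "__main__":
    y_obs = [1.0, 2.0, 3.0, 4.0, 5.0]
    samples = predictive_resample(y_obs, weighted_mean)
    print(samples.mean(), samples.std())
```

Because every imputed value is one of the observed atoms under this predictive, tracking counts per atom is equivalent to storing the full imputed sequence. Larger `n_forward` gives a closer approximation to conditioning on the whole population, at proportional cost.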
