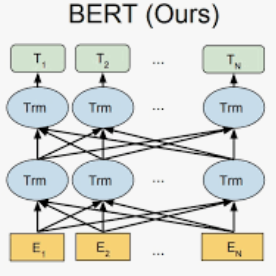

Code completion is one of the main features of modern Integrated Development Environments (IDEs). Its objective is to speed up code writing by predicting the next code token(s) the developer is likely to write. Research in this area has substantially bolstered the predictive performance of these techniques. However, support for developers is still limited to predicting the next few tokens to type. In this work, we take a step further in this direction by presenting a large-scale empirical study that explores the capabilities of state-of-the-art deep learning (DL) models in supporting code completion at different granularity levels: single tokens, one or multiple entire statements, and entire code blocks (e.g., the iterated block of a for loop). To this aim, we train and test several adapted variants of the recently proposed RoBERTa model, and evaluate their predictions from several perspectives, including: (i) metrics usually adopted when assessing DL generative models (i.e., BLEU score and Levenshtein distance); (ii) the percentage of perfect predictions (i.e., predicted code snippets that match those written by developers); and (iii) the "semantic" equivalence of the generated code compared to the code written by developers. The achieved results show that BERT models represent a viable solution for code completion, with perfect predictions ranging from ~7%, obtained when asking the model to guess entire blocks, up to ~58%, reached in the simpler scenario of a few tokens masked from the same code statement.
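To make two of the evaluation perspectives above concrete, the following is a minimal sketch of a token-level Levenshtein distance and a perfect-prediction percentage. It is illustrative only: the function names `levenshtein` and `perfect_prediction_rate`, the whitespace tokenization in the usage lines, and the unit edit costs are assumptions made here, not the tokenization or normalization used in the study.

```python
from typing import List, Sequence


def levenshtein(a: Sequence[str], b: Sequence[str]) -> int:
    """Edit distance between two token sequences.

    Insertions, deletions, and substitutions each cost 1 (an assumption;
    the study may normalize or weight edits differently).
    """
    prev: List[int] = list(range(len(b) + 1))  # distances from the empty prefix of a
    for i, tok_a in enumerate(a, start=1):
        curr = [i]  # turning a[:i] into the empty sequence takes i deletions
        for j, tok_b in enumerate(b, start=1):
            cost = 0 if tok_a == tok_b else 1
            curr.append(min(prev[j] + 1,          # delete tok_a
                            curr[j - 1] + 1,      # insert tok_b
                            prev[j - 1] + cost))  # match or substitute
        prev = curr
    return prev[-1]


def perfect_prediction_rate(predictions: Sequence[Sequence[str]],
                            references: Sequence[Sequence[str]]) -> float:
    """Percentage of predictions identical, token by token, to their reference."""
    if len(predictions) != len(references):
        raise ValueError("predictions and references must have the same length")
    if not references:
        return 0.0
    hits = sum(1 for p, r in zip(predictions, references) if list(p) == list(r))
    return 100.0 * hits / len(references)


# Hypothetical data: whitespace tokenization is a simplification.
refs = ["i < n".split(), "return x ;".split()]
preds = ["i <= n".split(), "return x ;".split()]
distances = [levenshtein(p, r) for p, r in zip(preds, refs)]
rate = perfect_prediction_rate(preds, refs)
```

In this toy setting the first prediction differs from its reference by one substituted token and the second matches exactly, so only the second counts as a perfect prediction.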
