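[Paper Recommendations] Six Recent Papers on Person Re-Identification: View-Specific Networks, Multi-Target Tracking, Dual Attention Matching Network, Joint Attribute-Identity Learning, Transfer Learning, Multi-Channel Pyramid Matching

[Editor's note] The Zhuanzhi content team has collected six recent papers on person re-identification (re-id) and presents them below.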

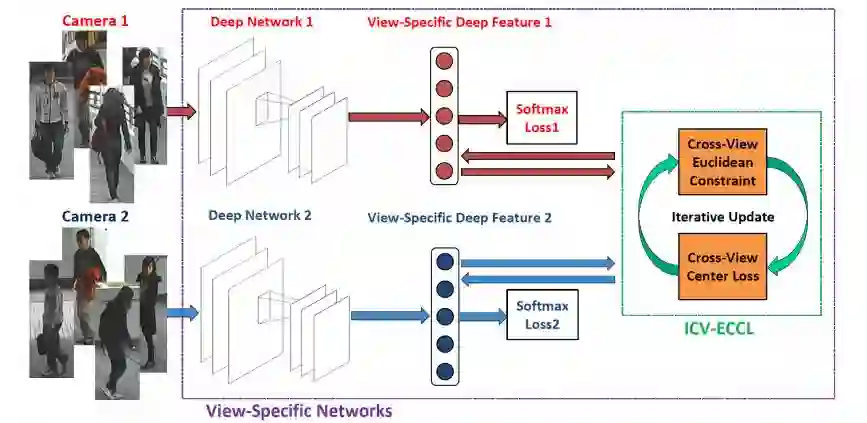

1. Learning View-Specific Deep Networks for Person Re-Identification

Authors: Zhanxiang Feng, Jianhuang Lai, Xiaohua Xie

Abstract: In recent years, a growing body of research has focused on the problem of person re-identification (re-id). The re-id techniques attempt to match the images of pedestrians from disjoint non-overlapping camera views. A major challenge of re-id is the serious intra-class variations caused by changing viewpoints. To overcome this challenge, we propose a deep neural network-based framework which utilizes the view information in the feature extraction stage. The proposed framework learns a view-specific network for each camera view with a cross-view Euclidean constraint (CV-EC) and a cross-view center loss (CV-CL). We utilize CV-EC to decrease the margin of the features between diverse views and extend the center loss metric to a view-specific version to better adapt to the re-id problem. Moreover, we propose an iterative algorithm to optimize the parameters of the view-specific networks from coarse to fine. The experiments demonstrate that our approach significantly improves the performance of the existing deep networks and outperforms the state-of-the-art methods on the VIPeR, CUHK01, CUHK03, SYSU-mReId, and Market-1501 benchmarks.
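The abstract names two loss terms but does not give their formulas. As a rough illustration only, the sketch below assumes that the cross-view Euclidean constraint is a mean squared distance between features of the same person seen from two views, and it uses the standard (not view-specific) center loss. The function names and toy data are hypothetical and are not taken from the paper.

```python
def squared_distance(u, v):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((a - b) ** 2 for a, b in zip(u, v))


def cross_view_euclidean(feats_view_a, feats_view_b):
    """Mean squared distance between paired features of the same people
    seen from two views (an assumed form of a cross-view constraint)."""
    pairs = list(zip(feats_view_a, feats_view_b))
    return sum(squared_distance(a, b) for a, b in pairs) / len(pairs)


def center_loss(features, labels, centers):
    """Standard center loss: half the mean squared distance of each
    feature to the center of its identity class."""
    total = sum(squared_distance(f, centers[y]) for f, y in zip(features, labels))
    return 0.5 * total / len(features)


# Toy 2-D features for two identities (0 and 1) under two camera views.
view_a = [[1.0, 0.0], [0.0, 1.0]]
view_b = [[1.0, 1.0], [0.0, 1.0]]
labels = [0, 1]
centers = {0: [1.0, 0.5], 1: [0.0, 1.0]}  # hypothetical class centers

ec = cross_view_euclidean(view_a, view_b)
cl = center_loss(view_a + view_b, labels + labels, centers)
```

Minimizing the first term pulls the two views of a person together, and minimizing the second tightens each identity cluster. How the paper makes the center loss view-specific is not stated in the abstract, so that part is left out here.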

Source: arXiv, March 30, 2018

URL:

http://www.zhuanzhi.ai/document/daa50570436444908cc84b6e4ac4b373

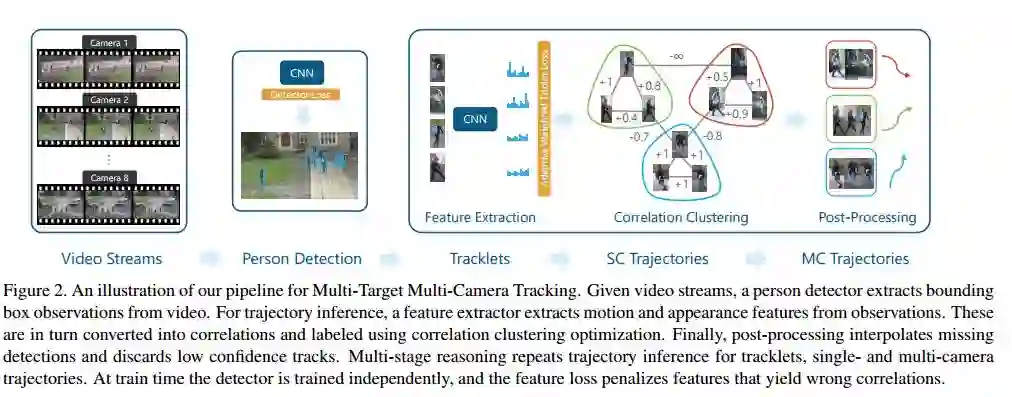

2. Features for Multi-Target Multi-Camera Tracking and Re-Identification

Authors: Ergys Ristani, Carlo Tomasi

Affiliation: Duke University

Abstract: Multi-Target Multi-Camera Tracking (MTMCT) tracks many people through video taken from several cameras. Person Re-Identification (Re-ID) retrieves from a gallery images of people similar to a person query image. We learn good features for both MTMCT and Re-ID with a convolutional neural network. Our contributions include an adaptive weighted triplet loss for training and a new technique for hard-identity mining. Our method outperforms the state of the art both on the DukeMTMC benchmarks for tracking, and on the Market-1501 and DukeMTMC-ReID benchmarks for Re-ID. We examine the correlation between good Re-ID and good MTMCT scores, and perform ablation studies to elucidate the contributions of the main components of our system. Code is available.
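The abstract mentions an adaptive weighted triplet loss without spelling it out. The sketch below shows one plausible reading, under the assumption that positives are weighted by a softmax over their distances to the anchor and negatives by a softmin, so that hard samples dominate. The weighting scheme, the margin value, and the function names are assumptions for illustration, not the authors' released code.

```python
import math


def softmax(values):
    """Numerically stable softmax over a list of floats."""
    m = max(values)
    exps = [math.exp(v - m) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def weighted_triplet_loss(pos_dists, neg_dists, margin=0.5):
    """Hinge loss on weighted average distances for one anchor.

    Assumed weighting: far positives and near negatives (the hard
    samples) receive larger weights via softmax and softmin.
    """
    w_pos = softmax(pos_dists)
    w_neg = softmax([-d for d in neg_dists])
    avg_pos = sum(w * d for w, d in zip(w_pos, pos_dists))
    avg_neg = sum(w * d for w, d in zip(w_neg, neg_dists))
    return max(0.0, margin + avg_pos - avg_neg)


# One anchor with a single positive and a single negative distance.
easy = weighted_triplet_loss([1.0], [2.0])  # negative already far enough
hard = weighted_triplet_loss([2.0], [1.0])  # negative closer than positive
```

With uniform weights this reduces to a batch-average triplet loss, and as the weights concentrate on the largest positive and smallest negative distance it approaches hard-sample mining.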

Source: arXiv, March 29, 2018

URL:

http://www.zhuanzhi.ai/document/6e80d20c9524dd130519189922488a10

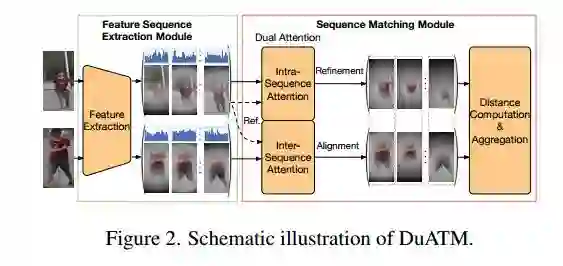

3. Dual Attention Matching Network for Context-Aware Feature Sequence based Person Re-Identification

Authors: Jianlou Si, Honggang Zhang, Chun-Guang Li, Jason Kuen, Xiangfei Kong, Alex C. Kot, Gang Wang

Affiliation: Beijing University of Posts and Telecommunications, Beijing

Abstract: Typical person re-identification (ReID) methods usually describe each pedestrian with a single feature vector and match them in a task-specific metric space. However, the methods based on a single feature vector are not sufficient to overcome visual ambiguity, which frequently occurs in real scenarios. In this paper, we propose a novel end-to-end trainable framework, called Dual ATtention Matching network (DuATM), to learn context-aware feature sequences and perform attentive sequence comparison simultaneously. The core component of our DuATM framework is a dual attention mechanism, in which both intra-sequence and inter-sequence attention strategies are used for feature refinement and feature-pair alignment, respectively. Thus, detailed visual cues contained in the intermediate feature sequences can be automatically exploited and properly compared. We train the proposed DuATM network as a siamese network via a triplet loss assisted with a de-correlation loss and a cross-entropy loss. We conduct extensive experiments on both image and video based ReID benchmark datasets. Experimental results demonstrate the significant advantages of our approach compared to the state-of-the-art methods.
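To make the idea of inter-sequence attention for feature-pair alignment more concrete, here is a minimal sketch using generic dot-product attention. It is not the DuATM architecture: the scoring function, the absence of learned parameters, and the names `attend` and `align` are all simplifying assumptions.

```python
import math


def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def softmax(values):
    """Numerically stable softmax over a list of floats."""
    m = max(values)
    exps = [math.exp(v - m) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def attend(query, sequence):
    """Attention-weighted average of `sequence` for one query vector."""
    weights = softmax([dot(query, item) for item in sequence])
    dim = len(sequence[0])
    return [sum(w * item[k] for w, item in zip(weights, sequence))
            for k in range(dim)]


def align(seq_a, seq_b):
    """Pair every feature in seq_a with its soft-aligned counterpart in seq_b."""
    return [(f, attend(f, seq_b)) for f in seq_a]


# Toy feature sequences for two images.
seq_a = [[10.0, 0.0]]
seq_b = [[1.0, 0.0], [0.0, 1.0]]
pairs = align(seq_a, seq_b)
```

Calling `align(seq, seq)` on a single sequence gives a self-attention style refinement, loosely analogous to the intra-sequence step the abstract describes. The aligned pairs could then be compared with any distance function.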

Source: arXiv, March 27, 2018

URL:

http://www.zhuanzhi.ai/document/5a1030689db1d3a1e07a6baae2ec604a

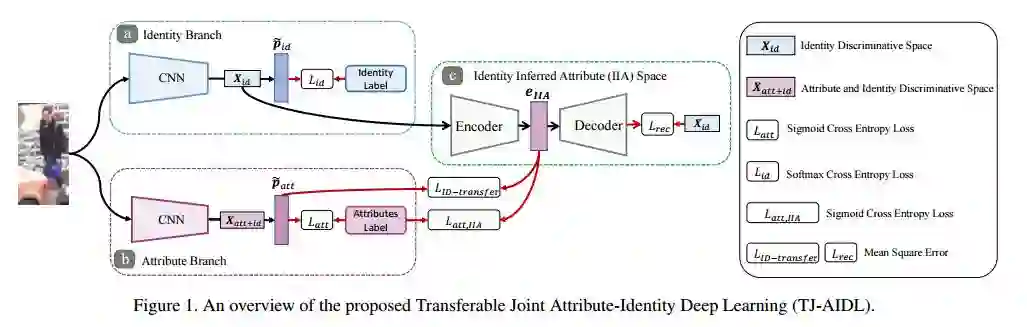

4. Transferable Joint Attribute-Identity Deep Learning for Unsupervised Person Re-Identification

Authors: Jingya Wang, Xiatian Zhu, Shaogang Gong, Wei Li

Affiliation: Queen Mary University of London

Abstract: Most existing person re-identification (re-id) methods require supervised model learning from a separate large set of pairwise labelled training data for every single camera pair. This significantly limits their scalability and usability in real-world large scale deployments with the need for performing re-id across many camera views. To address this scalability problem, we develop a novel deep learning method for transferring the labelled information of an existing dataset to a new unseen (unlabelled) target domain for person re-id without any supervised learning in the target domain. Specifically, we introduce a Transferable Joint Attribute-Identity Deep Learning (TJ-AIDL) for simultaneously learning an attribute-semantic and identity-discriminative feature representation space transferable to any new (unseen) target domain for re-id tasks without the need for collecting new labelled training data from the target domain (i.e. unsupervised learning in the target domain). Extensive comparative evaluations validate the superiority of this new TJ-AIDL model for unsupervised person re-id over a wide range of state-of-the-art methods on four challenging benchmarks including VIPeR, PRID, Market-1501, and DukeMTMC-ReID.

Source: arXiv, March 27, 2018

URL:

http://www.zhuanzhi.ai/document/65b5a31db67109a0eb6f215e977beafa

5. Unsupervised Cross-dataset Person Re-identification by Transfer Learning of Spatial-Temporal Patterns

Authors: Jianming Lv, Weihang Chen, Qing Li, Can Yang

Affiliations: South China University of Technology, City University of Hong Kong

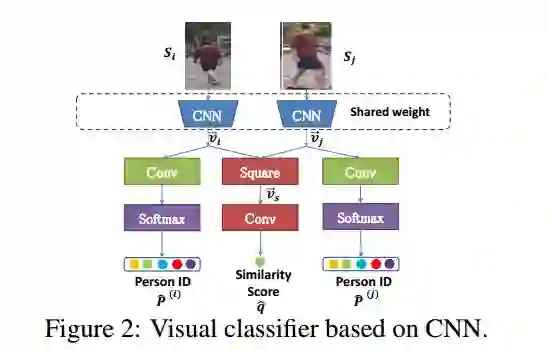

Abstract: Most of the proposed person re-identification algorithms conduct supervised training and testing on single labeled datasets with small size, so directly deploying these trained models to a large-scale real-world camera network may lead to poor performance due to underfitting. It is challenging to incrementally optimize the models by using the abundant unlabeled data collected from the target domain. To address this challenge, we propose an unsupervised incremental learning algorithm, TFusion, which is aided by the transfer learning of the pedestrians' spatio-temporal patterns in the target domain. Specifically, the algorithm firstly transfers the visual classifier trained from a small labeled source dataset to the unlabeled target dataset so as to learn the pedestrians' spatial-temporal patterns. Secondly, a Bayesian fusion model is proposed to combine the learned spatio-temporal patterns with visual features to achieve a significantly improved classifier. Finally, we propose a learning-to-rank based mutual promotion procedure to incrementally optimize the classifiers based on the unlabeled data in the target domain. Comprehensive experiments based on multiple real surveillance datasets are conducted, and the results show that our algorithm gains significant improvement compared with the state-of-the-art cross-dataset unsupervised person re-identification algorithms.
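The Bayesian fusion step in the abstract can be illustrated with a textbook Bayes update. The sketch below assumes that the visual evidence and the spatio-temporal evidence are conditionally independent given whether two images show the same person. The function `fuse` and its parameters are hypothetical, and the exact TFusion model may differ.

```python
def fuse(p_visual, st_same, st_diff):
    """Posterior probability that two images show the same person.

    p_visual: match probability from the visual classifier alone.
    st_same:  likelihood of the observed camera pair and time gap
              when both images show the same person.
    st_diff:  likelihood of the same observation for different people.
    Assumes the two kinds of evidence are conditionally independent
    given the match label, and that at least one product is non-zero.
    """
    match = p_visual * st_same
    non_match = (1.0 - p_visual) * st_diff
    return match / (match + non_match)


# An ambiguous visual score is sharpened by a plausible transit time.
boosted = fuse(0.5, 0.8, 0.2)
# Uninformative spatio-temporal evidence leaves the score unchanged.
unchanged = fuse(0.5, 0.3, 0.3)
```

In the unsupervised setting described above, the likelihoods would have to be estimated from matches proposed by the transferred visual classifier, since no identity labels exist in the target domain.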

Source: arXiv, March 20, 2018

URL:

http://www.zhuanzhi.ai/document/0522841c63093f56ebca3f6847d4368b

6. Multi-Channel Pyramid Person Matching Network for Person Re-Identification

Authors: Chaojie Mao, Yingming Li, Yaqing Zhang, Zhongfei Zhang, Xi Li

Affiliation: Zhejiang University

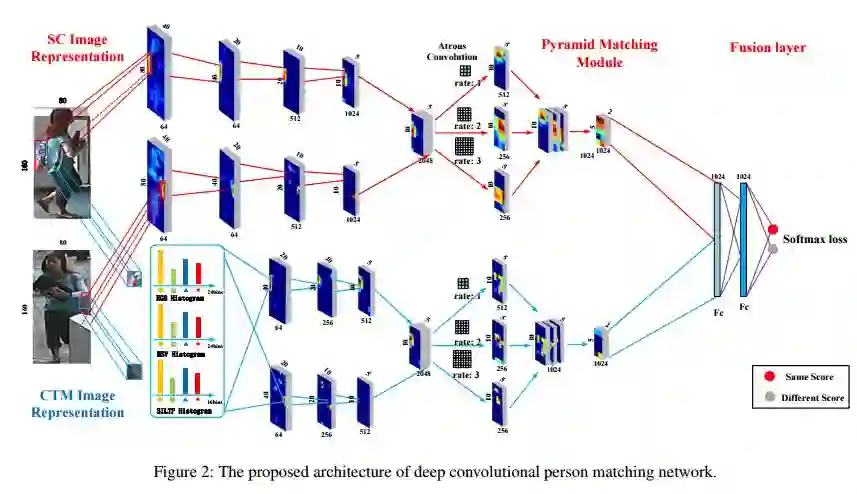

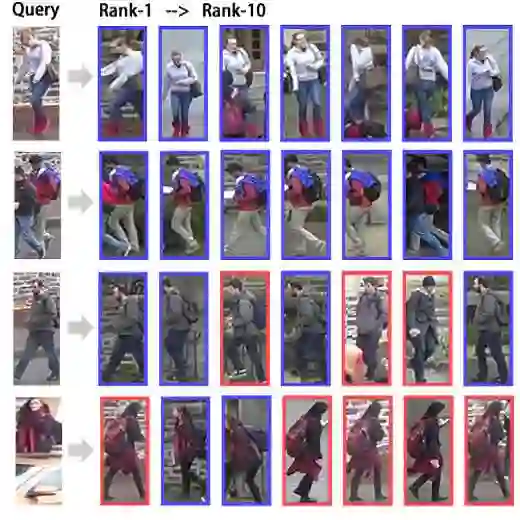

Abstract: In this work, we present a Multi-Channel deep convolutional Pyramid Person Matching Network (MC-PPMN) based on the combination of the semantic-components and the color-texture distributions to address the problem of person re-identification. In particular, we learn separate deep representations for semantic-components and color-texture distributions from two person images and then employ a pyramid person matching network (PPMN) to obtain correspondence representations. These correspondence representations are fused to perform the re-identification task. Further, the proposed framework is optimized via a unified end-to-end deep learning scheme. Extensive experiments on several benchmark datasets demonstrate the effectiveness of our approach against the state-of-the-art literature, especially on the rank-1 recognition rate.
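The rank-1 recognition rate mentioned at the end of the abstract is the fraction of query images whose closest gallery image has the same identity. A minimal sketch follows, assuming Euclidean distance on feature vectors and ignoring benchmark-specific rules such as excluding gallery images from the query's own camera. The toy features and identity labels are made up.

```python
def squared_distance(u, v):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((a - b) ** 2 for a, b in zip(u, v))


def rank1_rate(query_feats, query_ids, gallery_feats, gallery_ids):
    """Fraction of queries whose nearest gallery feature has the same identity."""
    hits = 0
    for feat, pid in zip(query_feats, query_ids):
        nearest = min(range(len(gallery_feats)),
                      key=lambda i: squared_distance(feat, gallery_feats[i]))
        hits += gallery_ids[nearest] == pid
    return hits / len(query_feats)


# Toy gallery with three identities and one query per identity.
gallery_feats = [[0.0, 0.0], [1.0, 1.0], [2.0, 0.0]]
gallery_ids = ["a", "b", "c"]
query_feats = [[0.1, 0.0], [0.9, 1.2], [0.4, 0.1]]
query_ids = ["a", "b", "c"]

rate = rank1_rate(query_feats, query_ids, gallery_feats, gallery_ids)
```

In this toy data the third query lies closer to identity "a" than to its true identity "c", so two of the three queries are matched correctly at rank 1.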

Source: arXiv, March 7, 2018

URL:

http://www.zhuanzhi.ai/document/2a28452f9dad43cbba6c3ef5dc8e6729
