[Deep Dive] Multi-Caption Text-to-Face Generation

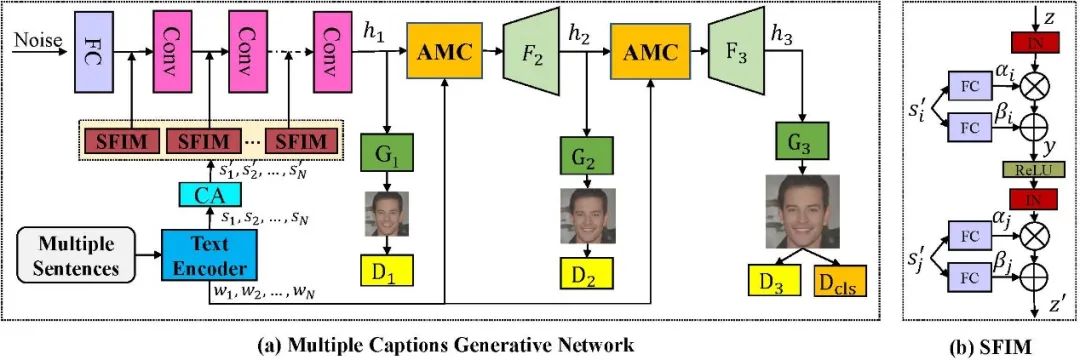

Figure 2. Overview of the model framework

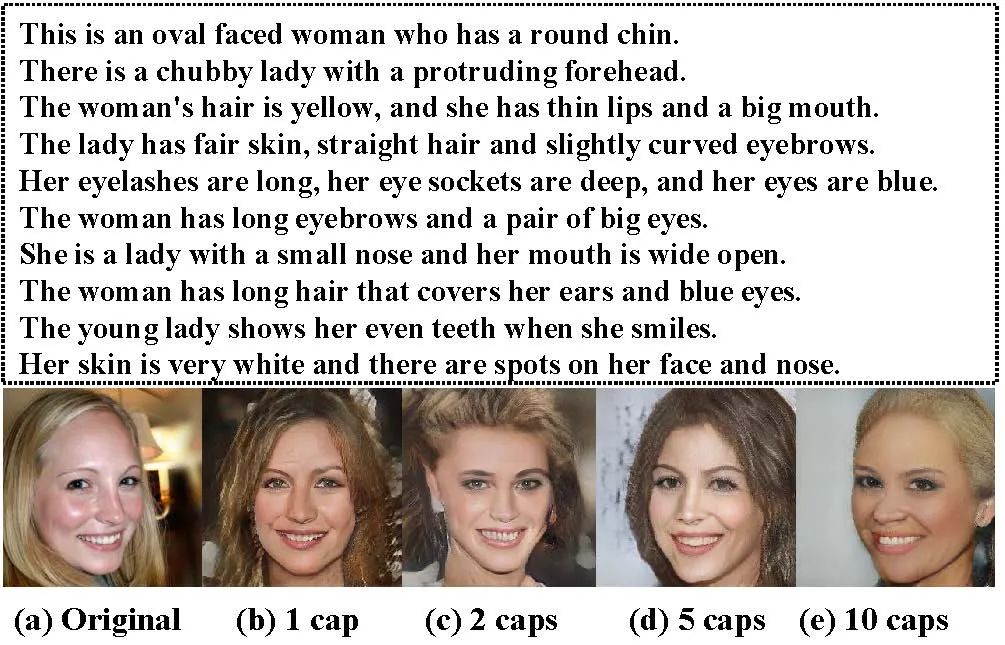

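In addition, the team built, for the first time, a large-scale manually annotated dataset. They selected 15,010 images from the CelebAMask-HQ dataset, and ten annotators manually wrote ten text descriptions for each image. The ten descriptions are ordered from coarse to fine, and each describes a different part of the face.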
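To make the dataset layout concrete, here is a minimal Python sketch of how one annotated sample could be represented. Only the image count (15,010) and the ten coarse-to-fine captions per image come from the article. The class name `FaceSample`, its fields, and the helper `first_k` are hypothetical and are not the dataset's actual file format or API.

```python
from dataclasses import dataclass
from typing import List

NUM_IMAGES = 15010        # images selected from CelebAMask-HQ (from the article)
CAPTIONS_PER_IMAGE = 10   # ten descriptions per image (from the article)


@dataclass
class FaceSample:
    """Hypothetical record: one image and its captions, ordered coarse to fine."""
    image_path: str
    captions: List[str]

    def __post_init__(self) -> None:
        # Every image is expected to carry exactly ten descriptions.
        if len(self.captions) != CAPTIONS_PER_IMAGE:
            raise ValueError(
                f"expected {CAPTIONS_PER_IMAGE} captions, got {len(self.captions)}"
            )

    def first_k(self, k: int) -> List[str]:
        """Return the k coarsest captions (assumed use: conditioning on fewer texts)."""
        if not 1 <= k <= CAPTIONS_PER_IMAGE:
            raise ValueError(f"k must be between 1 and {CAPTIONS_PER_IMAGE}")
        return self.captions[:k]


def total_captions() -> int:
    """Total number of captions implied by the figures quoted in the article."""
    return NUM_IMAGES * CAPTIONS_PER_IMAGE
```

Under these figures the dataset would hold 15,010 × 10 = 150,100 captions in total. Keeping the captions in a single ordered list preserves the coarse-to-fine order the annotators followed.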

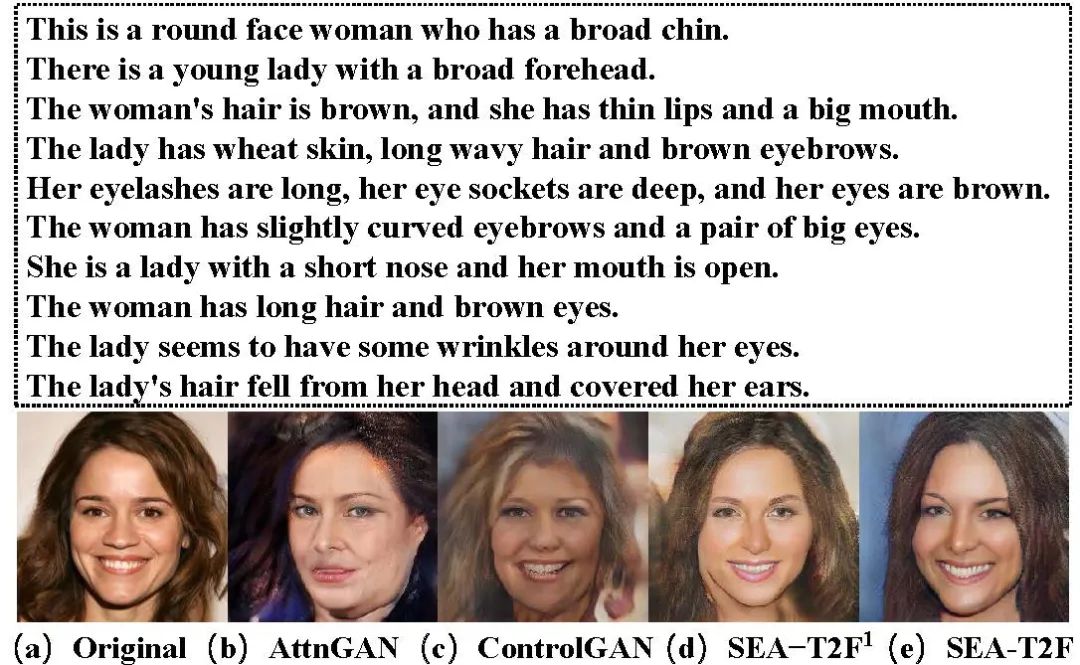

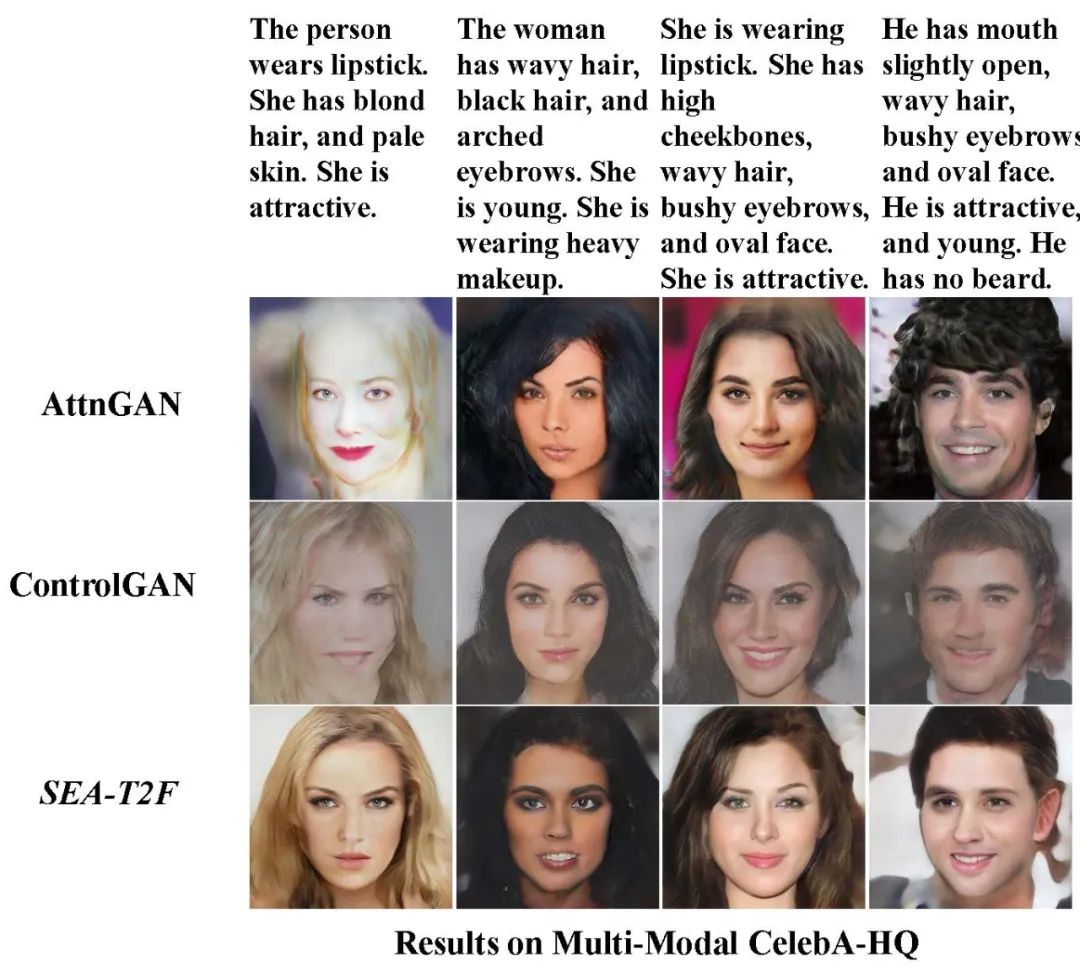

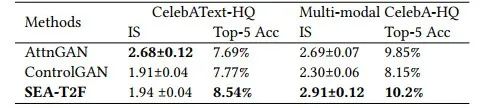

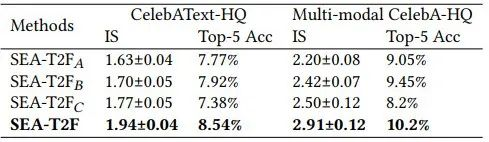

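The team evaluated the proposed method both qualitatively and quantitatively [5,6]. The experimental results show that it not only generates high-quality images but also produces images that match the text descriptions more closely.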

Figure 3. Comparison of results from different methods

1. Osaid Rehman Nasir, Shailesh Kumar Jha, Manraj Singh Grover, Yi Yu, Ajit Kumar, and Rajiv Ratn Shah. 2019. Text2FaceGAN: face generation from fine grained textual descriptions. In IEEE International Conference on Multimedia Big Data (BigMM). 58–67.

2. Xiang Chen, Lingbo Qing, Xiaohai He, Xiaodong Luo, and Yining Xu. 2019. FTGAN: A fully-trained generative adversarial networks for text to face generation. arXiv preprint arXiv:1904.05729 (2019).

3. David Stap, Maurits Bleeker, Sarah Ibrahimi, and Maartje ter Hoeve. 2020. Conditional image generation and manipulation for user-specified content. arXiv preprint arXiv:2005.04909 (2020).

4. Weihao Xia, Yujiu Yang, Jing-Hao Xue, and Baoyuan Wu. 2021. TediGAN: Text-guided diverse image generation and manipulation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR). 2256–2265.

5. Tao Xu, Pengchuan Zhang, Qiuyuan Huang, Han Zhang, Zhe Gan, Xiaolei Huang, and Xiaodong He. 2018. AttnGAN: Fine-grained text to image generation with attentional generative adversarial networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR). 1316–1324.

6. Bowen Li, Xiaojuan Qi, Thomas Lukasiewicz, and Philip Torr. 2019. Controllable text-to-image generation. In Advances in Neural Information Processing Systems (NeurIPS). 2065–2075.

Zhenan Sun (孙哲南)

Qi Li (李琦)

Jian Zhao (赵健)

Jianxin Sun (孙建新)

Original title: Multi-caption Text-to-Face Synthesis: Dataset and Algorithm

Original link: https://dl.acm.org/doi/10.1145/3474085.3475391