In 2020, I Finally Decided to Get Started with GCN


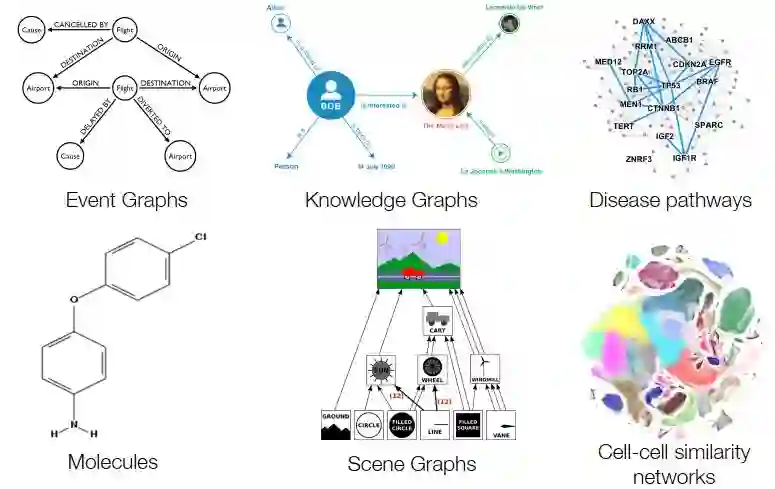

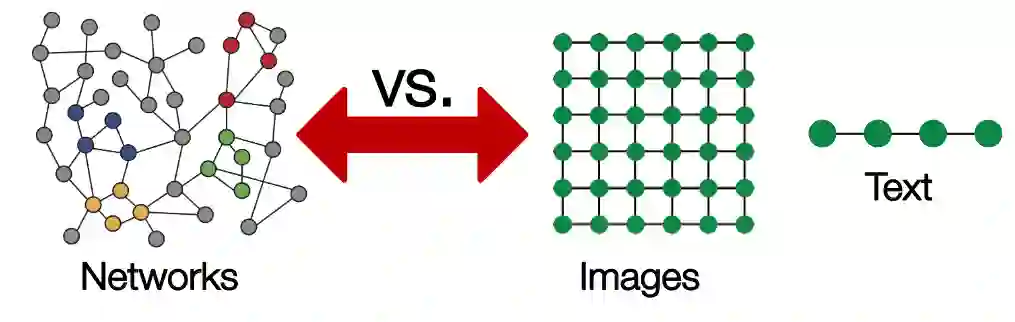

Graph Concepts

-

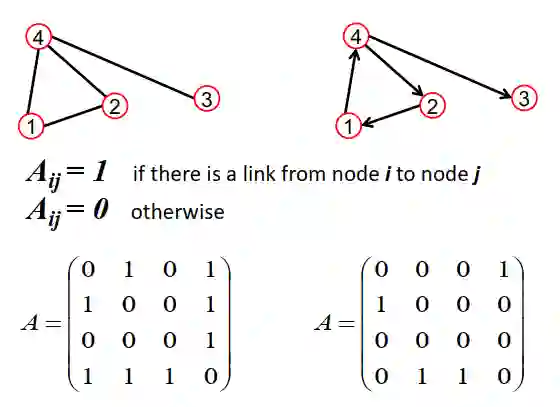

邻接矩阵 :adjacency matrix,用来表示节点间的连接关系,这里我们假定是0-1矩阵; -

度矩阵 :degree matrix,每个节点的度指的是其连接的节点数,这是一个对角矩阵,其中对角线元素 ; -

特征矩阵 :用于表示节点的特征, ,这里F是特征的维度;
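To make the three definitions concrete, here is a minimal NumPy sketch on a small made-up graph. The edge list, the sizes and the random features are assumptions for illustration only:

```python
import numpy as np

# Toy undirected graph (hypothetical): 4 nodes, edges 0-1, 0-2, 1-2, 2-3
edges = [(0, 1), (0, 2), (1, 2), (2, 3)]
N, F = 4, 3  # number of nodes, feature dimension

# Adjacency matrix A: 0-1 and symmetric, since the graph is undirected
A = np.zeros((N, N), dtype=np.float32)
for i, j in edges:
    A[i, j] = A[j, i] = 1.0

# Degree matrix D: diagonal, with D_ii = sum_j A_ij
D = np.diag(A.sum(axis=1))

# Feature matrix X (N x F); random values stand in for real node features
X = np.random.default_rng(0).normal(size=(N, F)).astype(np.float32)

print(np.diag(D))  # degrees of nodes 0..3: [2. 2. 3. 1.]
```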

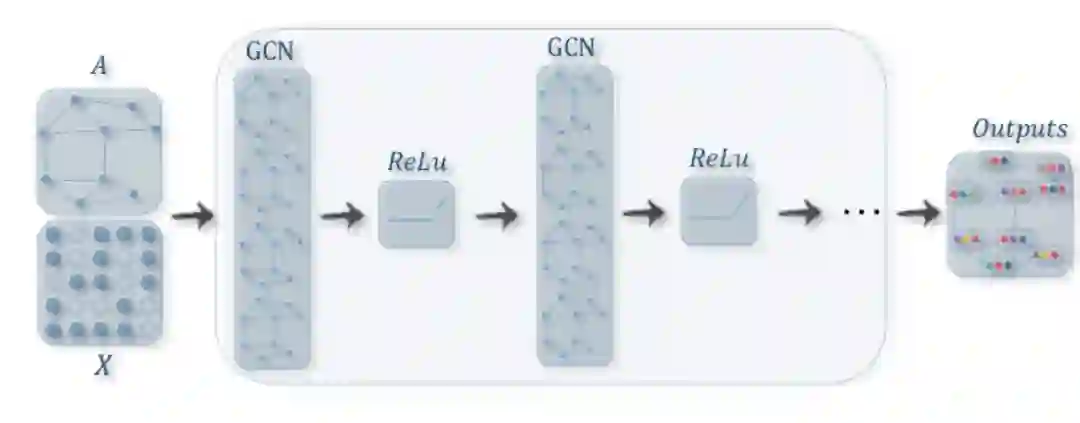

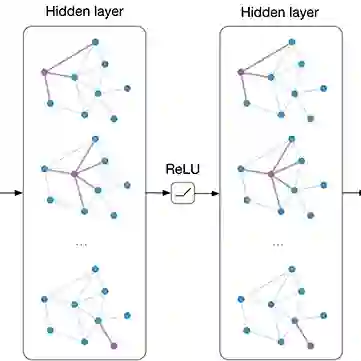

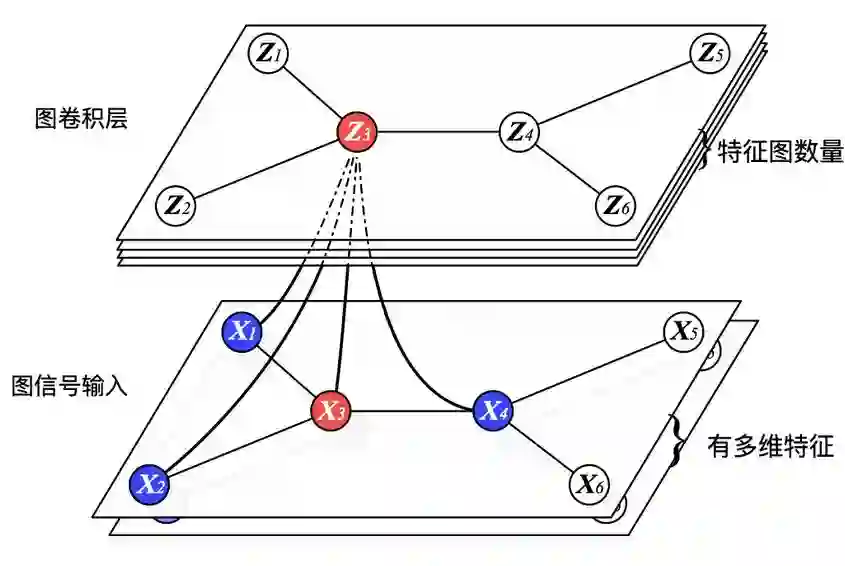

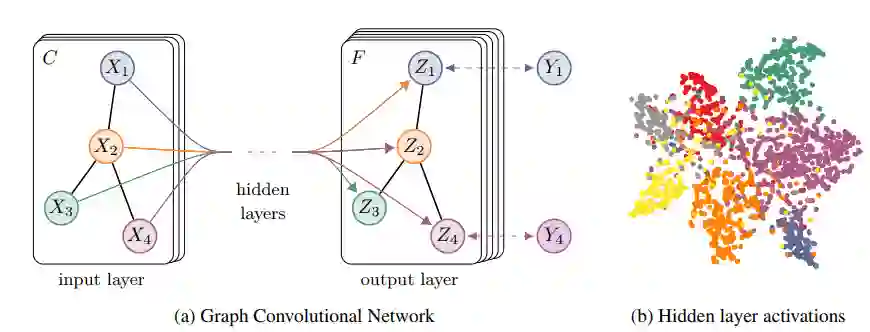

Learning New Features

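The most important thing in deep learning is learning features: as the network gets deeper, the features become more and more abstract, and they are then used for the final task. The same holds for graph tasks. We want a deep model to start from the graph's initial features $X$ and learn more abstract ones. For example, once the model has learned a high-level feature for a node, one that fuses the features of other nodes according to the graph structure, we can use that feature for node classification or attribute prediction. A graph network, then, is about learning new features. Written as a formula, layer $k$ updates the feature of node $i$ as

$$x_i^{(k+1)} = \sigma\Big(\sum_{j \in \mathcal{N}(i)} W^{(k)} x_j^{(k)}\Big)$$

where $\mathcal{N}(i)$ is the set of neighbors of node $i$ and $x_i^{(0)}$ is row $i$ of $X$. The update consists of three steps: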

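- Transform: apply a learnable transformation to the current node features; here it is a multiplication by a weight matrix ($Wx$);
- Aggregate: aggregate the features of the neighboring nodes to obtain the node's new feature; here it is a simple sum;
- Activate: apply an activation function to add nonlinearity.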
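The three steps can be checked numerically. The sketch below runs one layer node by node (transform, sum over neighbors, activate) and compares it with the equivalent matrix form $\sigma(A X W^\top)$. The graph, the random weights and the choice of ReLU as $\sigma$ are assumptions made for the example:

```python
import numpy as np

rng = np.random.default_rng(0)

# Toy undirected graph (hypothetical): edges 0-1, 0-2, 1-2, 2-3
A = np.array([[0, 1, 1, 0],
              [1, 0, 1, 0],
              [1, 1, 0, 1],
              [0, 0, 1, 0]], dtype=np.float64)
X = rng.normal(size=(4, 3))  # row j is x_j^{(k)}
W = rng.normal(size=(2, 3))  # W^{(k)}: maps 3-dim features to 2-dim features


def relu(z):
    # assumed activation sigma
    return np.maximum(z, 0.0)


# Node by node: transform (W x_j), aggregate (sum over neighbors j), activate
H_loop = np.zeros((4, 2))
for i in range(4):
    neighbors = np.nonzero(A[i])[0]
    agg = sum(W @ X[j] for j in neighbors)
    H_loop[i] = relu(agg)

# The same layer for all nodes at once: sigma(A X W^T)
H_mat = relu(A @ X @ W.T)

print(np.allclose(H_loop, H_mat))  # True
```

The matrix form is what the PyTorch layer computes, except that it stores the weight as an `(in_features, out_features)` matrix and multiplies on the right (`X @ W`). Note that this plain sum ignores each node's own feature and is not normalized; adding self-loops ($A + I$) and normalizing by degree, as `normalize_adj` does, addresses both.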

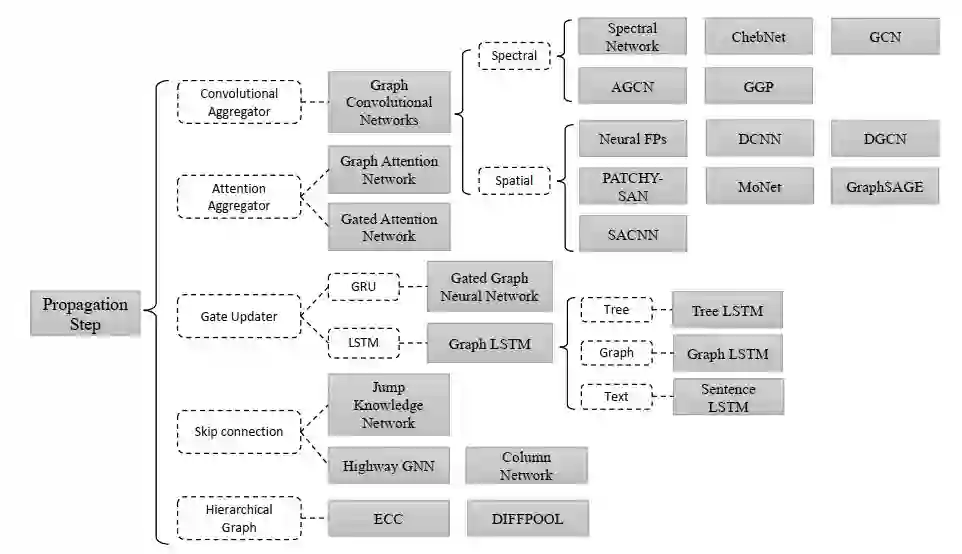

Graph Convolution

- Graph Neural Networks: A Review of Methods and Applications: https://arxiv.org/pdf/1812.08434.pdf
- A Comprehensive Survey on Graph Neural Networks: https://arxiv.org/pdf/1901.00596.pdf
- Deep Learning on Graphs: A Survey: https://arxiv.org/pdf/1812.04202.pdf

PyTorch Implementation of GCN

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class GraphConvolution(nn.Module):
    """GCN layer"""

    def __init__(self, in_features, out_features, bias=True):
        super(GraphConvolution, self).__init__()
        self.in_features = in_features
        self.out_features = out_features
        self.weight = nn.Parameter(torch.Tensor(in_features, out_features))
        if bias:
            self.bias = nn.Parameter(torch.Tensor(out_features))
        else:
            self.register_parameter('bias', None)
        self.reset_parameters()

    def reset_parameters(self):
        nn.init.kaiming_uniform_(self.weight)
        if self.bias is not None:
            nn.init.zeros_(self.bias)

    def forward(self, input, adj):
        # transform: X W
        support = torch.mm(input, self.weight)
        # aggregate: A (X W); adj is expected to be a (normalized) torch sparse adjacency matrix
        output = torch.spmm(adj, support)
        if self.bias is not None:
            return output + self.bias
        else:
            return output

    def extra_repr(self):
        return 'in_features={}, out_features={}, bias={}'.format(
            self.in_features, self.out_features, self.bias is not None
        )


class GCN(nn.Module):
    """a simple two layer GCN"""

    def __init__(self, nfeat, nhid, nclass):
        super(GCN, self).__init__()
        self.gc1 = GraphConvolution(nfeat, nhid)
        self.gc2 = GraphConvolution(nhid, nclass)

    def forward(self, input, adj):
        # activate the hidden layer with ReLU; the output layer returns raw logits
        h1 = F.relu(self.gc1(input, adj))
        logits = self.gc2(h1, adj)
        return logits
```
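`GraphConvolution.forward` aggregates with `torch.spmm(adj, support)`, so `adj` has to be a torch sparse tensor, while the `normalize_adj` helper further down returns a SciPy sparse matrix. A minimal conversion sketch follows; `scipy_to_torch_sparse` is a hypothetical name (the pygcn repository ships a similar helper, `sparse_mx_to_torch_sparse_tensor` in `utils.py`), and the 3-node graph and `support` values are made up:

```python
import numpy as np
import scipy.sparse as sp
import torch


def scipy_to_torch_sparse(mx):
    """Convert a SciPy sparse matrix to a torch sparse COO tensor (hypothetical helper)."""
    mx = mx.tocoo().astype(np.float32)
    indices = torch.from_numpy(np.vstack((mx.row, mx.col)).astype(np.int64))
    values = torch.from_numpy(mx.data)
    return torch.sparse_coo_tensor(indices, values, torch.Size(mx.shape))


# Toy path graph 0 - 1 - 2 (hypothetical data)
adj_sp = sp.coo_matrix(np.array([[0, 1, 0],
                                 [1, 0, 1],
                                 [0, 1, 0]], dtype=np.float32))
adj_t = scipy_to_torch_sparse(adj_sp)

# Stands in for support = X W inside the layer
support = torch.arange(6, dtype=torch.float32).reshape(3, 2)

# Same aggregation call as GraphConvolution.forward: each row sums its neighbors' rows
out = torch.spmm(adj_t, support)
print(out)  # rows: [2, 3], [4, 6], [2, 3]
```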

Semi-Supervised Classification Example

The example uses the Cora citation dataset, in which each paper belongs to one of seven classes: Case_Based, Genetic_Algorithms, Neural_Networks, Probabilistic_Methods, Reinforcement_Learning, Rule_Learning, Theory.

```python
# load_data: see https://github.com/tkipf/pygcn/blob/master/pygcn/utils.py
adj, features, labels, idx_train, idx_val, idx_test = load_data(path="./data/cora/")
```

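Two points are worth noting. First, citations between papers naturally form a directed graph, but before feeding it to the network we convert it into an undirected graph, that is, we make every citation bidirectional. I found that this has a large effect on the training results: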

```python
# build symmetric adjacency matrix
adj = adj + adj.T.multiply(adj.T > adj) - adj.multiply(adj.T > adj)
```
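To see what the expression does, here is a small sketch on a made-up three-paper citation graph, assuming `adj` is a SciPy sparse matrix (the edges are hypothetical):

```python
import numpy as np
import scipy.sparse as sp

# Toy directed citation graph (hypothetical): 0 cites 1, 1 cites 2, 0 cites 2, 2 cites 0
rows = np.array([0, 1, 0, 2])
cols = np.array([1, 2, 2, 0])
adj = sp.coo_matrix((np.ones(4), (rows, cols)), shape=(3, 3), dtype=np.float32)

# Keep A_ij, but wherever A_ji > A_ij use A_ji instead,
# so every entry becomes max(A_ij, A_ji) and the result is symmetric
sym = adj + adj.T.multiply(adj.T > adj) - adj.multiply(adj.T > adj)

print(sym.toarray())
```

Unlike the simpler `adj + adj.T`, this does not double-count a pair of papers that cite each other (papers 0 and 2 here): their entry stays 1 instead of becoming 2, so the matrix remains 0-1.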

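Second, the official implementation normalizes the adjacency matrix by plain row normalization, $D^{-1}(A+I)$, which averages over each node and its neighbors. We can also use symmetric normalization, $D^{-1/2}(A+I)D^{-1/2}$, where $D$ is the degree matrix of $A+I$: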

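```python
import numpy as np
import scipy.sparse as sp


def normalize_adj(adj):
    """compute L=D^-0.5 * (A+I) * D^-0.5"""
    # add self-loops: A + I
    adj = adj + sp.eye(adj.shape[0])
    # degree of each node in A + I (at least 1, so the power below is finite)
    degree = np.array(adj.sum(1))
    # D^-0.5 as a sparse diagonal matrix
    d_hat = sp.diags(np.power(degree, -0.5).flatten())
    norm_adj = d_hat.dot(adj).dot(d_hat)
    return norm_adj
```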
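A minimal NumPy sketch comparing the two normalizations on a made-up 3-node path graph (dense arrays for readability; the graph is hypothetical):

```python
import numpy as np

# Toy undirected path graph 0 - 1 - 2 (hypothetical)
A = np.array([[0, 1, 0],
              [1, 0, 1],
              [0, 1, 0]], dtype=float)
A_tilde = A + np.eye(3)        # add self-loops: A + I
deg = A_tilde.sum(axis=1)      # degrees of A + I: [2, 3, 2]

# Row (mean) normalization: D^-1 (A + I)
row_norm = A_tilde / deg[:, None]

# Symmetric normalization: D^-1/2 (A + I) D^-1/2
d_inv_sqrt = 1.0 / np.sqrt(deg)
sym_norm = d_inv_sqrt[:, None] * A_tilde * d_inv_sqrt[None, :]

print(row_norm.sum(axis=1))            # each row sums to 1
print(np.allclose(sym_norm, sym_norm.T))  # True
```

Row normalization makes every row sum to 1, so each node takes the mean of itself and its neighbors, but the result is no longer symmetric (`row_norm[0, 1]` is 1/2 while `row_norm[1, 0]` is 1/3). Symmetric normalization keeps the matrix symmetric and weights edge $(i, j)$ by $1/\sqrt{d_i d_j}$.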

```python
loss_history = []
val_acc_history = []

for epoch in range(epochs):
    model.train()
    # full-batch forward pass over the whole graph
    logits = model(features, adj)
    # semi-supervised: the loss only uses the labeled training nodes
    loss = criterion(logits[idx_train], labels[idx_train])
    train_acc = accuracy(logits[idx_train], labels[idx_train])
    optimizer.zero_grad()
    loss.backward()
    optimizer.step()
    val_acc = test(idx_val)
    loss_history.append(loss.item())
    val_acc_history.append(val_acc.item())
    print("Epoch {:03d}: Loss {:.4f}, TrainAcc {:.4f}, ValAcc {:.4f}".format(
        epoch, loss.item(), train_acc.item(), val_acc.item()))
```
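The loop calls `accuracy` and `test` and uses `criterion`, `optimizer` and `epochs`, none of which are defined in the snippet. Below is a minimal sketch of the two helpers, assuming `labels` holds integer class indices. `evaluate` is a hypothetical variant of `test` that takes its inputs as arguments instead of reading globals, and `FixedModel` is a stand-in used only to exercise it:

```python
import torch


def accuracy(logits, labels):
    """Fraction of nodes whose arg-max prediction equals the label (hypothetical helper)."""
    preds = logits.argmax(dim=1)
    return (preds == labels).float().mean()


def evaluate(model, features, adj, labels, idx):
    """Accuracy on the nodes in idx, without tracking gradients (hypothetical helper)."""
    model.eval()  # switches layers such as dropout to inference mode
    with torch.no_grad():
        logits = model(features, adj)
    return accuracy(logits[idx], labels[idx])


class FixedModel(torch.nn.Module):
    """Stand-in for GCN: ignores adj and returns the features as logits."""

    def forward(self, features, adj):
        return features


logits = torch.tensor([[2.0, 1.0], [0.0, 3.0], [1.0, 0.0]])
labels = torch.tensor([0, 1, 1])
print(accuracy(logits, labels).item())  # 2 of 3 predictions are correct
print(evaluate(FixedModel(), logits, None, labels, torch.tensor([0, 1])).item())
```

Since `GCN.forward` returns raw logits, `nn.CrossEntropyLoss()` would be a natural choice for `criterion`; that too is an assumption, not something the snippet specifies.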

After only 200 epochs of training, the model reaches about 80% classification accuracy on the test set, which shows how effective GCN is.

Conclusion

References

- Semi-Supervised Classification with Graph Convolutional Networks: https://arxiv.org/abs/1609.02907
- How to do Deep Learning on Graphs with Graph Convolutional Networks: https://towardsdatascience.com/how-to-do-deep-learning-on-graphs-with-graph-convolutional-networks-7d2250723780
- Graph Convolutional Networks: http://tkipf.github.io/graph-convolutional-networks
- Graph Convolutional Networks in PyTorch: https://github.com/tkipf/pygcn
- Revisiting the Classic Works on Spectral Graph Convolution: From ChebNet to GCN (in Chinese): https://www.jianshu.com/p/2fd5a2454781
- An Introduction to the Cora Graph Dataset, Processed with Python, for GCN Tasks (in Chinese): https://blog.csdn.net/yeziand01/article/details/93374216
