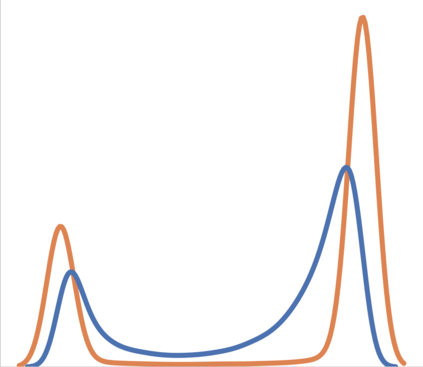

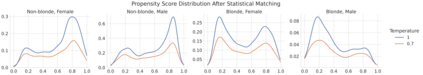

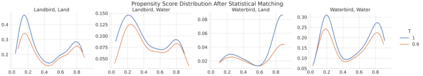

Machine learning classifiers are typically trained to minimise the average error across a dataset. Unfortunately, in practice, this process often exploits spurious correlations caused by subgroup imbalance within the training data, resulting in high average performance but highly variable performance across subgroups. Recent work addressing this problem proposes model patching with CAMEL. That approach uses generative adversarial networks to perform intra-class, inter-subgroup data augmentation, which requires (a) training a number of computationally expensive models and (b) synthetic outputs of sufficient quality for the given domain. In this work, we propose RealPatch, a framework for simpler, faster, and more data-efficient data augmentation based on statistical matching. Our framework performs model patching by augmenting a dataset with real samples, avoiding the need to train generative models for the target task. We demonstrate the effectiveness of RealPatch on three benchmark datasets (CelebA, Waterbirds, and a subset of iWildCam), showing improvements in worst-case subgroup performance and reductions in the subgroup performance gap in binary classification. Furthermore, we conduct experiments on the imSitu dataset, which has 211 classes, a setting where generative-model-based patching such as CAMEL is impractical. We show that RealPatch can successfully eliminate dataset leakage while reducing model leakage and maintaining high utility. The code for RealPatch can be found at https://github.com/wearepal/RealPatch.
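The abstract does not spell out the matching procedure. As a hedged illustration only, the sketch below assumes one common form of statistical matching, propensity-score matching. A logistic-regression model estimates the probability that a sample belongs to subgroup 1 given its features. Each sample is then paired with the real sample from the same class but the other subgroup whose estimated score is closest, provided the gap is within a caliper. The names `Sample`, `fit_propensity`, `match_within_class` and `augment_with_real_pairs` are hypothetical, as are the default `caliper`, `lr` and `epochs` values. None of them are taken from the RealPatch repository, and this is not presented as the paper's exact method.

```python
import math
from dataclasses import dataclass


@dataclass
class Sample:
    features: list[float]  # assumed: a fixed-length feature vector (e.g. an embedding)
    label: int             # target class y
    subgroup: int          # spurious attribute s, assumed binary {0, 1}


def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def fit_propensity(samples, lr=0.1, epochs=500):
    """Hypothetical helper: fit P(subgroup = 1 | features) with logistic
    regression trained by batch gradient descent; returns a scoring function."""
    dim = len(samples[0].features)
    w, b, n = [0.0] * dim, 0.0, len(samples)
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * dim, 0.0
        for s in samples:
            p = sigmoid(sum(wk * xk for wk, xk in zip(w, s.features)) + b)
            err = p - s.subgroup  # gradient of the log-loss w.r.t. the logit
            for k in range(dim):
                grad_w[k] += err * s.features[k]
            grad_b += err
        w = [wk - lr * g / n for wk, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return lambda x: sigmoid(sum(wk * xk for wk, xk in zip(w, x)) + b)


def match_within_class(samples, caliper=0.05):
    """Map each sample index to the index of the same-class, opposite-subgroup
    sample with the closest propensity score, or None if the closest one is
    farther than `caliper`. Matching is done with replacement."""
    score = fit_propensity(samples)
    scores = [score(s.features) for s in samples]
    matches = {}
    for i, a in enumerate(samples):
        best, best_d = None, math.inf
        for j, c in enumerate(samples):
            if c.label != a.label or c.subgroup == a.subgroup:
                continue  # only real samples from the same class, other subgroup
            d = abs(scores[i] - scores[j])
            if d < best_d:
                best, best_d = j, d
        matches[i] = best if best_d <= caliper else None
    return matches


def augment_with_real_pairs(samples, caliper=0.05):
    """Pair each sample with its matched real counterpart; samples without a
    match within the caliper are left unpaired."""
    matches = match_within_class(samples, caliper)
    return [(samples[i], samples[j]) for i, j in matches.items() if j is not None]


if __name__ == "__main__":
    demo = [
        Sample([0.0, 1.0], label=0, subgroup=0),
        Sample([0.2, 0.9], label=0, subgroup=1),
        Sample([1.0, 0.0], label=1, subgroup=1),
        Sample([0.9, 0.2], label=1, subgroup=0),
    ]
    for anchor, partner in augment_with_real_pairs(demo, caliper=0.5):
        print(anchor, "->", partner)
```

In this sketch, each returned pair plays the role that a GAN-generated counterpart plays in CAMEL: a same-class example from the other subgroup. Here, however, it is an existing training sample, so no generative model has to be trained. The caliper trades coverage for match quality: a tighter caliper discards poorly matched pairs at the cost of leaving more samples unpaired.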
