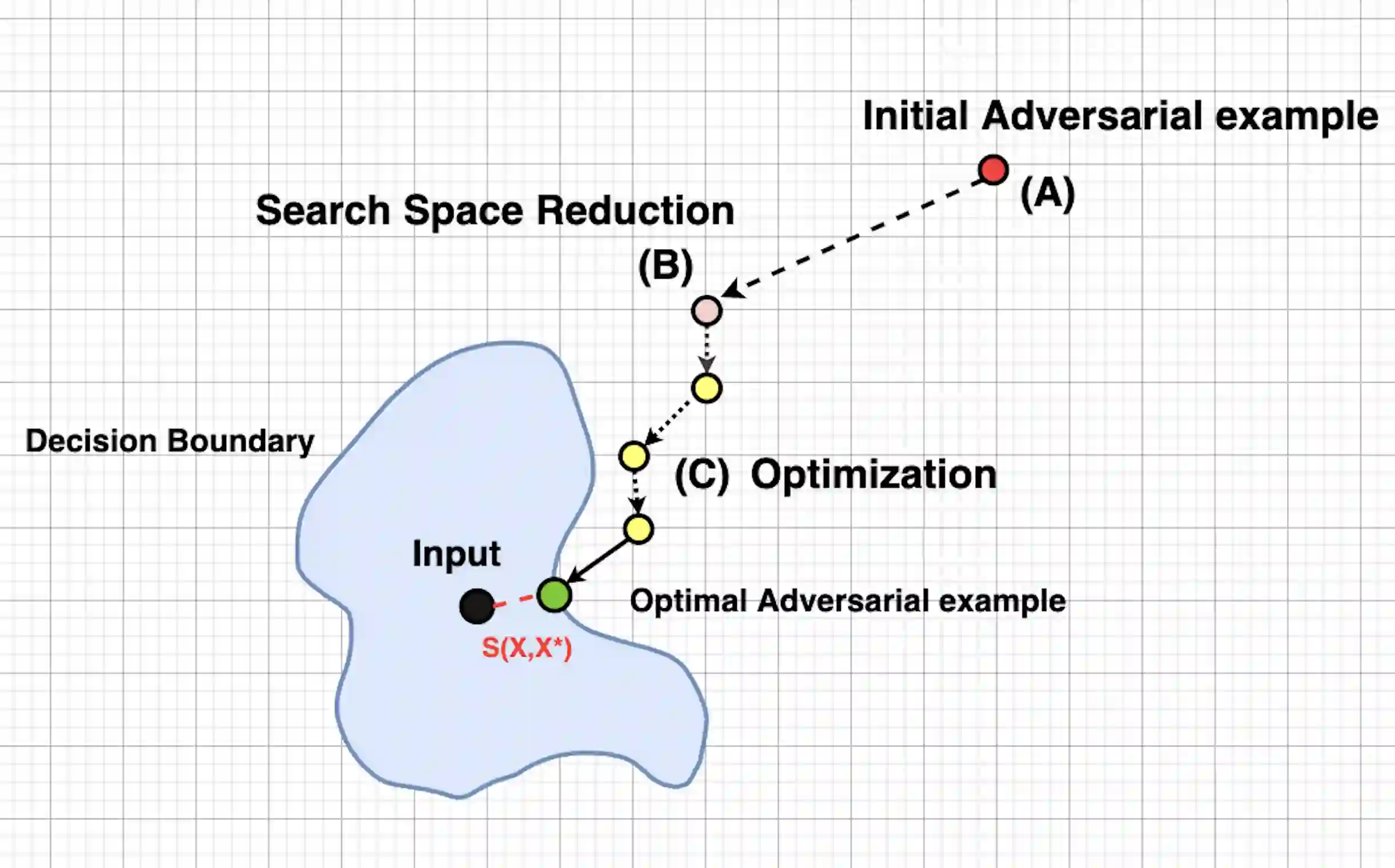

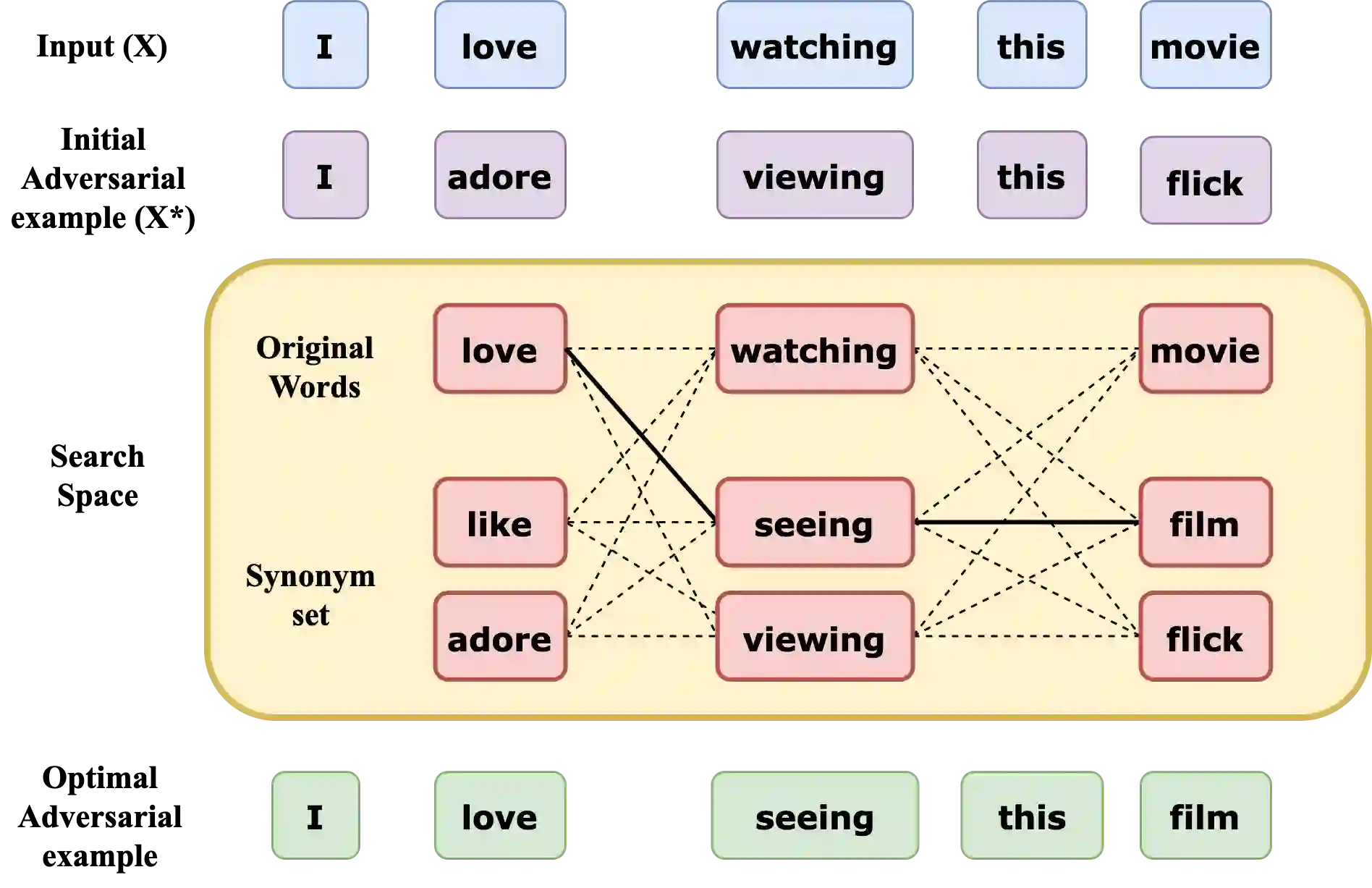

We study the important and challenging task of attacking natural language processing models in a hard-label black-box setting. We propose a decision-based attack strategy that crafts high-quality adversarial examples on text classification and entailment tasks. Our strategy leverages a population-based optimization algorithm to craft plausible and semantically similar adversarial examples by observing only the top label predicted by the target model. At each iteration, the optimization procedure allows word replacements that maximize the overall semantic similarity between the original and the adversarial text. Further, our approach does not rely on substitute models or any kind of training data. We demonstrate the efficacy of our approach through extensive experiments and ablation studies on five state-of-the-art target models across seven benchmark datasets. Compared with attacks proposed in prior literature, we achieve a higher success rate with a lower word perturbation percentage, and we do so in a highly restricted setting.
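
To make the hard-label, population-based idea concrete, here is a minimal sketch under stated assumptions. It is not the authors' algorithm. The target model `toy_classifier`, the synonym table `SYNONYMS`, and the word-overlap `similarity` (a stand-in for a real sentence-encoder similarity) are all hypothetical. Likewise, `random_init`, `mutate`, `crossover`, and `attack` are illustrative names. The sketch queries only the predicted label. It first finds any adversarial text by random synonym substitution, then runs a simple genetic search that keeps every candidate adversarial while pushing it back toward the original text.

```python
import random
from typing import Callable, Dict, List, Optional

Words = List[str]

# Hypothetical synonym table; a real attack would derive candidates from word embeddings.
SYNONYMS: Dict[str, List[str]] = {
    "good": ["fine", "decent", "nice"],
    "loved": ["liked", "enjoyed"],
    "movie": ["film", "picture"],
}

def toy_classifier(words: Words) -> int:
    """Hypothetical black-box target: exposes only the top label (1 = positive)."""
    positive = {"good", "great", "loved", "nice"}
    return 1 if sum(w in positive for w in words) >= 2 else 0

def similarity(a: Words, b: Words) -> float:
    """Stand-in for semantic similarity: fraction of positions left unchanged."""
    return sum(x == y for x, y in zip(a, b)) / len(a)

def random_init(orig: Words, predict: Callable[[Words], int],
                rng: random.Random, max_tries: int = 100) -> Optional[Words]:
    """Substitute synonyms at random until the hard label flips."""
    y0 = predict(orig)
    positions = [i for i, w in enumerate(orig) if w in SYNONYMS]
    for _ in range(max_tries):
        cand = list(orig)
        for i in positions:
            cand[i] = rng.choice(SYNONYMS[orig[i]])
        if predict(cand) != y0:
            return cand
    return None

def mutate(adv: Words, orig: Words, predict: Callable[[Words], int],
           y0: int, rng: random.Random) -> Words:
    """Try to restore one substituted word while keeping the label flipped."""
    changed = [i for i in range(len(orig)) if adv[i] != orig[i]]
    rng.shuffle(changed)
    for i in changed:
        cand = list(adv)
        cand[i] = orig[i]
        if predict(cand) != y0:
            return cand
    return adv  # no single restoration keeps it adversarial

def crossover(p1: Words, p2: Words, orig: Words, predict: Callable[[Words], int],
              y0: int, rng: random.Random) -> Words:
    """Mix two parents word by word; fall back to the fitter parent if not adversarial."""
    child = [rng.choice((a, b)) for a, b in zip(p1, p2)]
    if predict(child) != y0:
        return child
    return max(p1, p2, key=lambda c: similarity(c, orig))

def attack(orig: Words, predict: Callable[[Words], int], pop_size: int = 6,
           generations: int = 10, seed: int = 0) -> Optional[Words]:
    rng = random.Random(seed)
    y0 = predict(orig)
    start = random_init(orig, predict, rng)
    if start is None:
        return None
    population = [mutate(start, orig, predict, y0, rng) for _ in range(pop_size)]
    for _ in range(generations):
        # Fitness = similarity to the original; every member is already adversarial.
        population.sort(key=lambda c: similarity(c, orig), reverse=True)
        weights = [similarity(c, orig) + 1e-6 for c in population]
        children = [population[0]]  # elitism keeps the best candidate
        while len(children) < pop_size:
            p1, p2 = rng.choices(population, weights=weights, k=2)
            child = crossover(p1, p2, orig, predict, y0, rng)
            children.append(mutate(child, orig, predict, y0, rng))
        population = children
    return max(population, key=lambda c: similarity(c, orig))
```

For example, `attack("i loved this good movie".split(), toy_classifier)` returns a word list that the toy model labels differently from the original. In this toy setup, the result has at most two substitutions. The design keeps every population member adversarial and optimizes only similarity, which mirrors the abstract's framing: once the label has flipped, only the top-label observation is needed to check that a candidate remains valid.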

翻译:我们研究了在硬标签黑盒设置中攻击自然语言处理模型的重要和具有挑战性的任务。我们提出了一个基于决定的攻击战略,在文本分类和随附任务方面设计出高质量的对抗性实例。我们提出的攻击战略利用基于人口的优化算法,只通过观察目标模型预测的顶级标签来生成合理和语义相似的对抗性实例。在每一次迭代中,优化程序允许用词替换,使原始文本和敌对文本之间的整体语义相似性最大化。此外,我们的方法并不依赖于使用替代模型或任何类型的培训数据。我们通过对七个基准数据集的五个最先进的目标模型进行广泛的实验和调整研究,展示了我们拟议方法的有效性。与先前文献中提议的攻击相比,我们能够在高度受限制的环境中,以较低的词扰动率实现更高的成功率。