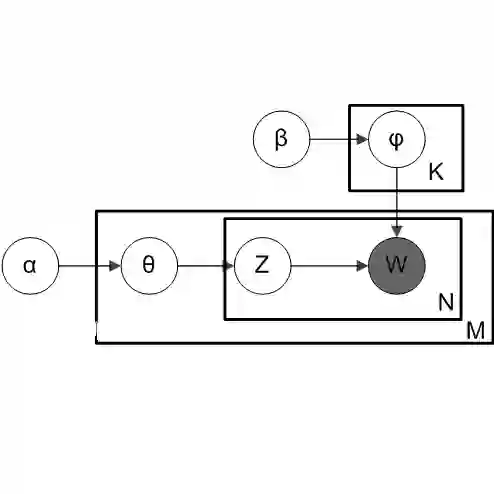

Context: Topic modeling finds human-readable structures in unstructured textual data. A widely used topic modeler is Latent Dirichlet Allocation (LDA). When run on different datasets, LDA suffers from "order effects", i.e., different topics are generated if the order of the training data is shuffled. Such order effects introduce a systematic error for any study. This error can lead to misleading results; specifically, inaccurate topic descriptions and a reduction in the efficacy of text mining classification results. Objective: To provide a method in which distributions generated by LDA are more stable and can be used for further analysis. Method: We use LDADE, a search-based software engineering tool that tunes LDA's parameters using DE (Differential Evolution). LDADE is evaluated on data from a programmer information exchange site (Stack Overflow), title and abstract text of thousands of Software Engineering (SE) papers, and software defect reports from NASA. Results were collected across different implementations of LDA (Python+Scikit-Learn, Scala+Spark), across different platforms (Linux, Macintosh), and for different kinds of LDAs (VEM, or using Gibbs sampling). Results were scored via topic stability and text mining classification accuracy. Results: In all treatments: (i) standard LDA exhibits very large topic instability; (ii) LDADE's tunings dramatically reduce cluster instability; (iii) LDADE also leads to improved performance for supervised as well as unsupervised learning. Conclusion: Due to topic instability, using standard LDA with its "off-the-shelf" settings should now be deprecated. Also, in the future, we should require SE papers that use LDA to test and (if needed) mitigate LDA topic instability. Finally, LDADE is a candidate technology for effectively and efficiently reducing that instability.
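The two ingredients named in the Method, a topic stability score and a Differential Evolution search over LDA's parameters, can be illustrated with a minimal standard-library sketch. It is not the LDADE implementation. The function names, the overlap-based stability measure, and the default values of `f`, `cr`, `pop_size`, and `generations` are all assumptions made for illustration. The LDA training step is omitted: in practice `score` would train LDA on several shuffled copies of the data with the candidate parameters and return the resulting stability, whereas here a toy quadratic stands in for it.

```python
import random
from statistics import median


def stability(runs, min_shared):
    """Median, over topics of the first run, of the fraction of other runs
    that contain a topic sharing at least `min_shared` top terms.
    (Illustrative measure; an assumption, not the paper's exact score.)"""
    reference, others = runs[0], runs[1:]
    fractions = []
    for topic in reference:
        hits = sum(
            any(len(set(topic) & set(other)) >= min_shared for other in run)
            for run in others
        )
        fractions.append(hits / len(others))
    return median(fractions)


def differential_evolution(score, bounds, pop_size=10, f=0.7, cr=0.3,
                           generations=20, seed=0):
    """Maximize `score` inside box `bounds` with a basic DE/rand/1/bin loop."""
    rng = random.Random(seed)
    pop = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(pop_size)]
    fit = [score(p) for p in pop]
    for _ in range(generations):
        for i in range(pop_size):
            # Pick three distinct members other than the current one.
            a, b, c = rng.sample([p for j, p in enumerate(pop) if j != i], 3)
            forced = rng.randrange(len(bounds))  # always mutate one dimension
            trial = []
            for d, (lo, hi) in enumerate(bounds):
                if d == forced or rng.random() < cr:
                    value = a[d] + f * (b[d] - c[d])
                    trial.append(min(hi, max(lo, value)))  # clip to bounds
                else:
                    trial.append(pop[i][d])
            trial_fit = score(trial)
            if trial_fit >= fit[i]:  # keep the trial only if it is no worse
                pop[i], fit[i] = trial, trial_fit
    best = max(range(pop_size), key=fit.__getitem__)
    return pop[best], fit[best]


# Three hypothetical LDA runs on shuffled data, each a list of top-term lists.
runs = [
    [["java", "class", "object"], ["sql", "table", "query"]],
    [["sql", "query", "table"], ["class", "java", "method"]],
    [["java", "object", "class"], ["css", "html", "page"]],
]
print(stability(runs, min_shared=2))  # 0.75

# Toy stand-in objective; a real one would train LDA and call stability().
toy_bounds = [(-5.0, 5.0), (-5.0, 5.0)]
best, best_fit = differential_evolution(
    lambda p: -((p[0] - 2) ** 2 + (p[1] + 1) ** 2), toy_bounds
)
print(best, best_fit)
```

In the toy data, the first topic reappears in both other runs while the second reappears in only one, so the median fraction is 0.75. Raising `min_shared` makes the score stricter, which is one way to probe how fragile the topics are.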
