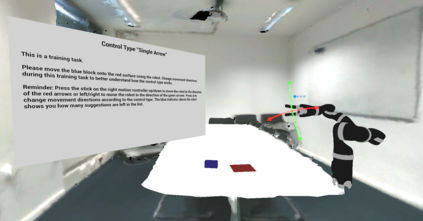

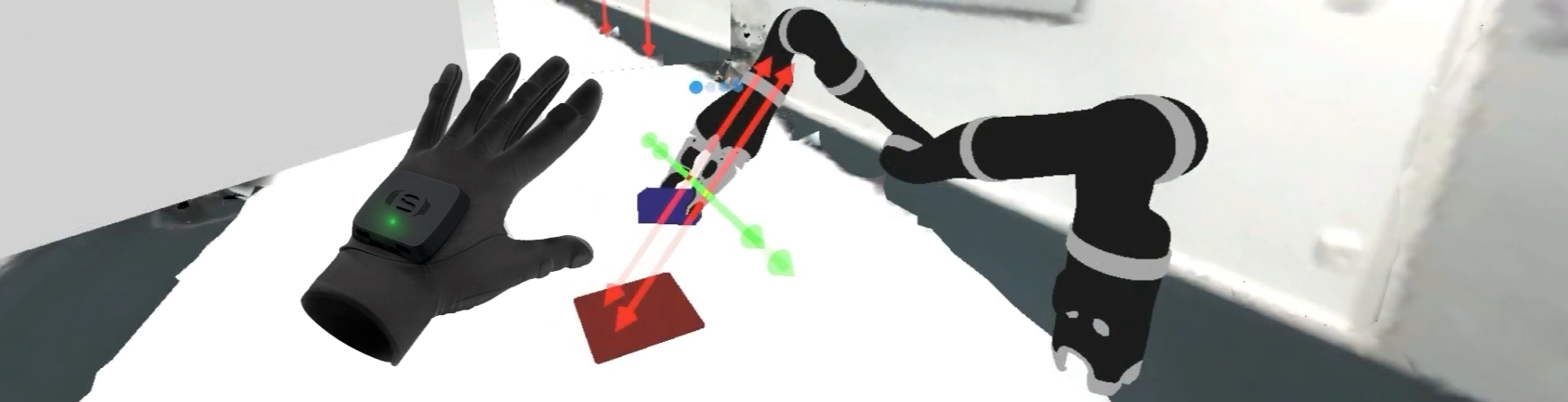

Robots are found in a growing number of areas where they collaborate closely with humans. Enabled by lightweight materials and safety sensors, these cobots are becoming increasingly popular in domestic care, where they support people with physical impairments in their everyday lives. However, when cobots act autonomously, it remains challenging for human collaborators to understand and predict their behavior, which is crucial for achieving trust and user acceptance. One significant aspect of predicting cobot behavior is understanding their motion intention and comprehending how they "think" about their actions. Moreover, the human visual and auditory modalities are often already occupied by other information sources, which frequently makes them unsuitable for transmitting such information. To tackle this challenge, we are working on a solution that communicates cobot intention via haptic feedback. In our concept, we map the cobot's planned motions to different haptic patterns to extend the visual intention feedback.
