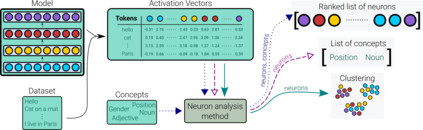

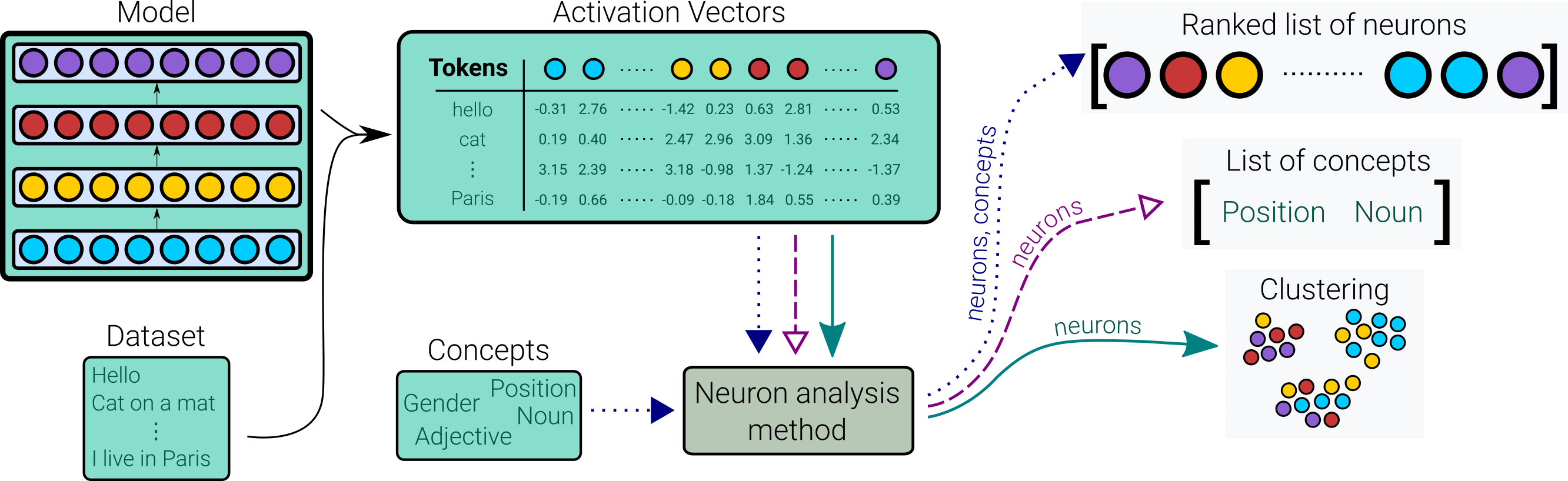

The proliferation of deep neural networks in various domains has increased the need to interpret these models. Preliminary work in this direction, and the papers that surveyed it, focused on analyzing high-level representations. However, a recent branch of work has concentrated on interpretability at a more granular level: analyzing individual neurons within these models. In this paper, we survey the work done on neuron analysis, including: i) methods to discover and understand neurons in a network, ii) evaluation methods, iii) major findings that neuron analysis has revealed, including cross-architectural comparisons, iv) applications of neuron probing, such as controlling the model and domain adaptation, and v) a discussion of open issues and future research directions.
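As a rough illustration of item i), the sketch below ranks the neurons of a layer by the absolute Pearson correlation between each neuron's activation and a binary concept label (for example, "this token is a noun"). This is an assumed, deliberately simplified stand-in chosen for illustration, not a method taken from the paper. The function names `pearson` and `rank_neurons` and the input layout (one row of neuron activations per token) are hypothetical.

```python
import math
from typing import List, Sequence


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation of two equal-length sequences.

    Returns 0.0 when either sequence is constant, so a neuron that never
    changes its activation simply gets the lowest possible score.
    """
    n = len(xs)
    mx = sum(xs) / n
    my = sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    if vx == 0 or vy == 0:
        return 0.0
    return cov / math.sqrt(vx * vy)


def rank_neurons(activations: List[List[float]], labels: List[int]) -> List[int]:
    """Rank neuron indices by |correlation| with a binary concept label.

    activations[t][j]: activation of neuron j on token t (hypothetical format).
    labels[t]: 1 if token t carries the concept, else 0.
    """
    num_neurons = len(activations[0])
    scores = []
    for j in range(num_neurons):
        column = [row[j] for row in activations]
        scores.append(abs(pearson(column, labels)))
    # Most concept-correlated neurons first; ties broken by neuron index.
    return sorted(range(num_neurons), key=lambda j: (-scores[j], j))


if __name__ == "__main__":
    # Toy data: 4 tokens x 3 neurons; the first two tokens carry the concept.
    acts = [
        [0.9, 0.1, 0.5],
        [0.8, 0.3, 0.4],
        [0.1, 0.2, 0.6],
        [0.2, 0.4, 0.5],
    ]
    labels = [1, 1, 0, 0]
    print(rank_neurons(acts, labels))  # → [0, 2, 1]
```

Using the absolute value treats strongly negative correlation as equally informative, since a neuron that reliably switches off for a concept also encodes it. Methods in the literature go further (for example, training probing classifiers or intervening on neurons), which a single univariate score like this cannot capture.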
