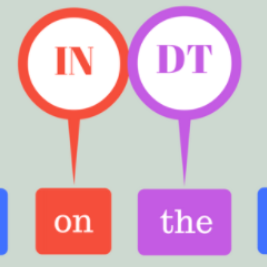

Contextual word representations have become standard in modern natural language processing systems. These models use subword tokenization to handle large vocabularies and unknown words. Using such models at the word level requires a way of pooling the multiple subwords that correspond to a single word. In this paper we investigate how the choice of subword pooling affects downstream performance on three tasks: morphological probing, POS tagging and NER, in 9 typologically diverse languages. We compare the pooling strategies in two massively multilingual models, mBERT and XLM-RoBERTa. For morphological tasks, the widely used "choose the first subword" strategy is the worst, and the best results are obtained by using attention over the subwords. For POS tagging both of these strategies perform poorly, and the best choice is to use a small LSTM over the subwords. The same strategy works best for NER, and we show that mBERT is better than XLM-RoBERTa in all 9 languages. We publicly release all code, data and the full result tables at https://github.com/juditacs/subword-choice.
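
To make the notion of subword pooling concrete, the following is a minimal sketch in plain Python, not the released implementation. It assumes each subword already has a vector and that a list `word_ids` (a hypothetical name) maps every subword to the index of its word. The function names are illustrative, and the attention variant uses a fixed `query` vector as a stand-in for parameters that would be learned in practice. The LSTM-based pooling is omitted because it needs a trained recurrent network.

```python
import math
from typing import Callable, Dict, List, Sequence

Vector = List[float]


def pool_first(subwords: Sequence[Vector]) -> Vector:
    """'Choose the first subword': keep only the first subword vector."""
    return list(subwords[0])


def pool_last(subwords: Sequence[Vector]) -> Vector:
    """Keep only the last subword vector."""
    return list(subwords[-1])


def pool_mean(subwords: Sequence[Vector]) -> Vector:
    """Average the subword vectors dimension by dimension."""
    n = len(subwords)
    return [sum(column) / n for column in zip(*subwords)]


def pool_attention(subwords: Sequence[Vector], query: Vector) -> Vector:
    """Softmax-weighted sum of subword vectors.

    Assumption: scores are dot products with a fixed `query`; in a real
    model the scoring parameters would be trained with the task.
    """
    scores = [sum(q * x for q, x in zip(query, vec)) for vec in subwords]
    top = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]
    dim = len(subwords[0])
    return [sum(w * vec[i] for w, vec in zip(weights, subwords)) for i in range(dim)]


def pool_words(
    subword_vectors: Sequence[Vector],
    word_ids: Sequence[int],
    pool: Callable[[Sequence[Vector]], Vector],
) -> List[Vector]:
    """Group subword vectors by word index and pool each group."""
    groups: Dict[int, List[Vector]] = {}
    for vec, wid in zip(subword_vectors, word_ids):
        groups.setdefault(wid, []).append(vec)
    return [pool(groups[wid]) for wid in sorted(groups)]


# Toy input: three subwords, where the first two belong to word 0.
vectors = [[1.0, 0.0], [3.0, 2.0], [5.0, 4.0]]
word_ids = [0, 0, 1]

first_pooled = pool_words(vectors, word_ids, pool_first)
mean_pooled = pool_words(vectors, word_ids, pool_mean)
attn_pooled = pool_words(vectors, word_ids, lambda g: pool_attention(g, [0.0, 0.0]))
```

With a zero `query` every subword gets the same attention weight, so `attn_pooled` coincides with `mean_pooled` on this toy input; a non-zero query shifts weight toward the subwords it scores higher.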
