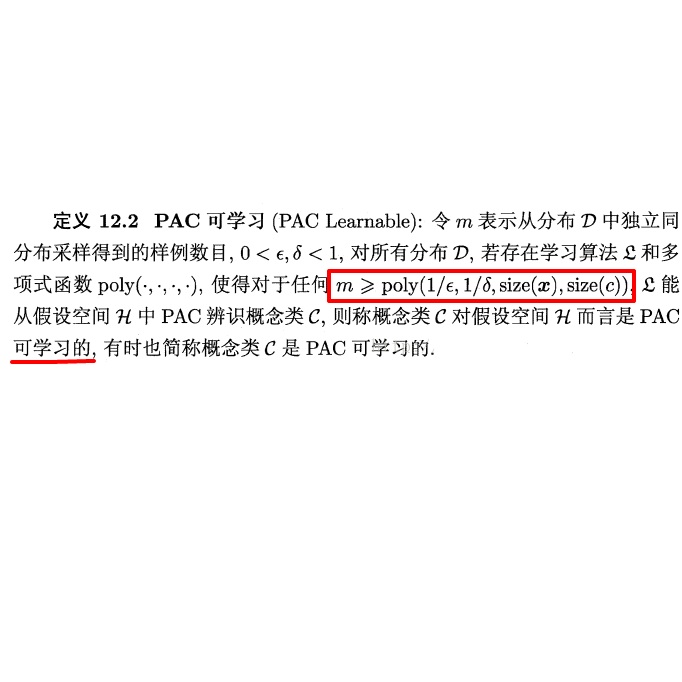

We introduce a novel clean-label targeted poisoning attack on learning mechanisms. While classical poisoning attacks typically corrupt data via addition, modification and omission, our attack relies on data omission only. Our attack causes the learner to misclassify a single targeted test sample of the attacker's choice, without manipulating that sample. We demonstrate the effectiveness of omission attacks against a large variety of learners, including deep neural networks, SVMs and decision trees, on several datasets, including MNIST, IMDB and CIFAR. Restricting the attack to data omission is also beneficial, because it makes the attack simpler to implement and analyze. We show that, with a low attack budget, our attack's success rate is above 80%, and in some cases 100%, for white-box learning. For black-box learning, it is systematically above the reference benchmark. In both the white-box and black-box cases, changes in model accuracy are negligible, regardless of the specific learner and dataset. We also prove theoretically, in a simplified agnostic PAC learning framework, that, subject to dataset size and distribution, our omission attack succeeds with high probability against any successful simplified agnostic PAC learner.
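To make the idea of an omission-only, clean-label targeted attack concrete, here is a minimal toy sketch. It is not the authors' algorithm. It swaps in a simple greedy heuristic against a k-nearest-neighbour classifier, and the names `knn_predict`, `omission_attack`, `TRAIN` and the `budget` parameter are hypothetical, chosen for illustration. The attacker only deletes training points: no labels are flipped, nothing is added, and the target sample is left untouched. It removes the target's nearest same-label neighbours until the k-NN vote flips or the deletion budget runs out.

```python
import math
from collections import Counter

# Hypothetical toy training set: (features, label) pairs for two classes.
TRAIN = [
    ((0.0, 0.0), "A"), ((0.4, 0.1), "A"), ((0.1, 0.6), "A"), ((1.0, 1.0), "A"),
    ((2.0, 2.0), "B"), ((2.6, 2.1), "B"), ((2.2, 2.7), "B"), ((3.0, 3.0), "B"),
]


def knn_predict(train, x, k=3):
    """Majority label among the k training points closest to x (Euclidean)."""
    nearest = sorted(train, key=lambda p: math.dist(p[0], x))[:k]
    votes = Counter(label for _, label in nearest)
    # Counter.most_common orders equal counts by first appearance,
    # so a tie goes to the label that appears nearest to x.
    return votes.most_common(1)[0][0]


def omission_attack(train, x_target, y_target, budget, k=3):
    """Greedy omission-only attack (illustrative heuristic, not the paper's method).

    Deletes the closest training points that share the target's true label until
    the k-NN prediction for x_target changes or `budget` deletions are used.
    Labels and the target itself are never modified, so the attack is clean-label.
    Returns (kept_training_set, removed_points).
    """
    kept = list(train)
    removed = []
    while len(removed) < budget and knn_predict(kept, x_target, k) == y_target:
        same_label = [p for p in kept if p[1] == y_target]
        if not same_label:
            break
        # Omit the same-label point that most strongly supports the true label.
        victim = min(same_label, key=lambda p: math.dist(p[0], x_target))
        kept.remove(victim)
        removed.append(victim)
    return kept, removed


if __name__ == "__main__":
    target = (1.2, 1.2)  # true label "A"
    kept, removed = omission_attack(TRAIN, target, "A", budget=2)
    print("before:", knn_predict(TRAIN, target))
    print("after: ", knn_predict(kept, target), "removed:", removed)
    # Probe points away from the target, to check collateral damage.
    print("probes:", knn_predict(kept, (0.2, 0.2)), knn_predict(kept, (2.8, 2.8)))
```

On this toy data, deleting two "A" points flips the prediction for the target at (1.2, 1.2) from "A" to "B", while the two probe points keep their labels ("A" and "B"); with `budget=1` the attack fails. Greedy nearest-neighbour deletion is used here only because its effect on k-NN is easy to trace by hand; attacking SVMs or neural networks would require a different way of choosing which points to omit.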
