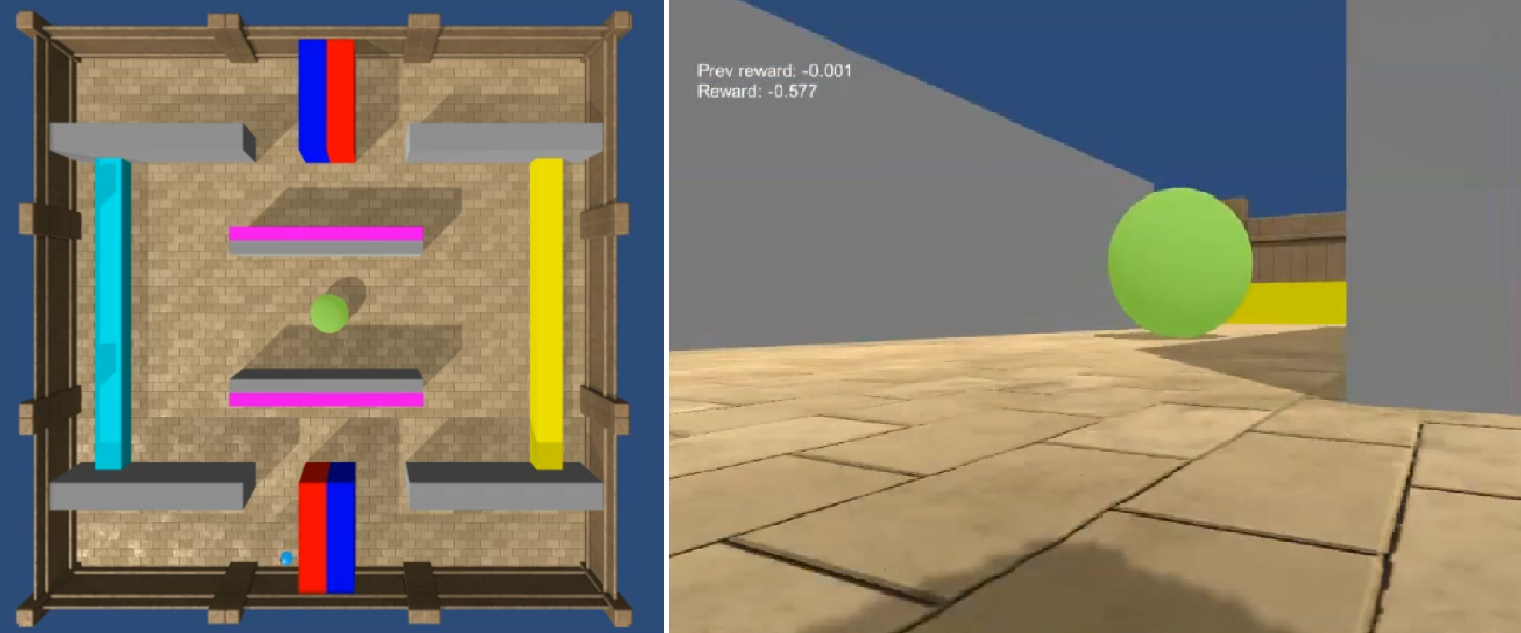

State-of-the-art deep reinforcement learning algorithms are sample-inefficient because they require a large number of episodes to reach asymptotic performance. Episodic Reinforcement Learning (ERL) algorithms, inspired by the mammalian hippocampus, typically use extended memory systems to bootstrap learning from past events and thereby overcome this sample-inefficiency problem. However, such memory augmentations are often used as mere buffers from which isolated past experiences are drawn for offline learning (e.g., replay). Here, we demonstrate that including a bias in the acquired memory content, derived from the order of episodic sampling, improves both the sample and memory efficiency of an episodic control algorithm. We test our Sequential Episodic Control (SEC) model in a foraging task and show that storing and using integrated episodes as event sequences leads to faster learning with lower memory requirements than a standard ERL benchmark, Model-Free Episodic Control, which buffers isolated events only. We also study the effect of memory constraints and forgetting on the sequential and non-sequential versions of the SEC algorithm. Furthermore, we discuss how a hippocampal-like fast memory system could bootstrap slow cortical and subcortical learning subserving habit formation in the mammalian brain.
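The contrast between buffering isolated events and storing whole episodes as ordered sequences can be illustrated with a toy memory. The sketch below is not the SEC algorithm described in the abstract: the class name `SequentialEpisodicMemory`, the positive-reward storage rule, the oldest-first forgetting rule, and the predecessor-matching lookup are all assumptions made purely for illustration.

```python
from collections import deque
from typing import Deque, Hashable, List, Optional, Tuple

Step = Tuple[Hashable, int]  # (state, action); a hypothetical encoding


class SequentialEpisodicMemory:
    """Toy memory that keeps rewarded episodes as ordered step sequences."""

    def __init__(self, capacity: int) -> None:
        # Assumption: a bounded deque models the memory constraint, and
        # dropping the oldest episode when full stands in for forgetting.
        self.episodes: Deque[Tuple[List[Step], float]] = deque(maxlen=capacity)

    def store(self, steps: List[Step], reward: float) -> None:
        # Assumption: only episodes that ended with positive reward are kept.
        if reward > 0:
            self.episodes.append((list(steps), reward))

    def suggest(self, state: Hashable,
                prev_state: Optional[Hashable] = None) -> Optional[int]:
        """Return the action of the best-rewarded stored step matching `state`.

        If `prev_state` is given, a step only counts when its predecessor in
        the stored sequence has that state (a simple sequential bias).
        Without it, every step is treated as an isolated event.
        """
        best_action, best_reward = None, float("-inf")
        for steps, reward in self.episodes:
            for i, (s, a) in enumerate(steps):
                if s != state:
                    continue
                if prev_state is not None and (i == 0 or steps[i - 1][0] != prev_state):
                    continue
                if reward > best_reward:
                    best_action, best_reward = a, reward
        return best_action
```

Calling `suggest(state)` alone mimics a non-sequential lookup over isolated events, while passing `prev_state` restricts the match to steps that occurred in the same order within a stored episode. A realistic agent would use a similarity measure over continuous states rather than exact equality.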
