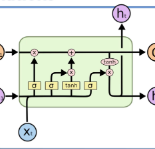

Nowadays, the development of social media allows people to access the latest news easily. During the COVID-19 pandemic, timely access to news is important because it lets people take appropriate protective measures. However, fake news is proliferating, which is a serious problem, especially during a global pandemic. Misleading fake news can cause significant harm to individuals and to society. COVID-19 fake news detection has therefore become a new and important task in the NLP field. A further difficulty is that fake news always contains both a correct portion and an incorrect portion, which makes the classification task harder. In this paper, we fine-tune the pre-trained Bidirectional Encoder Representations from Transformers (BERT) model as our base model. On top of the fine-tuned BERT model we add either BiLSTM layers or CNN layers, and we train each variant with the BERT parameters either frozen or not frozen. The evaluation results show that our best model (the fine-tuned BERT model with frozen parameters plus BiLSTM layers) achieves state-of-the-art results on the COVID-19 fake news detection task. We also explore keyword evaluation methods using our best model and evaluate the model's performance after the keywords are removed.
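The keyword-removal evaluation can be pictured as an ablation. You strip a set of keywords from every test text, rerun the classifier, and compare accuracy before and after. This is a minimal sketch, not the authors' implementation. The abstract does not say how keywords are selected or matched, so the sketch assumes the keyword list is already given and that matching is whole-word and case-insensitive. `remove_keywords`, `accuracy`, `keyword_ablation` and `toy_predict` are hypothetical names. `toy_predict` only stands in for the fine-tuned BERT + BiLSTM model.

```python
import re
from typing import Callable, Iterable, Sequence, Tuple


def remove_keywords(text: str, keywords: Iterable[str]) -> str:
    """Delete whole-word, case-insensitive occurrences of each keyword (assumed matching rule)."""
    # Longest keywords first, so multi-word phrases are matched before their parts.
    kws = sorted({k for k in keywords if k}, key=len, reverse=True)
    if not kws:
        return text
    pattern = re.compile(
        r"\b(?:" + "|".join(re.escape(k) for k in kws) + r")\b", re.IGNORECASE
    )
    # Replace each match with a space, then collapse the leftover whitespace.
    return " ".join(pattern.sub(" ", text).split())


def accuracy(predict: Callable[[str], int], texts: Sequence[str], labels: Sequence[int]) -> float:
    """Fraction of texts whose predicted label equals the gold label."""
    if not labels:
        raise ValueError("labels must be non-empty")
    correct = sum(1 for t, y in zip(texts, labels) if predict(t) == y)
    return correct / len(labels)


def keyword_ablation(
    predict: Callable[[str], int],
    texts: Sequence[str],
    labels: Sequence[int],
    keywords: Iterable[str],
) -> Tuple[float, float]:
    """Return (accuracy on original texts, accuracy after removing keywords)."""
    kws = list(keywords)
    before = accuracy(predict, texts, labels)
    after = accuracy(predict, [remove_keywords(t, kws) for t in texts], labels)
    return before, after


def toy_predict(text: str) -> int:
    # Hypothetical stand-in for the trained model: label 1 (fake) if "cure" appears.
    return 1 if "cure" in text.lower() else 0


if __name__ == "__main__":
    texts = ["Garlic is a cure for COVID-19", "Vaccines passed clinical trials"]
    labels = [1, 0]
    print(keyword_ablation(toy_predict, texts, labels, ["cure"]))  # (1.0, 0.5)
```

In this sketch, a large drop in accuracy after removal would suggest that the classifier leans heavily on those keywords. To use it with a real model, replace `toy_predict` with a function that tokenizes the text, runs the model and returns the predicted label.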
