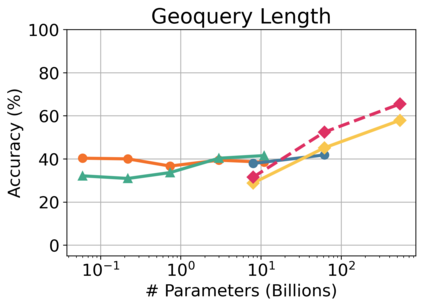

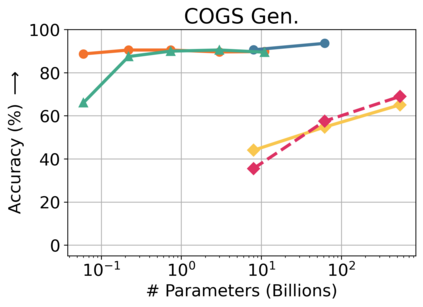

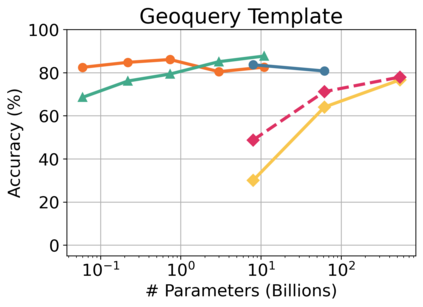

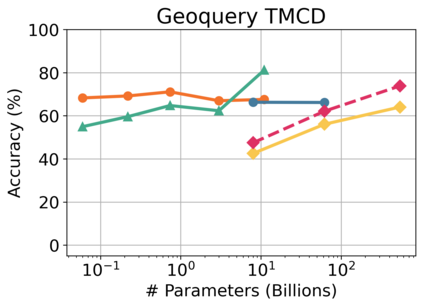

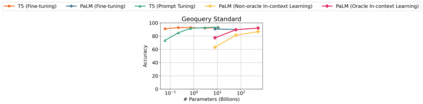

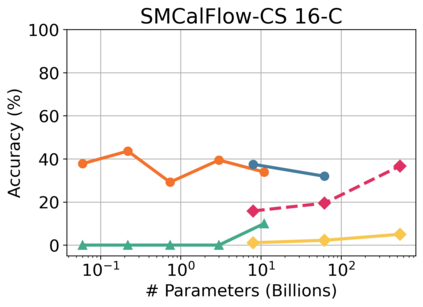

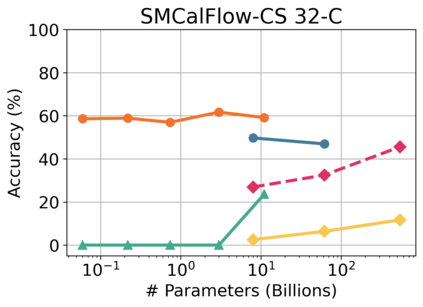

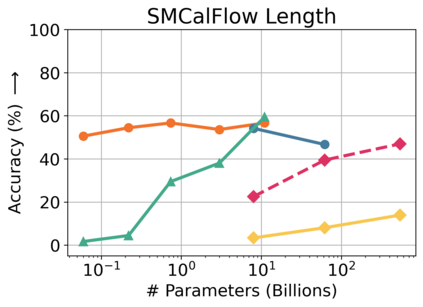

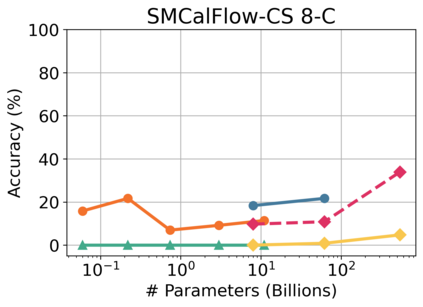

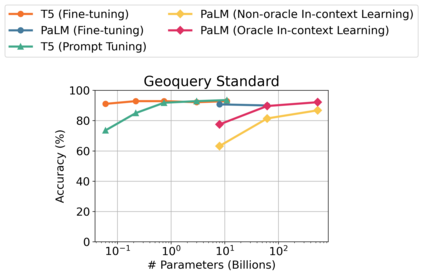

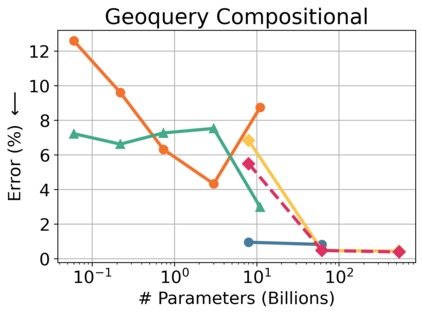

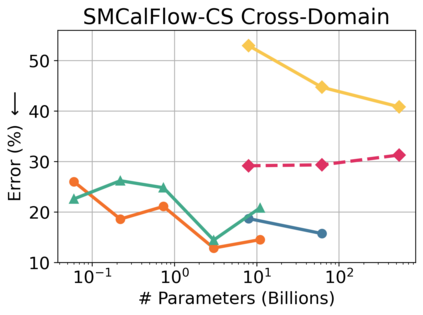

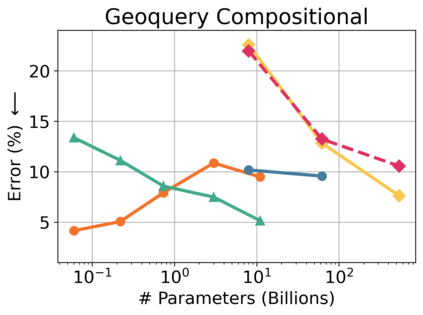

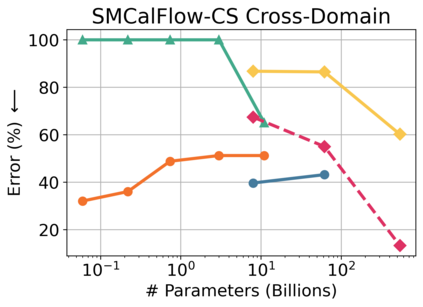

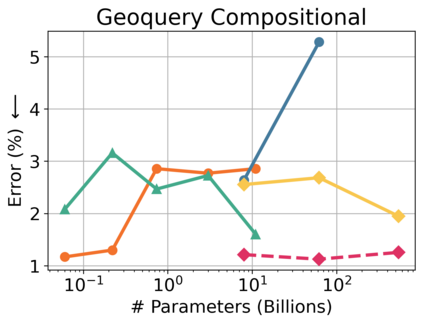

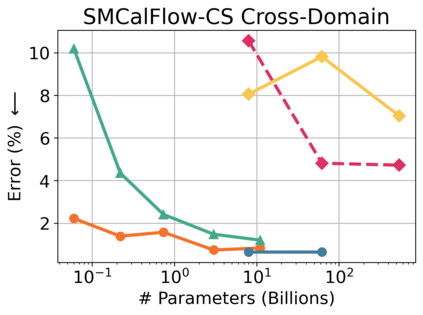

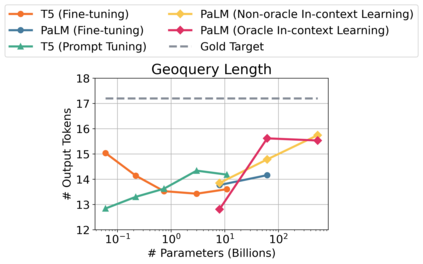

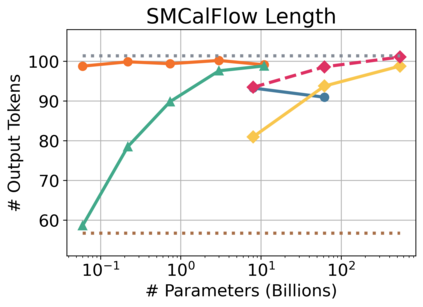

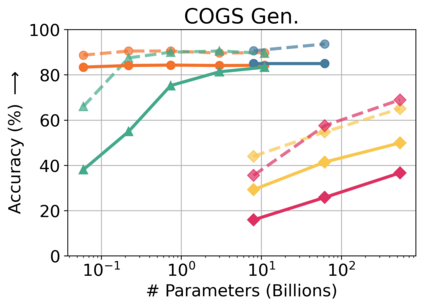

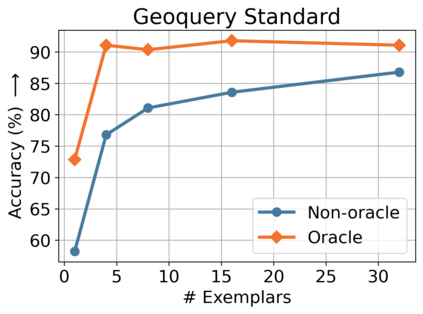

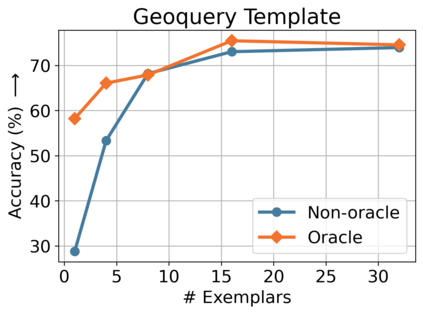

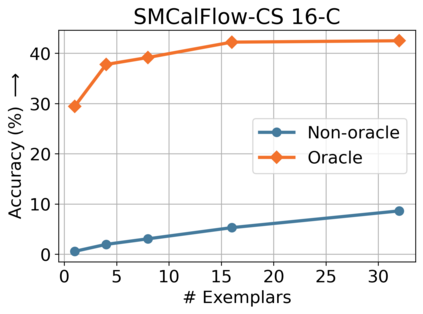

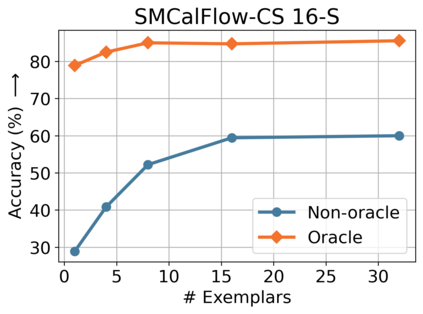

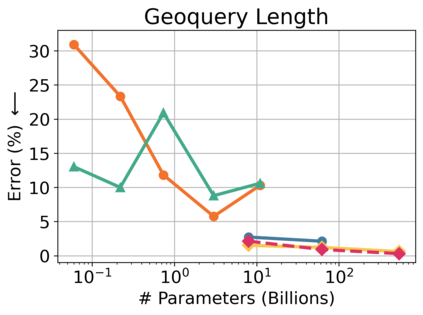

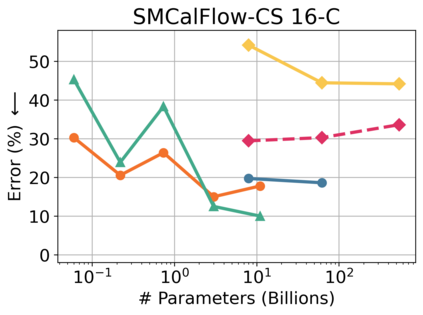

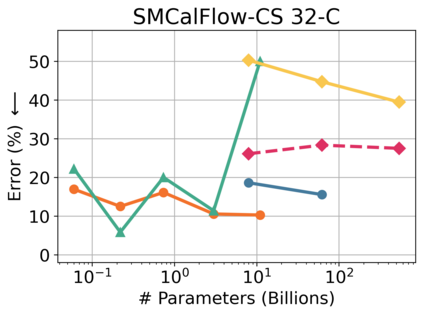

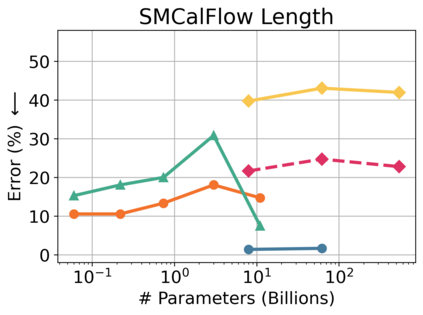

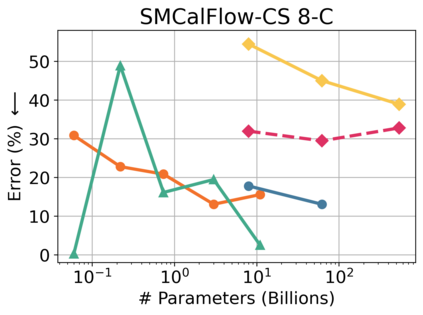

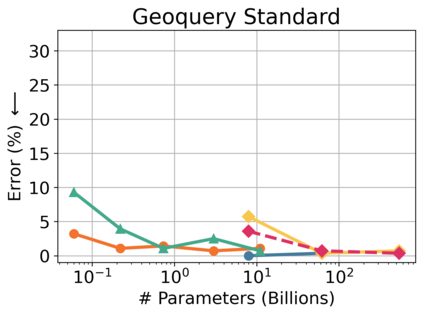

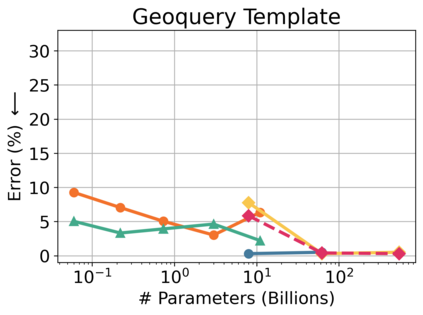

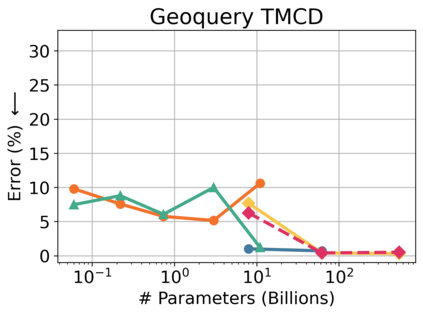

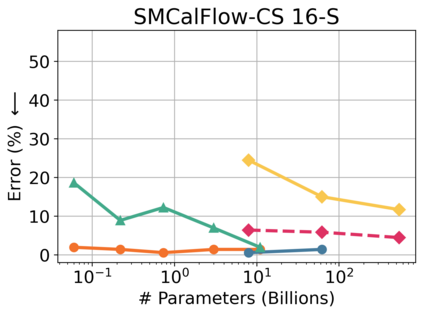

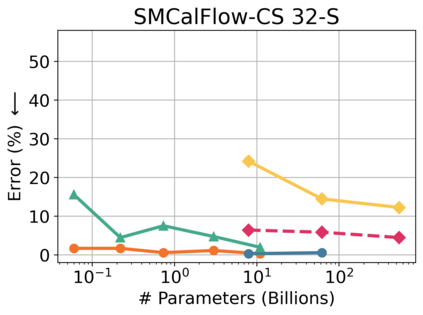

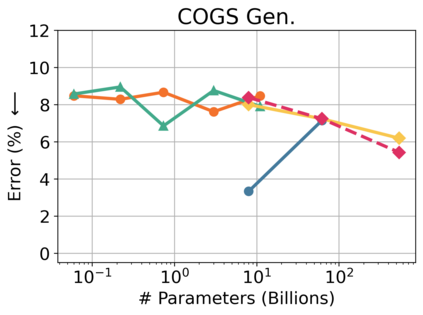

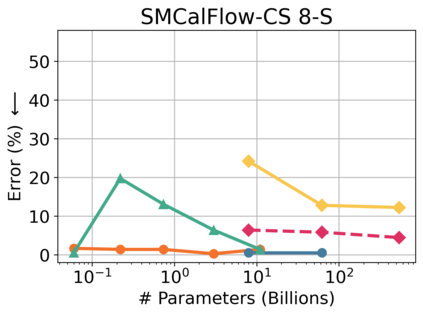

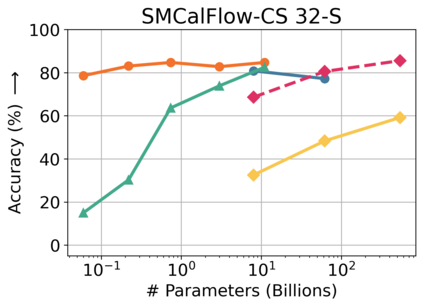

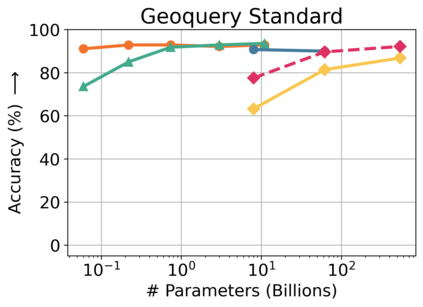

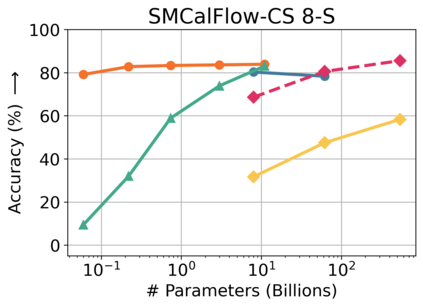

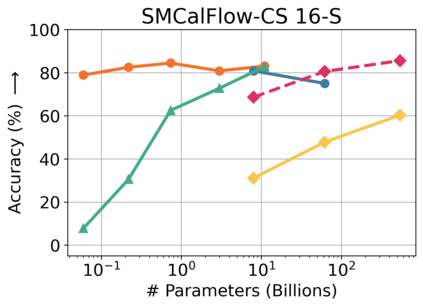

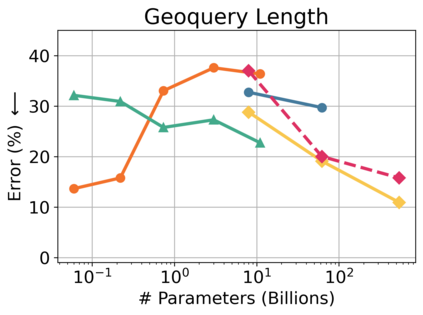

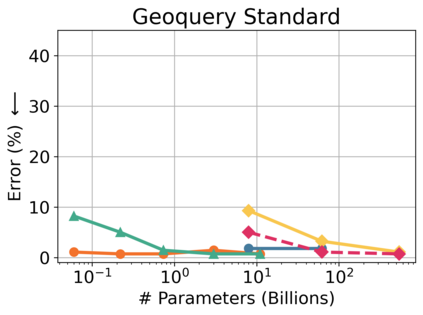

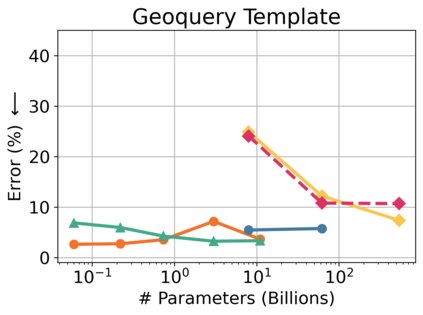

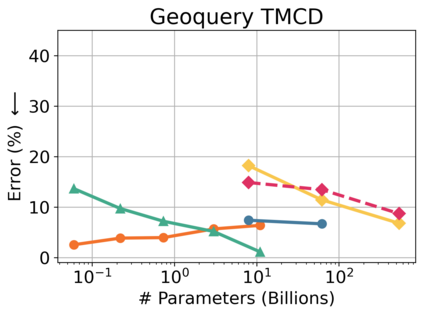

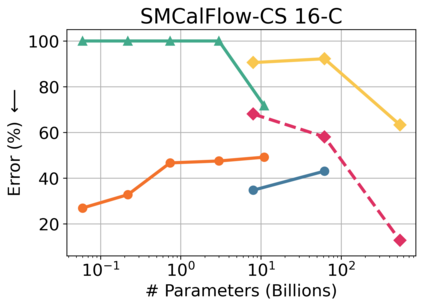

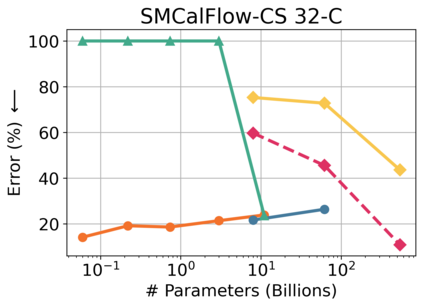

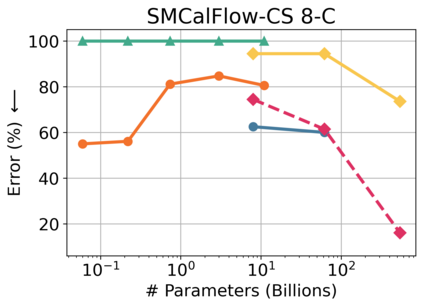

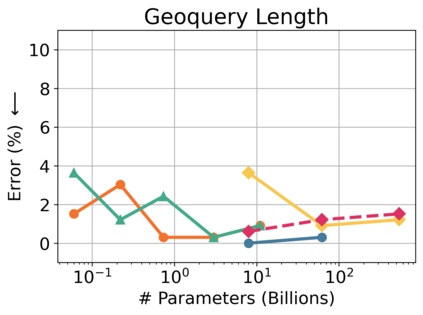

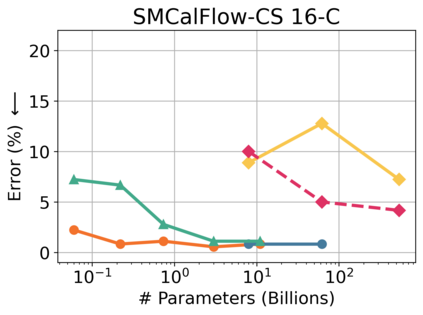

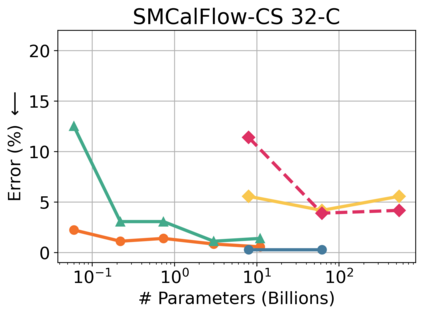

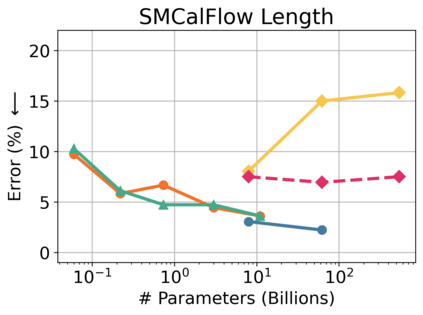

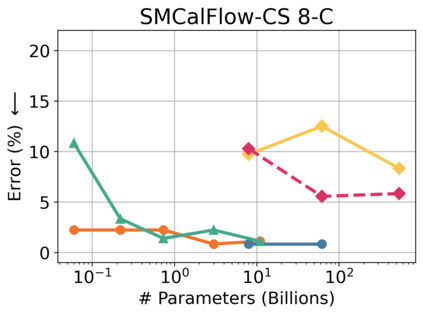

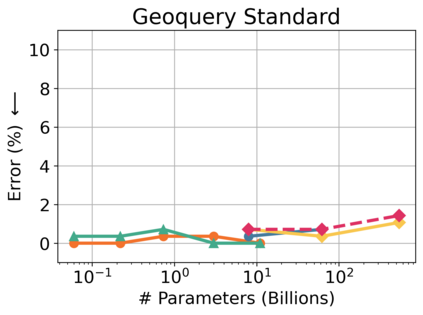

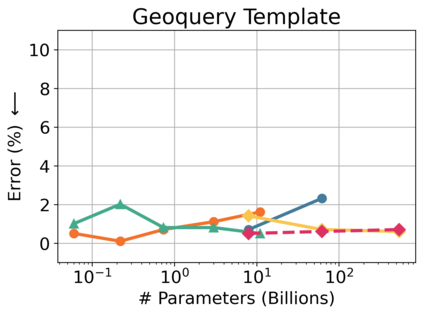

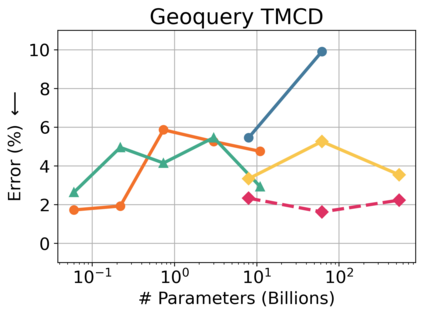

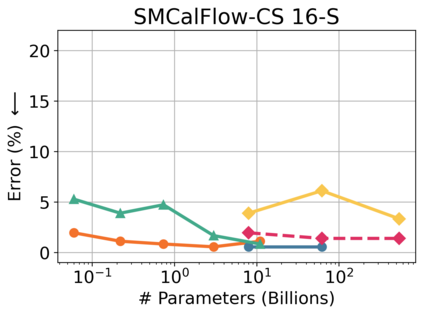

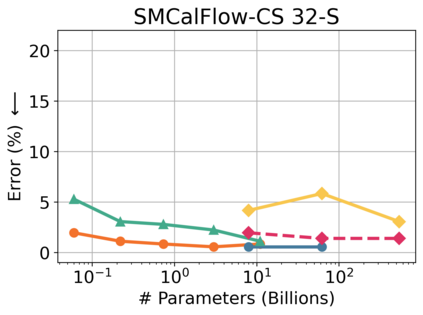

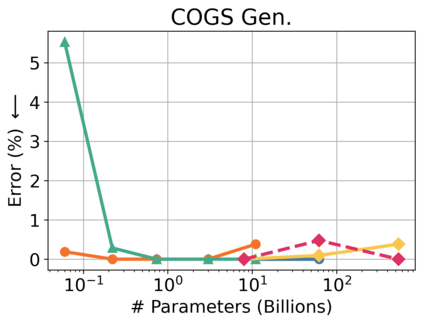

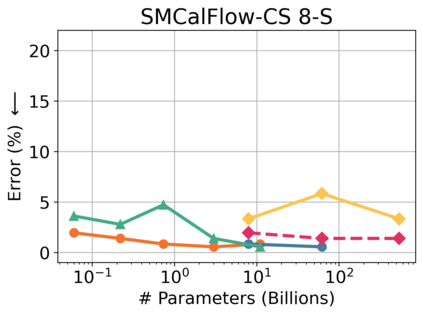

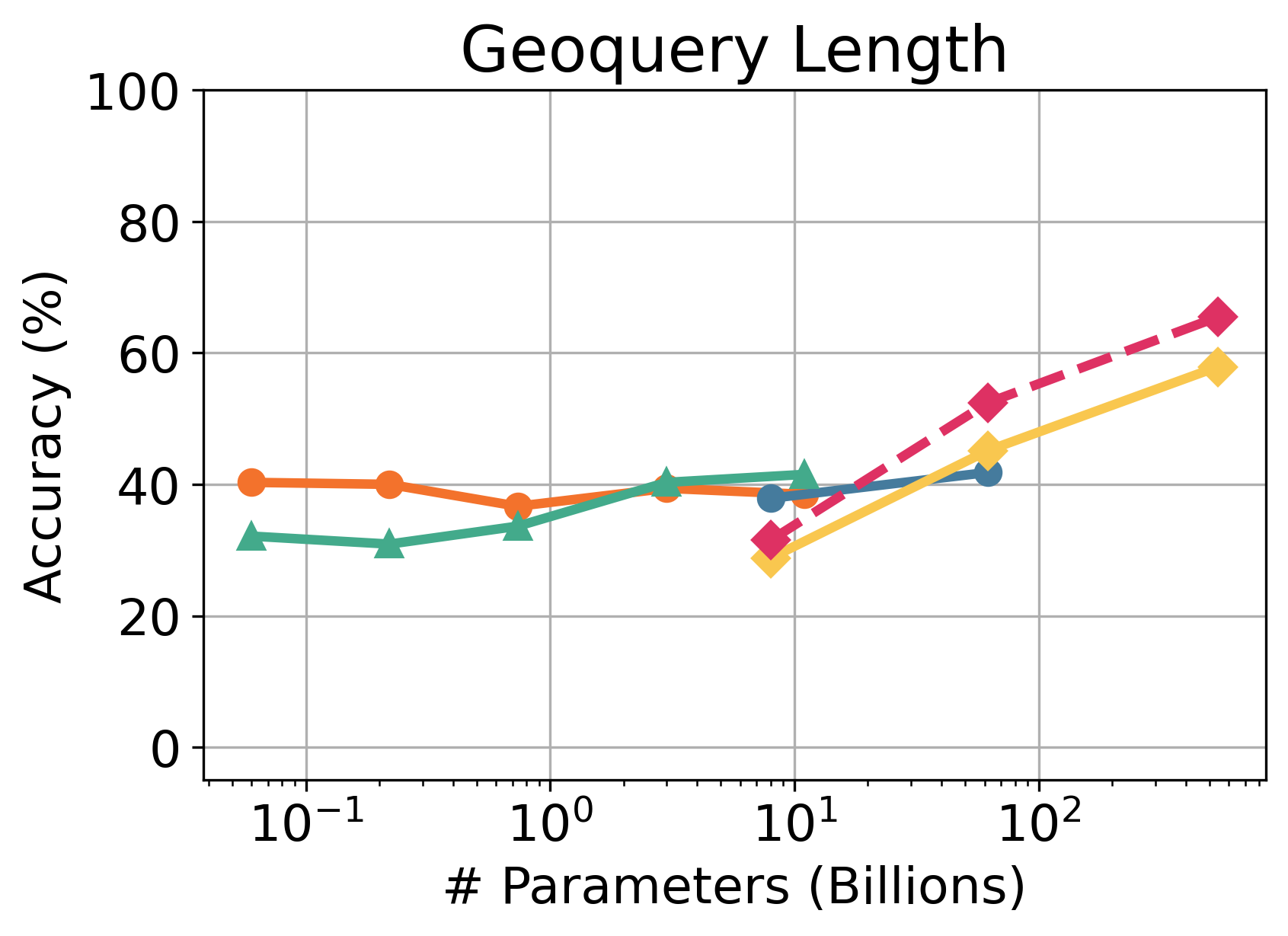

Despite their strong performance on many tasks, pre-trained language models have been shown to struggle on out-of-distribution compositional generalization. Meanwhile, recent work has shown considerable improvements on many NLP tasks from model scaling. Can scaling up model size also improve compositional generalization in semantic parsing? We evaluate encoder-decoder models up to 11B parameters and decoder-only models up to 540B parameters, and compare model scaling curves for three different methods for transfer learning: fine-tuning all parameters, prompt tuning, and in-context learning. We observe that fine-tuning generally has flat or negative scaling curves on out-of-distribution compositional generalization in semantic parsing evaluations. In-context learning has positive scaling curves, but is generally outperformed by much smaller fine-tuned models. Prompt tuning can outperform fine-tuning and exhibits a more positive scaling curve, suggesting potential for further improvement from scaling. Additionally, we identify several error trends that vary with model scale. For example, larger models are generally better at modeling the syntax of the output space, but are also more prone to certain types of overfitting. Overall, our study highlights limitations of current techniques for effectively leveraging model scale for compositional generalization, while our analysis also suggests promising directions for future work.
