Article Title

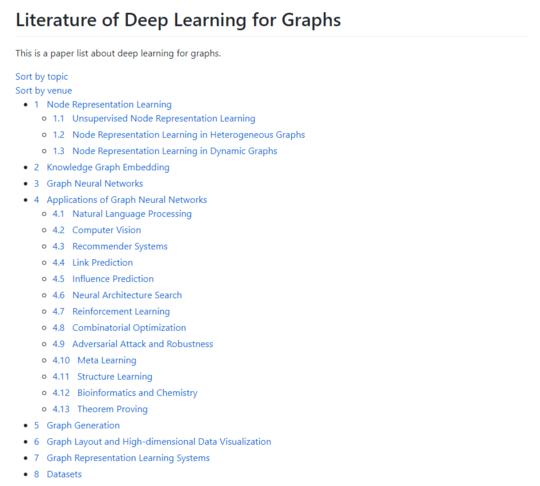

A Survey of Graph Deep Learning Research: A comprehensive collection of recent papers on graph deep learning

Article Content

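To serve the wider community of AI practitioners, the author has gathered the latest and most classic books, papers, and other materials on graph deep learning into a single collection, intended as a reference for anyone studying the field. The collection is broad in scope and rich in content.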

