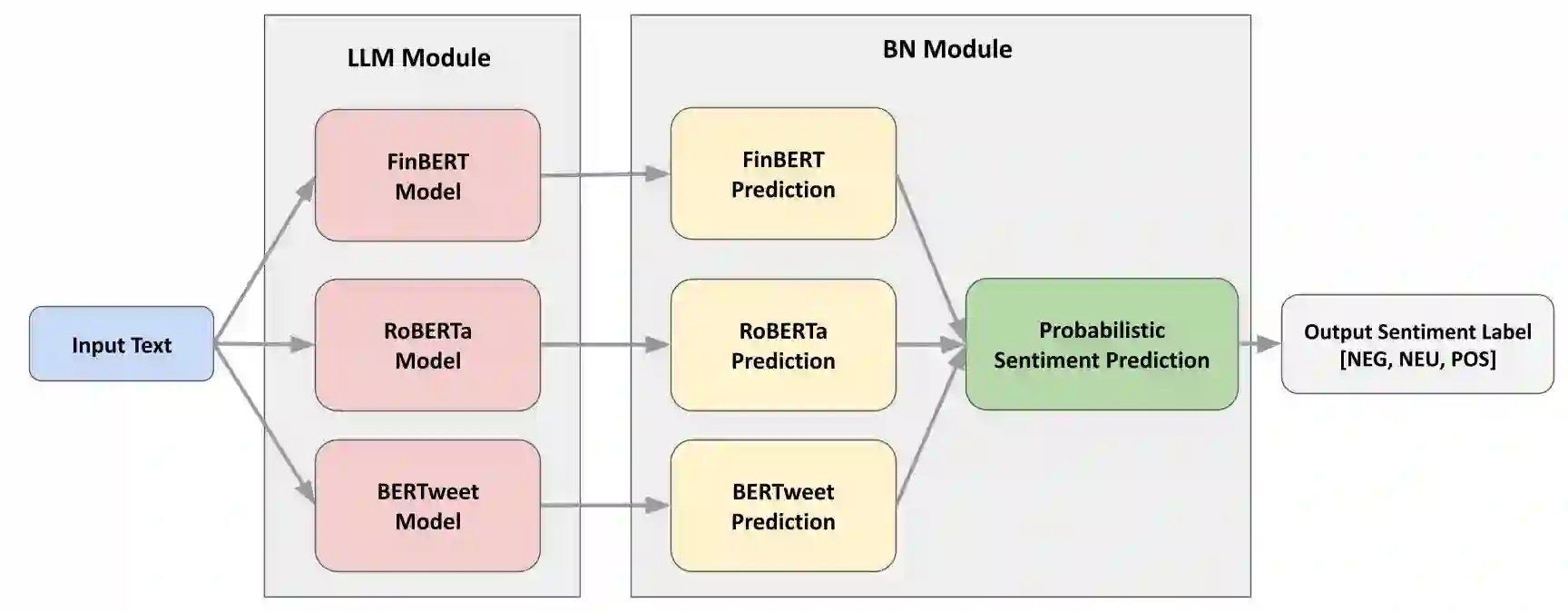

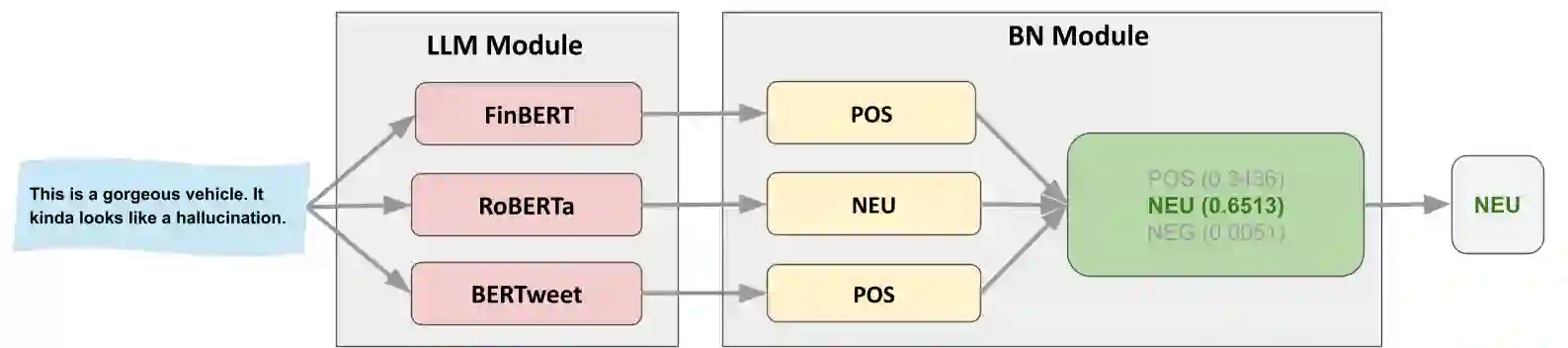

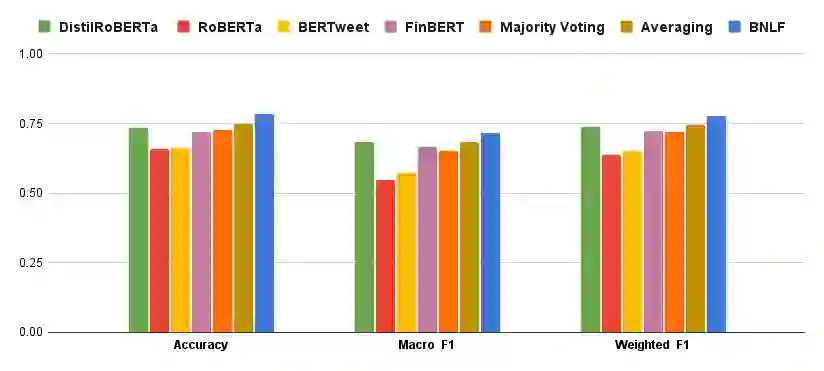

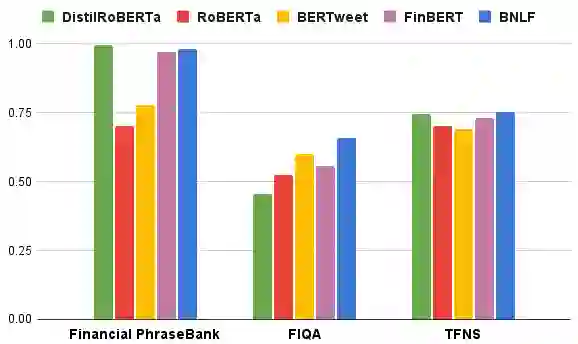

Large language models (LLMs) continue to advance, with a growing number of domain-specific variants tailored to specialised tasks. However, these models often lack transparency and explainability, can be costly to fine-tune, require substantial prompt engineering, yield inconsistent results across domains, and have a significant adverse environmental impact due to their high computational demands. To address these challenges, we propose the Bayesian network LLM fusion (BNLF) framework, which integrates the predictions of three LLMs, namely FinBERT, RoBERTa, and BERTweet, through a probabilistic mechanism for sentiment analysis. BNLF performs late fusion by modelling the sentiment predictions of the individual LLMs as probabilistic nodes within a Bayesian network. Evaluated on three human-annotated financial corpora with distinct linguistic and contextual characteristics, BNLF achieves consistent accuracy gains of about six percent over the baseline LLMs, underscoring its robustness to dataset variability and the effectiveness of probabilistic fusion for interpretable sentiment classification.
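
The abstract does not specify the network structure or how its conditional probability tables are learned, so the following is only a minimal sketch of Bayesian-network late fusion under stated assumptions, not BNLF's actual implementation. It assumes the simplest structure: a latent true-sentiment node Y with each model's predicted label as a child node (a naive-Bayes-shaped network), a three-class label set, and conditional probability tables estimated from labelled validation data with Laplace smoothing. The functions `fit_fusion` and `fuse`, the model keys, and the toy data are hypothetical illustrations, not results from the paper.

```python
import math
from collections import Counter

# Assumed three-class label set (hypothetical; the paper's label set may differ).
LABELS = ("negative", "neutral", "positive")


def fit_fusion(true_labels, model_preds, alpha=1.0):
    """Estimate P(Y) and P(M_k | Y) for each model k from labelled validation data.

    true_labels: gold sentiment labels.
    model_preds: dict mapping model name -> predicted labels (same order as gold).
    alpha: Laplace smoothing constant so unseen (label, prediction) pairs keep nonzero mass.
    """
    n = len(true_labels)
    prior_counts = Counter(true_labels)
    k = len(LABELS)
    prior = {y: (prior_counts[y] + alpha) / (n + alpha * k) for y in LABELS}
    cpts = {}
    for name, preds in model_preds.items():
        joint = Counter(zip(true_labels, preds))  # counts of (gold, predicted) pairs
        cpts[name] = {
            y: {p: (joint[(y, p)] + alpha) / (prior_counts[y] + alpha * k) for p in LABELS}
            for y in LABELS
        }
    return prior, cpts


def fuse(prior, cpts, observed):
    """Posterior P(Y | predictions): each model's prediction is an observed child of Y."""
    log_scores = {}
    for y in LABELS:
        s = math.log(prior[y])
        for name, pred in observed.items():
            s += math.log(cpts[name][y][pred])
        log_scores[y] = s
    # Normalise in log space for numerical stability.
    m = max(log_scores.values())
    unnorm = {y: math.exp(s - m) for y, s in log_scores.items()}
    z = sum(unnorm.values())
    return {y: v / z for y, v in unnorm.items()}


# Toy validation data, invented purely for illustration.
gold = ["positive", "negative", "neutral", "positive", "neutral", "negative"]
preds = {
    "finbert": ["positive", "negative", "neutral", "positive", "neutral", "negative"],
    "roberta": ["positive", "neutral", "neutral", "positive", "positive", "negative"],
    "bertweet": ["neutral", "negative", "neutral", "positive", "neutral", "positive"],
}
prior, cpts = fit_fusion(gold, preds)
posterior = fuse(prior, cpts, {"finbert": "positive", "roberta": "neutral", "bertweet": "positive"})
label = max(posterior, key=posterior.get)
print(label)  # → positive
```

In this sketch the disagreement between models is resolved by how reliable each one was on the validation data: the model whose predictions track the gold labels most closely gets the sharpest conditional table and therefore the most weight. The learned tables can be inspected directly, which is one way a Bayesian-network fusion can support the interpretability the abstract emphasises.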