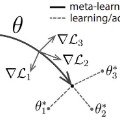

Bilevel optimization has become a powerful framework in various machine learning applications including meta-learning, hyperparameter optimization, and network architecture search. There are generally two classes of bilevel optimization formulations for machine learning: 1) problem-based bilevel optimization, whose inner-level problem is formulated as finding a minimizer of a given loss function; and 2) algorithm-based bilevel optimization, whose inner-level solution is the output of a fixed algorithm. For the first class, two popular types of gradient-based algorithms have been proposed, which estimate the hypergradient via approximate implicit differentiation (AID) and iterative differentiation (ITD), respectively. Algorithms for the second class include the popular model-agnostic meta-learning (MAML) and almost no inner loop (ANIL). However, the convergence rate and fundamental limitations of bilevel optimization algorithms have not been well explored. This thesis provides a comprehensive convergence rate analysis for bilevel algorithms in the aforementioned two classes. We further propose principled algorithm designs for bilevel optimization with higher efficiency and scalability. For the problem-based formulation, we provide a convergence rate analysis for AID- and ITD-based bilevel algorithms. We then develop accelerated bilevel algorithms, for which we provide a sharper convergence analysis under relaxed assumptions. We also provide the first lower bounds for bilevel optimization, and establish optimality by providing matching upper bounds under certain conditions. We finally propose new stochastic bilevel optimization algorithms with lower complexity and higher efficiency in practice. For the algorithm-based formulation, we develop a theoretical convergence analysis for general multi-step MAML and ANIL, and characterize the impact of parameter selections and loss geometries on their complexities.
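To make the difference between the two hypergradient estimators concrete, here is a minimal sketch on a toy scalar problem. It is not taken from the thesis: the outer loss `f`, the inner loss `g`, the constants `C` and `ALPHA`, and all function names are illustrative assumptions, chosen so that the exact hypergradient is available in closed form for comparison. ITD differentiates through the inner gradient-descent iterates, while AID plugs the approximate inner solution into the implicit-function formula.

```python
# Toy scalar bilevel problem (every function and constant here is an assumption):
#   outer loss: f(x, y) = 0.5 * (y - 1)^2 + 0.05 * x^2
#   inner loss: g(x, y) = 0.5 * C * y^2 - x * y, so y*(x) = x / C
C = 2.0       # inner curvature, d^2 g / dy^2
ALPHA = 0.3   # inner step size; needs 0 < ALPHA < 2 / C to converge


def grad_f_x(x, y):
    return 0.1 * x


def grad_f_y(x, y):
    return y - 1.0


def grad_g_y(x, y):
    return C * y - x


def inner_gd(x, steps, y0=0.0):
    """Run inner gradient descent and track dy_k/dx alongside y_k."""
    y, dy_dx = y0, 0.0
    for _ in range(steps):
        # Derivative of the update y <- y - ALPHA * (C * y - x) with respect to x.
        dy_dx = (1.0 - ALPHA * C) * dy_dx + ALPHA
        y = y - ALPHA * grad_g_y(x, y)
    return y, dy_dx


def hypergrad_itd(x, steps):
    """ITD: chain rule through the unrolled inner iterates."""
    y, dy_dx = inner_gd(x, steps)
    return grad_f_x(x, y) + dy_dx * grad_f_y(x, y)


def hypergrad_aid(x, steps):
    """AID: implicit formula evaluated at the approximate inner solution."""
    y, _ = inner_gd(x, steps)
    v = grad_f_y(x, y) / C    # solve (d^2 g / dy^2) v = df/dy; trivial for a scalar
    cross = -1.0              # d^2 g / (dx dy) for this particular g
    return grad_f_x(x, y) - cross * v


def hypergrad_exact(x):
    """Closed form obtained by substituting y*(x) = x / C into f."""
    return 0.1 * x + (x / C - 1.0) / C


if __name__ == "__main__":
    x = 1.0
    for k in (1, 5, 50):
        print(k, hypergrad_itd(x, k), hypergrad_aid(x, k), hypergrad_exact(x))
```

With few inner steps the two estimators generally disagree with each other and with the exact value; both approach it as the number of inner steps grows. In higher dimensions the scalar division in `hypergrad_aid` would be replaced by an approximate linear solve (for example, a few conjugate-gradient iterations), which is where the "approximate" in AID comes from.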
