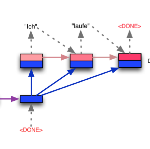

Dialogue meaning representation formulates the semantics of natural language utterances in their conversational context in an explicit, machine-readable form. Previous work typically follows the intent-slot framework, which is easy to annotate but scales poorly to complex linguistic expressions. A line of work alleviates this representation issue by introducing hierarchical structures, but these structures still struggle to express complex compositional semantics such as negation and coreference. We propose Dialogue Meaning Representation (DMR), a flexible and easily extendable representation for task-oriented dialogue. Our representation consists of a set of nodes and edges with an inheritance hierarchy, which together represent rich semantics covering both compositional semantics and task-specific concepts. We use DMR to annotate DMR-FastFood, a multi-turn dialogue dataset with more than 70k utterances. We propose two evaluation tasks to evaluate different machine-learning-based dialogue models, and further propose a novel coreference resolution model, GNNCoref, for the graph-based coreference resolution task. Experiments show that DMR can be parsed well with a pretrained Seq2Seq model, and that GNNCoref outperforms the baseline models by a large margin.
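To make the "nodes and edges with an inheritance hierarchy" idea more concrete, here is a minimal Python sketch of a typed semantic graph. Everything in it is a hypothetical illustration, not the actual DMR schema or annotation format: the type names (`Order`, `Burger`, `Ingredient`), the edge labels (`item`, `modifier`, `coref`), the `negated` attribute, and the `Graph`/`is_a`/`resolve` helpers are all assumptions. It only shows how type inheritance, a negated modifier, and a cross-turn coreference link can coexist in one graph.

```python
from dataclasses import dataclass, field

# Hypothetical type hierarchy: each node type maps to its parent type.
# These labels are illustrative only, not the actual DMR ontology.
TYPE_PARENT = {
    "Entity": None,
    "Food": "Entity",
    "Burger": "Food",
    "Ingredient": "Food",
    "Action": None,
    "Order": "Action",
}


def is_a(node_type: str, ancestor: str) -> bool:
    """Return True if node_type equals ancestor or inherits from it."""
    current = node_type
    while current is not None:
        if current == ancestor:
            return True
        current = TYPE_PARENT[current]
    return False


@dataclass
class Node:
    node_id: str
    node_type: str
    attributes: dict = field(default_factory=dict)


@dataclass
class Graph:
    nodes: dict = field(default_factory=dict)
    edges: list = field(default_factory=list)  # (source_id, label, target_id)

    def add_node(self, node: Node) -> None:
        # Only types declared in the hierarchy are allowed.
        if node.node_type not in TYPE_PARENT:
            raise ValueError(f"unknown type: {node.node_type}")
        self.nodes[node.node_id] = node

    def add_edge(self, source: str, label: str, target: str) -> None:
        self.edges.append((source, label, target))

    def targets(self, source: str, label: str) -> list:
        return [t for s, l, t in self.edges if s == source and l == label]

    def resolve(self, node_id: str) -> str:
        """Follow hypothetical 'coref' edges back to the earliest antecedent."""
        seen = set()
        while True:
            antecedents = self.targets(node_id, "coref")
            if not antecedents or node_id in seen:
                return node_id
            seen.add(node_id)
            node_id = antecedents[0]


g = Graph()
# Turn 1: "One burger, please."
g.add_node(Node("o1", "Order"))
g.add_node(Node("b1", "Burger", {"quantity": 1}))
g.add_edge("o1", "item", "b1")
# Turn 2: "No onions on it." The pronoun "it" refers back to b1,
# and the onion modifier carries a negation flag.
g.add_node(Node("b2", "Burger"))
g.add_node(Node("x1", "Ingredient", {"name": "onion", "negated": True}))
g.add_edge("b2", "modifier", "x1")
g.add_edge("b2", "coref", "b1")

print(is_a("Burger", "Entity"), g.resolve("b2"))
```

Because `is_a` walks the parent chain, a query for any `Food` node also matches `Burger` and `Ingredient` nodes. This is the kind of reuse an inheritance hierarchy offers when a representation is extended with new task-specific concepts.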
