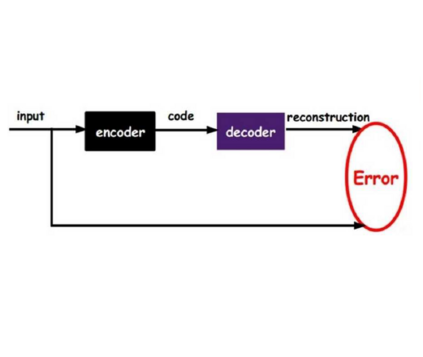

Machine learning on tree data has mostly focused on trees as input. Far less research has addressed trees as output, as in chemical molecule optimization or hint generation for intelligent tutoring systems. In this work, we propose recursive tree grammar autoencoders (RTG-AEs), which encode trees via a bottom-up parser and decode trees via a tree grammar, both controlled by a neural network trained to minimize the variational autoencoder loss. The resulting encoding and decoding functions can then be employed in subsequent tasks, such as optimization and time series prediction. Our key message is that combining grammar knowledge with recursive processing improves performance compared to using only one of the two, that is, grammar knowledge with sequential processing or recursive (non-sequential) processing without a grammar. In particular, we show experimentally that our model improves autoencoding error, training time, and optimization score on four benchmark datasets compared to baselines from the literature.
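To make the encode/decode split more concrete, the following is a minimal sketch of the two grammar-side components only: a bottom-up parser that maps a tree to the sequence of grammar rules that produce it, and a top-down generator that rebuilds the tree from such a sequence. It is not the RTG-AE implementation. The toy Boolean-formula grammar, the names `Tree`, `RULES`, `parse`, and `generate`, and the use of a plain rule-index list in place of a learned latent vector are all assumptions made for illustration; in the model described above, a neural network would compute the encoding along the parse and choose the rules during decoding.

```python
from dataclasses import dataclass, field


@dataclass
class Tree:
    """A labeled, ordered tree (hypothetical helper type for this sketch)."""
    label: str
    children: list = field(default_factory=list)


# Hypothetical toy regular tree grammar for Boolean formulas.
# Each rule is (left-hand nonterminal, symbol, child nonterminals).
RULES = [
    ("S", "and", ("S", "S")),
    ("S", "or", ("S", "S")),
    ("S", "not", ("S",)),
    ("S", "x", ()),
    ("S", "y", ()),
]


def parse(tree):
    """Bottom-up parse: children are parsed first, then the matching rule
    for the current node is looked up. Returns the nonterminal assigned to
    the node and the rule indices of its subtree in pre-order."""
    child_results = [parse(child) for child in tree.children]
    child_nonterminals = tuple(nt for nt, _ in child_results)
    for index, (lhs, symbol, rhs) in enumerate(RULES):
        if symbol == tree.label and rhs == child_nonterminals:
            sequence = [index]
            for _, child_sequence in child_results:
                sequence.extend(child_sequence)
            return lhs, sequence
    raise ValueError(f"no rule matches node {tree.label!r}")


def generate(rule_sequence, start="S"):
    """Top-down generation: consume rule indices in pre-order and expand
    each nonterminal with the rule chosen for it."""
    remaining = iter(rule_sequence)

    def expand(nonterminal):
        lhs, symbol, rhs = RULES[next(remaining)]
        if lhs != nonterminal:
            raise ValueError(f"rule for {lhs!r} cannot expand {nonterminal!r}")
        return Tree(symbol, [expand(nt) for nt in rhs])

    return expand(start)


# Round trip on the formula and(x, not(y)).
formula = Tree("and", [Tree("x"), Tree("not", [Tree("y")])])
nonterminal, rule_sequence = parse(formula)
rebuilt = generate(rule_sequence)
```

Because every tree produced by `generate` is built from grammar rules, the output is syntactically valid by construction, which is the property that motivates decoding via a grammar rather than emitting node labels freely.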
