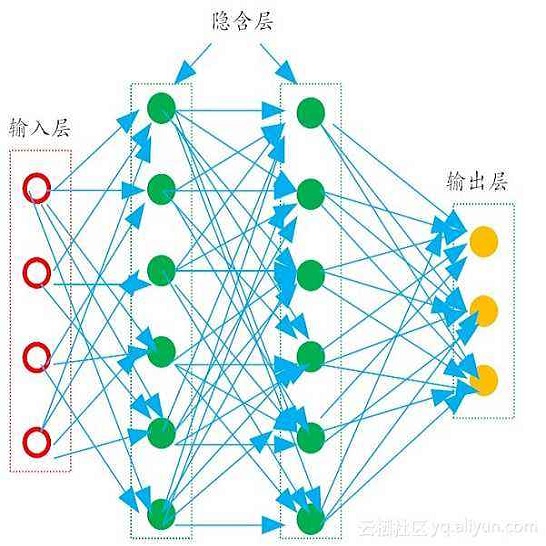

Student engagement is a key construct for learning and teaching. While most of the literature has explored student engagement analysis in computer-based settings, this paper extends that focus to classroom instruction. To examine students' visual engagement in the classroom, we conducted a study using audiovisual recordings of classes at a secondary school over a period of one and a half months, acquired continuous engagement labels per student (N=15) in repeated sessions, and explored computer vision methods to classify engagement levels from faces in the classroom. We trained deep embeddings for attentional and emotional features: Attention-Net for head pose estimation and Affect-Net for facial expression recognition. We additionally trained different engagement classifiers (Support Vector Machines, Random Forest, Multilayer Perceptron, and Long Short-Term Memory) on both feature types. The best-performing engagement classifiers achieved AUCs of .620 and .720 in Grades 8 and 12, respectively. We further investigated fusion strategies and found that score-level fusion either improves on the engagement classifiers or is on par with the best-performing modality. We also investigated the effect of personalization and found that using only 60 seconds of person-specific data, selected by the margin uncertainty of the base classifier, yielded an average AUC improvement of .084.
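The two ideas named at the end of the abstract, score-level fusion and margin-uncertainty selection, can be illustrated with a minimal sketch. This is not the authors' implementation: the abstract does not state the fusion rule or the exact margin definition, so the code assumes a weighted average of per-modality class probabilities and a margin equal to the gap between the two highest class probabilities. The function names and the weight `w` are hypothetical.

```python
import numpy as np

def score_level_fusion(p_attention, p_affect, w=0.5):
    """Fuse two classifiers at score level.

    Assumption: fusion is a weighted average of the class probabilities
    produced by the attention-based and affect-based classifiers.
    """
    p_attention = np.asarray(p_attention, dtype=float)
    p_affect = np.asarray(p_affect, dtype=float)
    return w * p_attention + (1.0 - w) * p_affect

def margin_uncertainty(probs):
    """Gap between the two highest class probabilities per sample.

    A small margin means the classifier is unsure about that sample.
    """
    probs = np.asarray(probs, dtype=float)
    top2 = np.sort(probs, axis=1)[:, -2:]  # two largest values, ascending
    return top2[:, 1] - top2[:, 0]

def select_most_uncertain(probs, k):
    """Indices of the k samples with the smallest margin (most uncertain)."""
    return np.argsort(margin_uncertainty(probs), kind="stable")[:k]

# Toy example: three samples, two engagement classes.
p_att = [[0.9, 0.1], [0.6, 0.4], [0.2, 0.8]]
p_aff = [[0.7, 0.3], [0.4, 0.6], [0.4, 0.6]]
fused = score_level_fusion(p_att, p_aff)
picked = select_most_uncertain(fused, k=2)
```

In a personalization setting, the selected samples would be the ones labeled for a new student and used to adapt the base classifier. How samples map to the 60 seconds of data mentioned above is not specified here and is left open in this sketch.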
