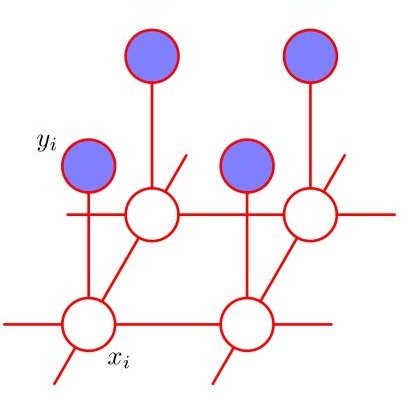

Non-stationarity is a thorny issue in multi-agent reinforcement learning, caused by the agents' policies changing during the learning procedure. Existing approaches to this problem, such as centralized critic and decentralized actor (CCDA), population-based self-play, and modeling of other agents, are limited in effectiveness and scalability. In this paper, we introduce a $\delta$-stationarity measurement to explicitly model the stationarity of a policy sequence, which is theoretically proved to be proportional to the joint policy divergence. However, simple policy factorizations such as the mean-field approximation lead to a larger policy divergence, which can be viewed as a trust region decomposition dilemma. We model the joint policy as a general Markov random field and propose a trust region decomposition network based on message passing to estimate the joint policy divergence more accurately. The Multi-Agent Mirror descent policy algorithm with Trust region decomposition, called MAMT, is designed to satisfy $\delta$-stationarity. MAMT adaptively adjusts the trust regions of the local policies in an end-to-end manner, thereby approximately constraining the divergence of the joint policy and alleviating the non-stationarity problem. Our method brings noticeable and stable performance improvements over baselines in coordination tasks of different complexity.
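As a toy illustration of why the choice of factorization matters for the trust region, consider the simplest case in which the joint policy at a single state is a product of independent local policies. The joint KL divergence is then exactly the sum of the local KL divergences, so per-agent trust regions $\delta_i$ only bound the joint divergence by $\sum_i \delta_i$. The sketch below checks this numerically. It is not the MAMT algorithm or its message-passing network; the policies, the helper names `kl` and `joint`, and the three-agent setup are all hypothetical.

```python
import itertools
import math


def kl(p, q):
    """KL(p || q) for two discrete distributions given as equal-length lists."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def joint(policies):
    """Joint action distribution of independent (fully factorized) local policies.

    Each joint action's probability is the product of the local probabilities.
    """
    return [math.prod(probs) for probs in itertools.product(*policies)]


# Hypothetical old and new local policies at one state:
# three agents, two actions each.
old = [[0.5, 0.5], [0.6, 0.4], [0.3, 0.7]]
new = [[0.6, 0.4], [0.5, 0.5], [0.4, 0.6]]

# Divergence of each agent's own policy update.
local_kls = [kl(n, o) for n, o in zip(new, old)]

# Divergence of the joint policy update under the independence assumption.
joint_kl = kl(joint(new), joint(old))

print("local KLs:", local_kls)
print("sum of local KLs:", sum(local_kls))
print("joint KL:", joint_kl)
```

Under this independence assumption the last two printed values agree up to floating-point error. When agents' policies are coupled, as in the Markov random field model described above, the joint divergence no longer decomposes this way, which is the situation the trust region decomposition network is meant to handle.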
