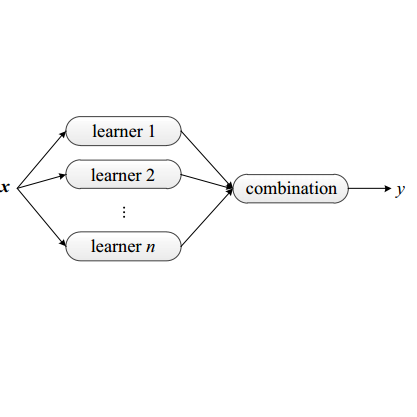

One of the most effective approaches to channel pruning is to prune according to the importance of each neuron. However, measuring the importance of each neuron is an NP-hard problem. Previous works have proposed pruning based on the statistics of a single layer or of multiple successive layers of neurons. These methods cannot eliminate the effect that different data have on the reconstruction error, and, to date, no work has proven that the absolute values of the parameters can be used directly to judge the importance of the weights. A more reasonable approach is to eliminate the differences between data batches so that the influence of each weight can be measured accurately. In this paper, we propose using ensemble learning to train models on different batches of data, and using the influence function (a classic technique from robust statistics) to trace the model's predictions back through the learning algorithm to the gradients of its training parameters. This lets us determine each parameter's responsibility in the prediction process, which we call its "influence". In addition, we prove theoretically that back-propagation in a deep network is a first-order Taylor approximation of the influence function of the weights. We perform extensive experiments showing that pruning based on the influence function, combined with the idea of ensemble learning, is much more effective than focusing only on error reconstruction. Experiments on CIFAR show that influence pruning achieves state-of-the-art results.
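The abstract contrasts raw weight magnitude with a gradient-based notion of importance and links back-propagation to a first-order Taylor approximation. The sketch below illustrates only that first-order idea. It is not the authors' influence-function or ensemble procedure. Zeroing the weights $w_c$ of output channel $c$ changes the loss by roughly $-g_c^\top w_c$, where $g_c = \partial L / \partial w_c$, so the code scores each channel by $|g_c^\top w_c|$. It then averages that score over several data batches, which is our own simple stand-in for reducing batch-to-batch variation. The toy linear layer, the squared-error loss, and the function names (`taylor_channel_importance`, `batch_averaged_importance`, `channels_to_prune`) are all hypothetical.

```python
import numpy as np


def taylor_channel_importance(W, X, T):
    """First-order Taylor importance of each output channel of a linear layer.

    Hypothetical setup for illustration: predictions Y = X @ W.T and loss
    L = 0.5 * mean_n ||y_n - t_n||^2. Zeroing row c of W changes L by about
    -g_c . w_c, so |g_c . w_c| is used as that channel's importance.
    """
    Y = X @ W.T                          # predictions, shape (N, C)
    grad = (Y - T).T @ X / X.shape[0]    # dL/dW, shape (C, D)
    return np.abs(np.sum(W * grad, axis=1))


def batch_averaged_importance(W, batches):
    """Average the per-channel score over several (X, T) batches.

    Assumption: averaging per-batch scores is only a simple stand-in for
    removing batch-to-batch differences, not the paper's ensemble method.
    """
    scores = [taylor_channel_importance(W, X, T) for X, T in batches]
    return np.mean(scores, axis=0)


def channels_to_prune(scores, n_prune):
    """Indices of the n_prune channels with the lowest importance."""
    return np.argsort(scores)[:n_prune]


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    C, D = 4, 8
    W = rng.normal(size=(C, D))
    W[2] = 0.0                           # a "dead" channel: its score is exactly 0
    W_true = rng.normal(size=(C, D))     # hypothetical target mapping
    batches = []
    for _ in range(5):
        X = rng.normal(size=(32, D))
        batches.append((X, X @ W_true.T))
    scores = batch_averaged_importance(W, batches)
    # The dead channel (index 2) has score 0, so it is ranked first.
    print(channels_to_prune(scores, 1))
```

Taking the absolute value per batch before averaging keeps the score informative even where the full-batch gradient is close to zero, because individual batch gradients generally are not.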

翻译:以每个神经元的重要性为基础, 测量每个神经元的重要性是一个NP- 硬问题。 先前的工作建议通过考虑单层或多个神经元层的统计来修补。 这些工程无法消除不同数据对重建错误模型的影响, 而目前, 没有工作可以证明参数的绝对值可以直接用作判断重量重要性的基础。 更合理的方法是消除准确衡量影响重量的批量数据之间的差异。 在本文中, 我们提议使用混合学习来培训不同数据批量的模型, 并使用影响功能( 一种来自可靠统计数据的经典技术) 来学习算法来跟踪模型的预测并返回其培训参数梯度, 这样我们就可以确定在预测过程中对每个参数的责任, 我们称之为“ 影响 ” 。 此外, 我们理论上证明深层网络的反调是初等级的Taylor对影响力的近似值, 并且使用影响力函数( 从强的典型技术) 来学习模型的精确度。 我们用模型的精确性功能来测试, 将显示以更精确的精确度为基准。