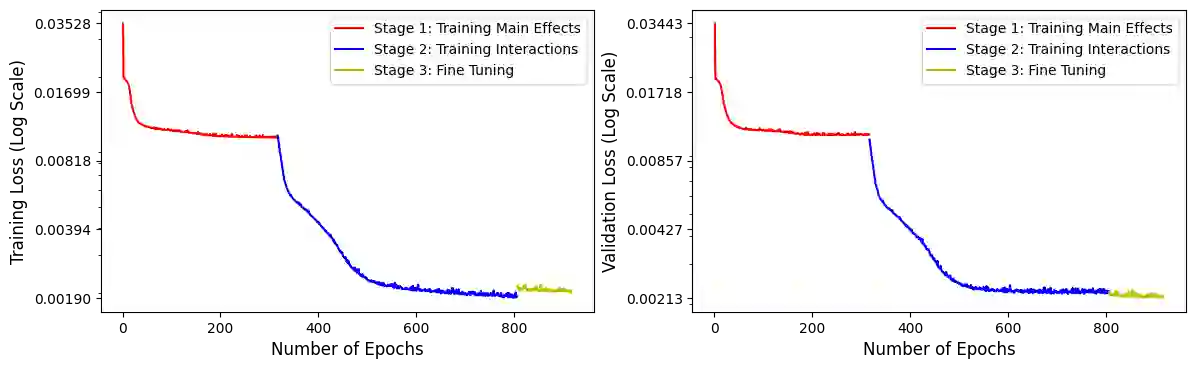

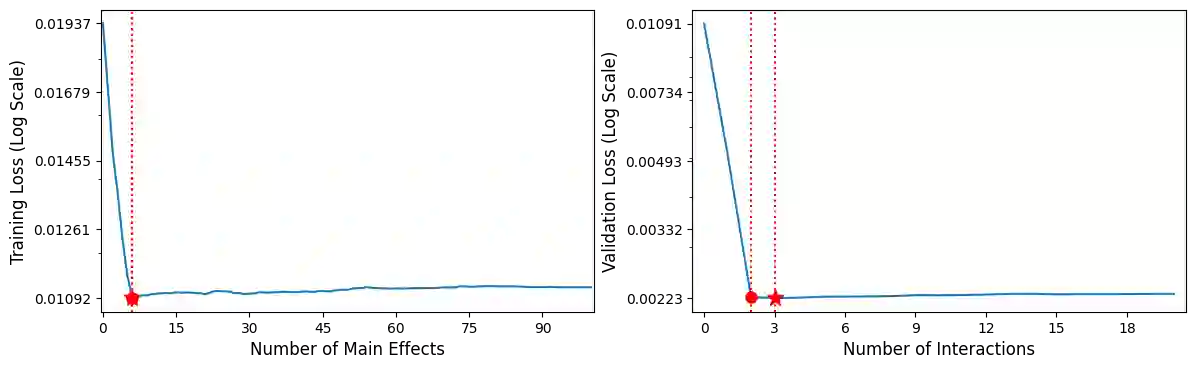

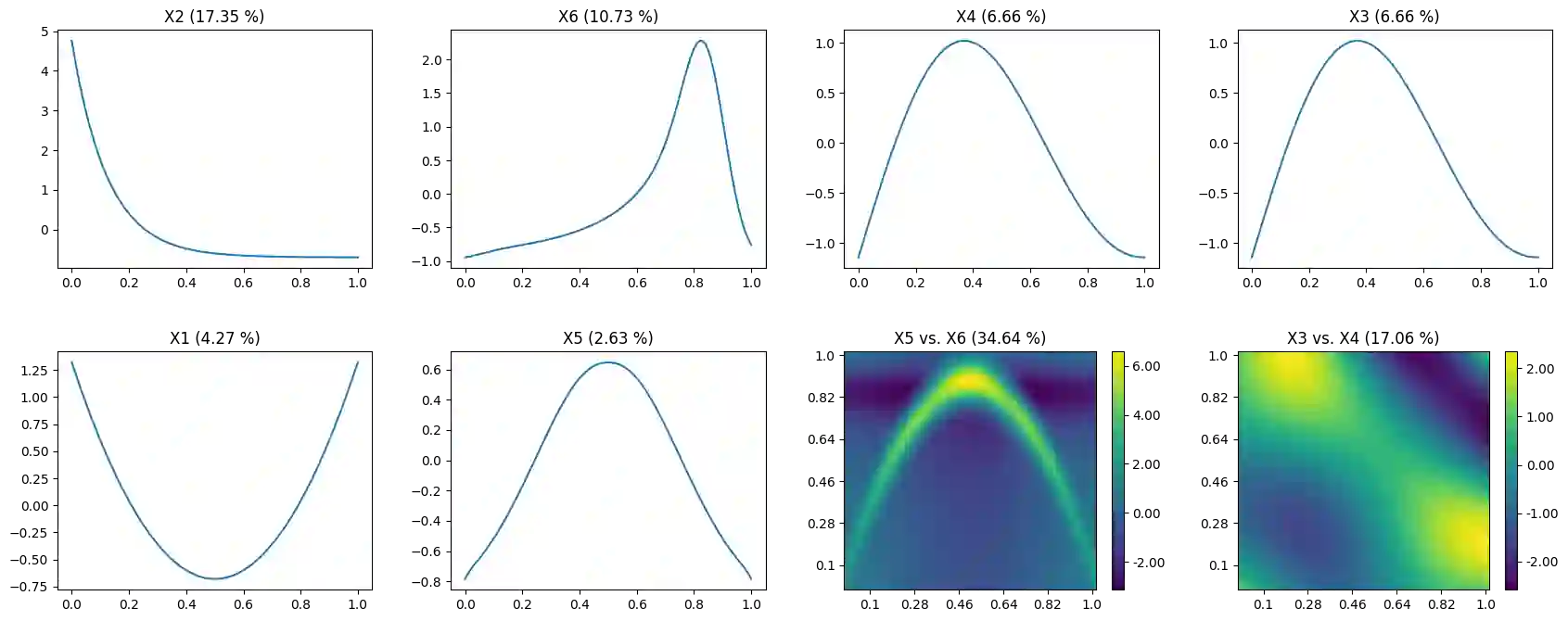

The lack of interpretability is an inevitable problem when using neural network models in real applications. In this paper, an explainable neural network based on generalized additive models with structured interactions (GAMI-Net) is proposed to pursue a good balance between prediction accuracy and model interpretability. GAMI-Net is a disentangled feedforward network with multiple additive subnetworks; each subnetwork consists of multiple hidden layers and is designed to capture one main effect or one pairwise interaction. Three interpretability aspects are further considered: a) sparsity, to select the most significant effects for a parsimonious representation; b) heredity, so that a pairwise interaction can be included only when at least one of its parent main effects exists; and c) marginal clarity, to make main effects and pairwise interactions mutually distinguishable. An adaptive training algorithm is developed, in which main effects are trained first and pairwise interactions are then fitted to the residuals. Numerical experiments on both synthetic functions and real-world datasets show that the proposed model offers superior interpretability while maintaining competitive prediction accuracy in comparison to the explainable boosting machine and other classic machine learning models.
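The additive structure and the heredity constraint described in the abstract can be illustrated with a small sketch. This is not the authors' implementation: the function names `heredity_candidates` and `gami_predict` are hypothetical, and plain Python callables stand in for the trained subnetworks. It assumes only what the abstract states, namely that the prediction is a sum of main effects and pairwise interactions, and that an interaction is eligible only if at least one of its parent main effects was kept.

```python
from itertools import combinations


def heredity_candidates(features, active_main_effects):
    """Return the feature pairs allowed under the heredity constraint.

    A pair is kept only if at least one of its two parent features
    has a main effect that survived selection.
    """
    active = set(active_main_effects)
    return [
        (a, b)
        for a, b in combinations(features, 2)
        if a in active or b in active
    ]


def gami_predict(x, intercept, main_effects, interactions):
    """Additive prediction: intercept + main effects + pairwise interactions.

    `main_effects` maps a feature name to a one-argument callable and
    `interactions` maps a feature pair to a two-argument callable.
    Both are stand-ins (assumptions) for trained subnetworks.
    """
    total = intercept
    for j, h in main_effects.items():
        total += h(x[j])
    for (j, k), f in interactions.items():
        total += f(x[j], x[k])
    return total


# Hypothetical example: four features, two main effects kept after pruning.
features = ["x1", "x2", "x3", "x4"]
active = ["x1", "x3"]
pairs = heredity_candidates(features, active)

# Toy effect functions chosen only for illustration.
main_effects = {"x1": lambda v: 2 * v, "x3": lambda v: v * v}
interactions = {("x1", "x3"): lambda a, b: a * b}
x = {"x1": 1.0, "x2": 0.0, "x3": 2.0, "x4": 0.0}
prediction = gami_predict(x, 0.5, main_effects, interactions)
```

Here the pair `("x2", "x4")` is excluded because neither parent has an active main effect. Keeping each effect as a separate callable mirrors the disentangled design: every term can be inspected or plotted on its own.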