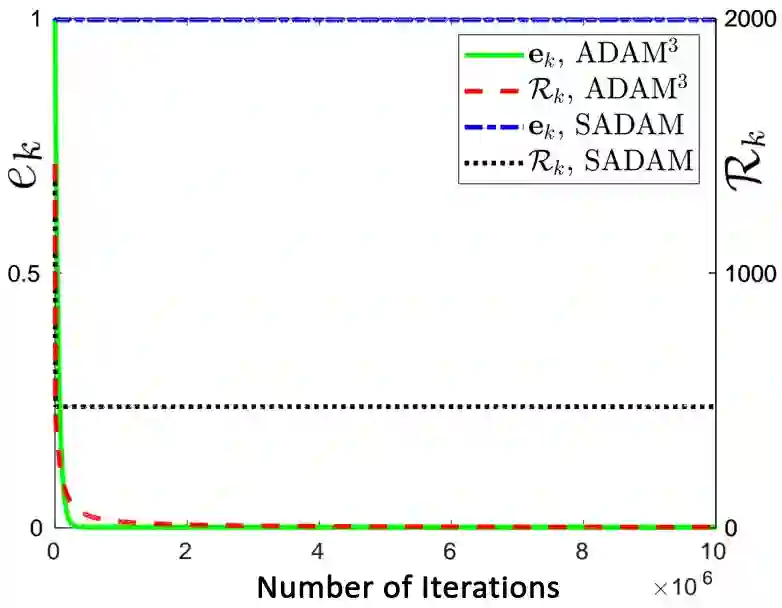

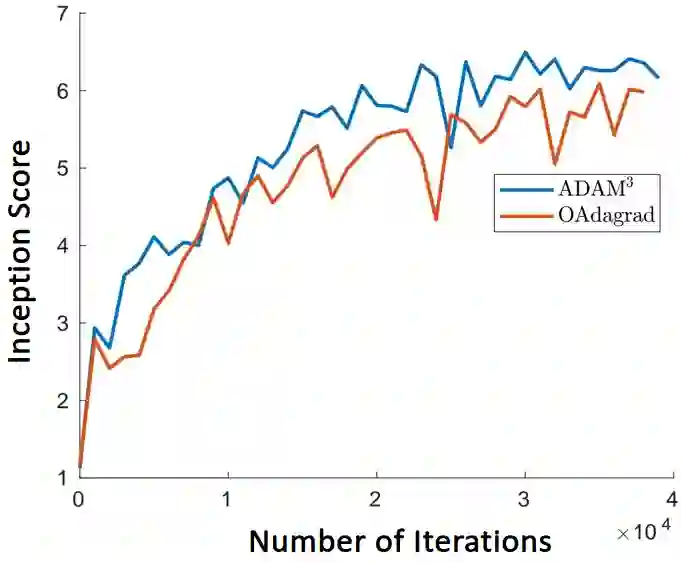

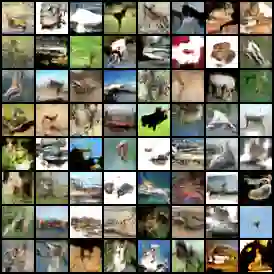

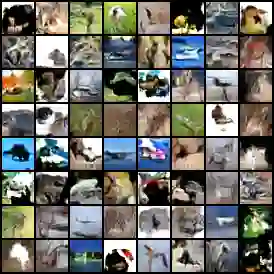

Adaptive momentum methods have recently attracted considerable attention for training deep neural networks. They use an exponential moving average of past gradients of the objective function to update both search directions and learning rates. However, these methods are not suited to the min-max optimization problems that arise in training generative adversarial networks. In this paper, we propose an adaptive momentum min-max algorithm that generalizes adaptive momentum methods to the non-convex min-max regime. Further, we establish non-asymptotic rates of convergence for the proposed algorithm on a reasonably broad class of non-convex min-max optimization problems. Experimental results illustrate its superior performance relative to benchmark methods for solving such problems.
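
To make the ingredients above concrete, the following is a minimal sketch of a standard Adam-style update (exponential moving averages of the gradient and of its square) applied naively to a toy min-max problem, with descent on `x` and ascent on `y`. It is not the algorithm proposed in the paper, whose details are not given in this abstract. The toy objective `f(x, y) = 0.5*x**2 + x*y - 0.5*y**2`, the helper names `adam_step`, `new_state`, and `solve_toy_minmax`, and all hyperparameter values are assumptions chosen for illustration. The toy objective is convex in `x` and concave in `y`, which is a far easier setting than the non-convex regime the abstract targets.

```python
import math


def new_state():
    """Fresh optimizer state for one scalar parameter (hypothetical helper)."""
    return {"t": 0, "m": 0.0, "v": 0.0}


def adam_step(param, grad, state, lr=0.01, beta1=0.9, beta2=0.999, eps=1e-8):
    """One Adam-style minimization step for a scalar parameter.

    m is an exponential moving average of gradients (search direction);
    v is an exponential moving average of squared gradients (step scaling).
    """
    state["t"] += 1
    state["m"] = beta1 * state["m"] + (1 - beta1) * grad
    state["v"] = beta2 * state["v"] + (1 - beta2) * grad * grad
    # Bias correction compensates for the zero initialization of m and v.
    m_hat = state["m"] / (1 - beta1 ** state["t"])
    v_hat = state["v"] / (1 - beta2 ** state["t"])
    return param - lr * m_hat / (math.sqrt(v_hat) + eps)


def solve_toy_minmax(x=1.0, y=1.0, steps=2000, lr=0.01):
    """Simultaneous descent on x and ascent on y for the assumed toy objective
    f(x, y) = 0.5*x**2 + x*y - 0.5*y**2, whose saddle point is (0, 0)."""
    state_x, state_y = new_state(), new_state()
    for _ in range(steps):
        grad_x = x + y  # df/dx
        grad_y = x - y  # df/dy
        new_x = adam_step(x, grad_x, state_x, lr)
        # Ascent on y is implemented as descent on -f, hence the sign flip.
        new_y = adam_step(y, -grad_y, state_y, lr)
        x, y = new_x, new_y
    return x, y


if __name__ == "__main__":
    print(solve_toy_minmax())
```

On this well-behaved toy problem the iterates should end close to the saddle point `(0, 0)`. That says nothing about harder objectives: the abstract's point is that such a direct application is not suited to the non-convex min-max problems that arise in GAN training.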
