Knowledge Roundup

Getting Started

Random Forests

1. Bagging and Random Forests (author: 王大宝的CD) http://blog.csdn.net/sinat_22594309/article/details/60465700

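Description: The previous post covered applications of decision trees, but a single decision tree often does not perform very well. How can its performance be improved? That is where the Bagging idea, the topic of this post, comes in.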

2. A Summary of the Principles of Bagging and Random Forests (author: 刘建平Pinard) http://www.cnblogs.com/pinard/p/6156009.html

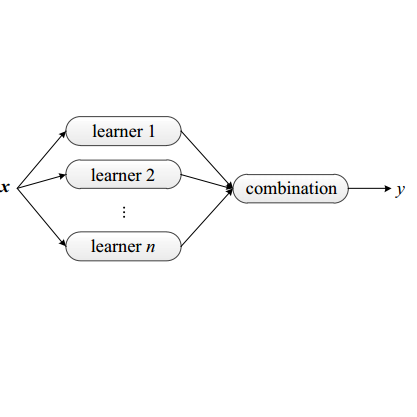

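Description: In "A Summary of Ensemble Learning Principles" we noted that ensemble learning has two schools. One is boosting, in which the weak learners depend on one another. The other is bagging, in which the weak learners are independent of one another and can be fitted in parallel. This article summarizes Bagging and the random forest algorithm within ensemble learning.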

3. Ensemble Learning: Bagging and Random Forests (author: bigbigship) http://blog.csdn.net/bigbigship/article/details/51136985

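Description: Bagging is the best-known example of a parallel ensemble learning method. It is built on bootstrap sampling (sampling with replacement) and is an efficient way to improve a learner's generalization ability.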
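To make "bootstrap sampling (sampling with replacement)" concrete, here is a minimal sketch using only the Python standard library. It is not taken from the linked article; the function name `bootstrap_sample`, the seed, and the toy data are assumptions chosen for illustration. Each bootstrap sample has the same size as the original data, and the items that are never drawn (the "out-of-bag" items) are returned separately.

```python
import random


def bootstrap_sample(data, rng):
    """Draw len(data) items from data uniformly at random, with replacement.

    Returns the sample plus the "out-of-bag" items that were never drawn.
    """
    n = len(data)
    indices = [rng.randrange(n) for _ in range(n)]
    sample = [data[i] for i in indices]
    drawn = set(indices)
    out_of_bag = [data[i] for i in range(n) if i not in drawn]
    return sample, out_of_bag


# Hypothetical toy data and a fixed seed, used only for reproducibility.
rng = random.Random(0)
data = list(range(1000))
sample, out_of_bag = bootstrap_sample(data, rng)

# Fraction of distinct original items that appear in the sample.
# For large n this tends toward 1 - 1/e, roughly 0.632.
unique_fraction = len(set(sample)) / len(data)
print(f"unique fraction: {unique_fraction:.3f}, out-of-bag: {len(out_of_bag)}")
```

In Bagging, one such sample would be drawn per base learner, so each learner sees a slightly different view of the training set.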

4. Bagging (Bootstrap aggregating), Random Forests, and AdaBoost (author: xlinsist) http://blog.csdn.net/xlinsist/article/details/51475345

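Description: In this article I describe in detail how Bagging, random forests, and AdaBoost are implemented, compare their strengths and weaknesses, and use scikit-learn to fit the Wine dataset with each of the three algorithms. The article is built around worked examples and moves from simple to advanced; after reading it, you should have a clear understanding of these ensemble methods.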

5. Bagging (Bootstrap aggregating), Random Forests, and AdaBoost http://www.mamicode.com/info-detail-1363258.html

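Description: In this article I describe in detail how Bagging, random forests, and AdaBoost are implemented, compare their strengths and weaknesses, and use scikit-learn to fit the Wine dataset with each of the three algorithms. The article is built around worked examples and moves from simple to advanced; after reading it, you should have a clear understanding of these ensemble methods.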

6. Classifier Combination Methods: Bootstrap, Boosting, Bagging, Random Forests (Part 1) (author: Maggie张张) http://blog.csdn.net/zjsghww/article/details/51591009

Description: Classifier combination methods go by many names, such as bootstrapping, boosting, AdaBoost, bagging, and random forest. How do they relate to one another?

7. A Summary of the Principles of Bagging and Random Forests (author: 6053145618) http://blog.sina.com.cn/s/blog_168cbac120102xbaz.html

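Description: In "A Summary of Ensemble Learning Principles" we noted that ensemble learning has two schools. One is boosting, in which the weak learners depend on one another. The other is bagging, in which the weak learners are independent of one another and can be fitted in parallel. This article summarizes Bagging and the random forest algorithm within ensemble learning.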

8. Ensemble Learning (Boosting, Bagging, and Random Forests) (author: combatant_yunyun) http://blog.csdn.net/u014665416/article/details/51557318

9. A First Look at Face Recognition with AdaBoost, Bagging, and Random Forest (author: liyuxin6) http://www.dataguru.cn/thread-290341-1-1.html

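Description: Face recognition, that is, having a computer determine whose face appears in an image, is an interesting topic. Getting a computer to recognize faces the way we do is not easy. First you extract facial features from several images of one person (the training set), then you compare those features with a new image (the test set). If the features largely match, it is very likely the same face.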

10. Machine Learning, Week 5 (炼数成金): Decision Trees, Ensemble Boosting Algorithms, Bagging and AdaBoost, Random Forests http://www.mamicode.com/info-detail-1297465.html

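Description: Advantages of the random forest algorithm: accuracy comparable to AdaBoost; greater robustness to errors and outliers; the tendency of individual decision trees to overfit weakens as the forest grows; fast, with good performance on large datasets.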

11. Ensemble Learning (AdaBoost, Bagging, Random Forests): Prediction in Python (author: 江海成) http://blog.csdn.net/qingyang666/article/details/66472981

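Description: Informally, a random forest is a composite classifier built from a set of decision trees, in which each tree splits the sample space using randomly selected feature attributes. At classification time the trees vote, and the class with the most votes is the final result.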
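The description above (trees built on randomly chosen features, then a majority vote) can be sketched in plain Python. This is a toy illustration under stated assumptions, not the linked author's code: each "tree" is a one-split stump rather than a full decision tree, each stump uses a single randomly chosen feature (a real random forest typically picks a random feature subset at every split), and all names such as `train_stump` and `predict_forest` are hypothetical.

```python
import random
from collections import Counter


def majority(labels):
    """Return the most frequent label."""
    return Counter(labels).most_common(1)[0][0]


def train_stump(rows, labels, feature):
    """Fit a one-split stump: threshold at the feature mean, majority label per side."""
    threshold = sum(row[feature] for row in rows) / len(rows)
    left = [y for row, y in zip(rows, labels) if row[feature] <= threshold]
    right = [y for row, y in zip(rows, labels) if row[feature] > threshold]
    fallback = majority(labels)  # used if one side of the split is empty
    return (feature, threshold,
            majority(left) if left else fallback,
            majority(right) if right else fallback)


def predict_stump(stump, row):
    feature, threshold, left_label, right_label = stump
    return left_label if row[feature] <= threshold else right_label


def train_forest(rows, labels, n_trees, rng):
    """Train each stump on a bootstrap sample, using one random feature."""
    n, n_features = len(rows), len(rows[0])
    forest = []
    for _ in range(n_trees):
        idx = [rng.randrange(n) for _ in range(n)]  # sample with replacement
        feature = rng.randrange(n_features)         # random feature choice
        forest.append(train_stump([rows[i] for i in idx],
                                  [labels[i] for i in idx],
                                  feature))
    return forest


def predict_forest(forest, row):
    """Every stump votes; the label with the most votes wins."""
    return majority([predict_stump(stump, row) for stump in forest])


# Hypothetical toy data: two well-separated clusters in two features.
rows = [(i * 0.1, i * 0.1) for i in range(10)] + \
       [(10 + i * 0.1, 10 + i * 0.1) for i in range(10)]
labels = ["a"] * 10 + ["b"] * 10

forest = train_forest(rows, labels, n_trees=25, rng=random.Random(0))
print(predict_forest(forest, (0.2, 0.3)), predict_forest(forest, (10.5, 10.1)))
```

An odd number of stumps is used so that a two-class vote cannot end in a tie.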