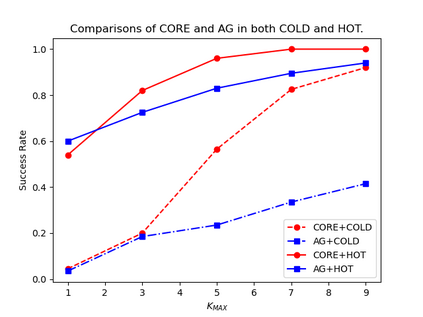

Recommender systems trained on offline historical user behaviors are increasingly adopting conversational techniques to query user preferences online. Unlike prior conversational recommendation approaches that systematically combine the conversational and recommender parts through a reinforcement learning framework, we propose CORE, a new offline-training and online-checking paradigm that bridges a COnversational agent and REcommender systems via a unified uncertainty minimization framework. It can benefit any recommendation platform in a plug-and-play style. Here, CORE treats a recommender system as an offline relevance score estimator that produces an estimated relevance score for each item, while a conversational agent is regarded as an online relevance score checker that checks these estimated scores in each session. We define uncertainty as the sum of the unchecked relevance scores. In this regard, the conversational agent acts to minimize uncertainty by querying either attributes or items. Based on the uncertainty minimization framework, we derive the expected certainty gain of querying each attribute and item, and develop a novel online decision tree algorithm to decide what to query at each turn. Experimental results on 8 industrial datasets show that CORE can be seamlessly applied to 9 popular recommendation approaches. We further demonstrate that our conversational agent can communicate as a human would when empowered by a pre-trained large language model.
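
To make the uncertainty minimization idea concrete, the following is a minimal sketch in Python, not the authors' implementation. The abstract only states that uncertainty is the sum of unchecked relevance scores and that an expected certainty gain is derived for each query; the specific gain formulas below, the normalization of scores into answer probabilities, and all names (`uncertainty`, `item_gain`, `attribute_gain`, `choose_query`, the toy data) are assumptions made for illustration.

```python
from collections import defaultdict


def uncertainty(scores, unchecked):
    """Uncertainty = sum of the relevance scores that are still unchecked."""
    return sum(scores[i] for i in sorted(unchecked))


def item_gain(item, scores, unchecked):
    """Expected certainty gain of asking about one item (assumed formula).

    Assumption: the user likes the item with probability proportional to
    its score. A "yes" resolves the whole session; a "no" only checks
    this single item.
    """
    total = uncertainty(scores, unchecked)
    if total == 0:
        return 0.0
    p_like = scores[item] / total
    return p_like * total + (1.0 - p_like) * scores[item]


def attribute_gain(attr, scores, unchecked, item_attrs):
    """Expected certainty gain of asking about one attribute (assumed formula).

    Assumption: each answer value is weighted by the score mass of the
    items carrying it; answering with a value checks every item that
    carries a different value.
    """
    total = uncertainty(scores, unchecked)
    if total == 0:
        return 0.0
    mass = defaultdict(float)
    for i in unchecked:
        mass[item_attrs[i][attr]] += scores[i]
    return sum((m / total) * (total - m) for m in mass.values())


def choose_query(scores, unchecked, item_attrs, attrs):
    """Greedy one-step choice: pick the query with the largest expected gain."""
    candidates = [(("item", i), item_gain(i, scores, unchecked))
                  for i in sorted(unchecked)]
    candidates += [(("attribute", a),
                    attribute_gain(a, scores, unchecked, item_attrs))
                   for a in attrs]
    return max(candidates, key=lambda c: c[1])


# Hypothetical toy data: scores as an offline recommender might estimate them.
toy_scores = {"a": 0.5, "b": 0.3, "c": 0.2}
toy_attrs = {"a": {"color": "red"},
             "b": {"color": "blue"},
             "c": {"color": "blue"}}
toy_unchecked = set(toy_scores)

best_query, best_gain = choose_query(toy_scores, toy_unchecked,
                                     toy_attrs, ["color"])
print(best_query, round(best_gain, 3))
```

On this toy data the sketch prefers querying item `a` directly, because one item holds half of the score mass. When the scores are spread over many items, an attribute query tends to check more score mass per turn, which is the trade-off a per-turn decision rule has to weigh.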
