【专知-PyTorch手把手深度学习教程08】NLP-PyTorch: 用字符级RNN生成名字

点击上方“专知”关注获取更多AI知识!

【导读】主题链路知识是我们专知的核心功能之一,为用户提供AI领域系统性的知识学习服务,一站式学习人工智能的知识,包含人工智能( 机器学习、自然语言处理、计算机视觉等)、大数据、编程语言、系统架构。使用请访问专知 进行主题搜索查看 - 桌面电脑访问www.zhuanzhi.ai, 手机端访问www.zhuanzhi.ai 或关注微信公众号后台回复" 专知"进入专知,搜索主题查看。值国庆佳节,专知特别推出独家特刊-来自中科院自动化所专知小组博士生huaiwen和Mandy创作的-PyTorch教程学习系列, 今日带来最后一篇-< NLP系列(三) 基于字符级RNN的姓名生成 >

< NLP系列(三) 基于字符级RNN的姓名生成 >

Practical PyTorch: 用字符级RNN生成名字

本文翻译自spro/practical-pytorch

原文:https://github.com/spro/practical-pytorch/blob/master/conditional-char-rnn/conditional-char-rnn.ipynb翻译: Mandy

辅助: huaiwen

在上次的教程中,我们使用一个RNN来将名字分类成他们的原生语言。这一节我们将根据语言生成名字。

比如:

> python generate.py Russian

Rovakov

Uantov

Shavakov

> python generate.py German

Gerren

Ereng

Rosher

> python generate.py Spanish

Salla

Parer

Allan

> python generate.py Chinese

Chan

Hang

Iun推荐阅读

假设你至少安装了PyTorch,知道Python,并了解Tensors:

http://pytorch.org/ ( 有关安装说明的网址)

Deep Learning with PyTorch: A 60-minute Blitz (大致了解什么是PyTorch)

jcjohnson's PyTorch examples ( 深入了解PyTorch )

Introduction to PyTorch for former Torchies ( 如果你之前用过 Lua Torch )

知道并了解RNNs 以及它们是如何工作的是很有用的:

The Unreasonable Effectiveness of Recurrent Neural Networks ( 展示了一堆现实生活中的例子)

Understanding LSTM Networks ( 是关于LSTM具体的,但也是关于RNN的一般介绍)

我同样建议之前的一些教程:

Classifying Names with a Character-Level RNN (使用RNN将文本分类)

Generating Shakespeare with a Character-Level RNN ( 使用RNN一次生成一个字符)

准备数据

数据详细信息, 参见上一篇使用字符级RNN分类姓名 - 这次我们使用完全相同的数据集。简而言之,有一堆纯文本文件data / names / [Language] .txt,每行一个名称。我们将线分割成一个数组,将Unicode转换为ASCII,最后使用字典{language:[names ...]}。

import glob

import unicodedata

import string

all_letters = string.ascii_letters + " .,;'-"

n_letters = len(all_letters) + 1 # Plus EOS marker

EOS = n_letters - 1

# Turn a Unicode string to plain ASCII, thanks to http://stackoverflow.com/a/518232/2809427

def unicode_to_ascii(s):

return ''.join(

c for c in unicodedata.normalize('NFD', s)

if unicodedata.category(c) != 'Mn'

and c in all_letters

)

print(unicode_to_ascii("O'Néàl"))O'Neal

# Read a file and split into lines

def read_lines(filename):

lines = open(filename).read().strip().split('\n')

return [unicode_to_ascii(line) for line in lines]

# Build the category_lines dictionary, a list of lines per category

category_lines = {}

all_categories = []

for filename in glob.glob('../data/names/*.txt'):

category = filename.split('/')[-1].split('.')[0]

all_categories.append(category)

lines = read_lines(filename)

category_lines[category] = lines

n_categories = len(all_categories)

print('# categories:', n_categories, all_categories)

# 输出

# categories: 18 ['Arabic', 'Chinese', 'Czech', 'Dutch', 'English', 'French', 'German', 'Greek', 'Irish', 'Italian', 'Japanese', 'Korean', 'Polish', 'Portuguese', 'Russian', 'Scottish', 'Spanish', 'Vietnamese']创建网络

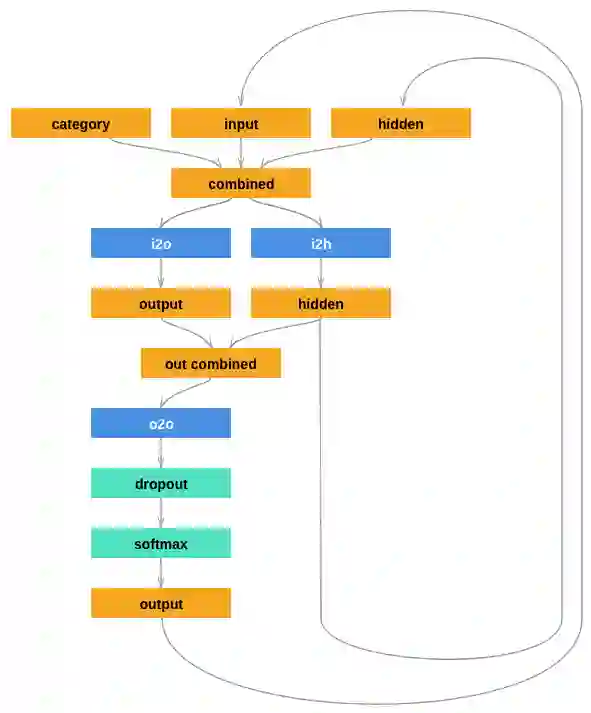

我们将把输出解释为生成某一个字母的概率。当采样时,最可能的输出字母用作下一个输入字母。

这里添加了一个线性层o2o(out combined后面)。和一个dropout层,dropout rate:0.1。

import torch

import torch.nn as nn

from torch.autograd import Variable

class RNN(nn.Module):

def __init__(self, input_size, hidden_size, output_size):

super(RNN, self).__init__()

self.input_size = input_size

self.hidden_size = hidden_size

self.output_size = output_size

self.i2h = nn.Linear(n_categories + input_size + hidden_size, hidden_size)

self.i2o = nn.Linear(n_categories + input_size + hidden_size, output_size)

self.o2o = nn.Linear(hidden_size + output_size, output_size)

self.softmax = nn.LogSoftmax()

def forward(self, category, input, hidden):

input_combined = torch.cat((category, input, hidden), 1)

hidden = self.i2h(input_combined)

output = self.i2o(input_combined)

output_combined = torch.cat((hidden, output), 1)

output = self.o2o(output_combined)

return output, hidden

def init_hidden(self):

return Variable(torch.zeros(1, self.hidden_size))准备训练

首先,我们要得到随机对(category,line):

import random

# Get a random category and random line from that category

def random_training_pair():

category = random.choice(all_categories)

line = random.choice(category_lines[category])

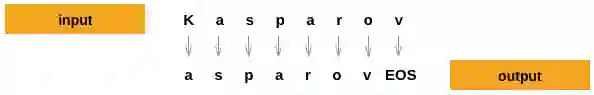

return category, line对于每个时间步(即训练字中的每个字母),网络的输入将为(category, current_letter, hidden_state),输出将为(next_letter, next_hidden_state)。

我们要准备一下训练数据, 训练数据应该是:(category,input,target)

我们在每个时间步上, 根据当前字母, 去预测下一个字母,所以, 对于line, 我们需要切分一下。例如: line=“ABCD <EOS>”,我们将切成(“A”,“B”),(“B”,“C”),(“C”,“D”),(“D”,“EOS”)。

类别是大小为<1 x n_categories>的one-hot向量。训练时,我们每个时间点都会喂给网络, 当然, 这只是一种策略, 你也可以在初始化做了.

# One-hot vector for category

def make_category_input(category):

li = all_categories.index(category)

tensor = torch.zeros(1, n_categories)

tensor[0][li] = 1

return Variable(tensor)

# One-hot matrix of first to last letters (not including EOS) for input

def make_chars_input(chars):

tensor = torch.zeros(len(chars), n_letters)

for ci in range(len(chars)):

char = chars[ci]

tensor[ci][all_letters.find(char)] = 1

tensor = tensor.view(-1, 1, n_letters)

return Variable(tensor)

# LongTensor of second letter to end (EOS) for target

def make_target(line):

letter_indexes = [all_letters.find(line[li]) for li in range(1, len(line))]

letter_indexes.append(n_letters - 1) # EOS

tensor = torch.LongTensor(letter_indexes)

return Variable(tensor)为了方便训练,我们定义一个random_training_set函数,该函数将获取一个随机(category,line)对,并将其转换为所需的(category,input,target)张量。

# Make category, input, and target tensors from a random category, line pair

def random_training_set():

category, line = random_training_pair()

category_input = make_category_input(category)

line_input = make_chars_input(line)

line_target = make_target(line)

return category_input, line_input, line_target训练网络

跟用RNN进行姓名分类不同, 在这里, RNN的每一步output我们都要计算loss, 然后乐累加往回传

def train(category_tensor, input_line_tensor, target_line_tensor):

hidden = rnn.init_hidden()

optimizer.zero_grad()

loss = 0

for i in range(input_line_tensor.size()[0]):

output, hidden = rnn(category_tensor, input_line_tensor[i], hidden)

loss += criterion(output, target_line_tensor[i])

loss.backward()

optimizer.step()

return output, loss.data[0] / input_line_tensor.size()[0]为了看看每一步需要多长, 可以加一个time_since(t)函数:

import time

import math

def time_since(t):

now = time.time()

s = now - t

m = math.floor(s / 60)

s -= m * 60

return '%dm %ds' % (m, s)同时, 我们可以定义一下量, 把他们输出出来

n_epochs = 100000

print_every = 5000

plot_every = 500

all_losses = []

loss_avg = 0 # Zero every plot_every epochs to keep a running average

learning_rate = 0.0005

rnn = RNN(n_letters, 128, n_letters)

optimizer = torch.optim.Adam(rnn.parameters(), lr=learning_rate)

criterion = nn.CrossEntropyLoss()

start = time.time()

for epoch in range(1, n_epochs + 1):

output, loss = train(*random_training_set())

loss_avg += loss

if epoch % print_every == 0:

print('%s (%d %d%%) %.4f' % (time_since(start), epoch, epoch / n_epochs * 100, loss))

if epoch % plot_every == 0:

all_losses.append(loss_avg / plot_every)

loss_avg = 00m 28s (5000 5%) 1.8674

0m 53s (10000 10%) 2.4155

1m 20s (15000 15%) 3.4203

1m 45s (20000 20%) 1.3962

2m 12s (25000 25%) 1.7427

2m 38s (30000 30%) 2.9514

3m 4s (35000 35%) 2.8836

3m 31s (40000 40%) 1.6728

3m 57s (45000 45%) 2.5014

4m 22s (50000 50%) 1.9687

4m 48s (55000 55%) 1.5595

5m 16s (60000 60%) 2.3830

5m 43s (65000 65%) 1.5155

6m 10s (70000 70%) 1.7967

6m 37s (75000 75%) 1.8564

7m 3s (80000 80%) 1.9873

7m 30s (85000 85%) 1.9569

7m 56s (90000 90%) 1.7553

8m 22s (95000 95%) 2.3103

8m 48s (100000 100%) 1.7575

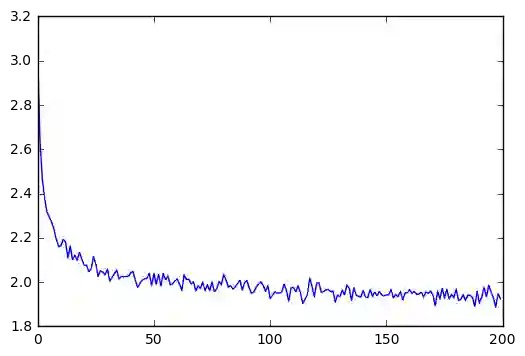

绘制

从 all_losses变量绘制的历史数据图展示网络学习:

import matplotlib.pyplot as plt

import matplotlib.ticker as ticker

%matplotlib inline

plt.figure()

plt.plot(all_losses)测试

测试方法比较简单: 我们给网络一个字母, 等他输出下一个字母, 然后把这个字母作为输入, 喂进去, 直到<EOS>

流程如下:

给定category, 和current_letter, 初始化一个空的hidden_state,(category, current_letter, hidden_state), 喂给网络

创建一个输出output_str, output_str的第一个字母是 起始字母

取决于最大输出长度

将当前的字母喂给网络

从获取下一个字母,和下一个隐藏状态

如果字母是 EOS, 结束

如果是普通字母, 就添加到 output_str 并继续

返回最终的名字

max_length = 20

# Generate given a category and starting letter

def generate_one(category, start_char='A', temperature=0.5):

category_input = make_category_input(category)

chars_input = make_chars_input(start_char)

hidden = rnn.init_hidden()

output_str = start_char

for i in range(max_length):

output, hidden = rnn(category_input, chars_input[0], hidden)

# Sample as a multinomial distribution

output_dist = output.data.view(-1).div(temperature).exp()

top_i = torch.multinomial(output_dist, 1)[0]

# Stop at EOS, or add to output_str

if top_i == EOS:

break

else:

char = all_letters[top_i]

output_str += char

chars_input = make_chars_input(char)

return output_str

# Get multiple samples from one category and multiple starting letters

def generate(category, start_chars='ABC'):

for start_char in start_chars:

print(generate_one(category, start_char)) 比如:

generate('Russian', 'RUS')

'''

output:

Riberkov

Urtherdez

Shimanev

'''generate('German', 'GER')

'''

output:

Gomen

Ester

Ront

'''generate('Spanish', 'SPA')

'''

output:

Sandar

Per

Alvareza

'''generate('Chinese', 'CHI')

'''

output:

Cha

Hang

Ini

'''Practical PyTorch repo的代码分成以下部分:

data.py (loads files)

model.py (defines the RNN)

train.py (runs training)

generate.py (runs generate() with command line arguments)

运行train.py来训练并保存网络。

然后用generate.py来查看生成的名字:

$ python generate.py Russian

Alaskinimhovev

Beranivikh

Chamon专知PyTorch深度学习教程系列, 到此结束,感谢各位专知友的支持,欢迎转发分享和进入群里讨论~

完整系列搜索查看,请PC登录

www.zhuanzhi.ai, 搜索“PyTorch”即可得。

对PyTorch教程感兴趣的同学,欢迎进入我们的专知PyTorch主题群一起交流、学习、讨论,扫一扫如下群二维码即可进入:

群满,请扫描小助手,加入进群~

了解使用专知-获取更多AI知识!

-END-

欢迎使用专知

专知,一个新的认知方式!目前聚焦在人工智能领域为AI从业者提供专业可信的知识分发服务, 包括主题定制、主题链路、搜索发现等服务,帮你又好又快找到所需知识。

使用方法>>访问www.zhuanzhi.ai, 或点击文章下方“阅读原文”即可访问专知

中国科学院自动化研究所专知团队

@2017 专知

专 · 知

关注我们的公众号,获取最新关于专知以及人工智能的资讯、技术、算法、深度干货等内容。扫一扫下方关注我们的微信公众号。

点击“ 阅读原文 ”,使用 专知!