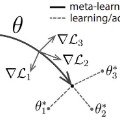

Currently available benchmarks for few-shot learning (machine learning with few training examples) are limited in the domains they cover, focusing primarily on image classification. This work aims to reduce this reliance on image-based benchmarks by offering the first comprehensive, public, and fully reproducible audio-based alternative, covering a variety of sound domains and experimental settings. We compare the few-shot classification performance of a variety of techniques on seven audio datasets, spanning environmental sounds to human speech. Extending this, we carry out in-depth analyses of joint training (where all datasets are used during training) and cross-dataset adaptation protocols, establishing the possibility of a generalised audio few-shot classification algorithm. Our experiments show that gradient-based meta-learning methods such as MAML and Meta-Curvature consistently outperform both metric-based and baseline methods. We also demonstrate that joint training improves overall generalisation for the environmental sound datasets included, and that it is a somewhat effective way of tackling the cross-dataset/domain setting.
