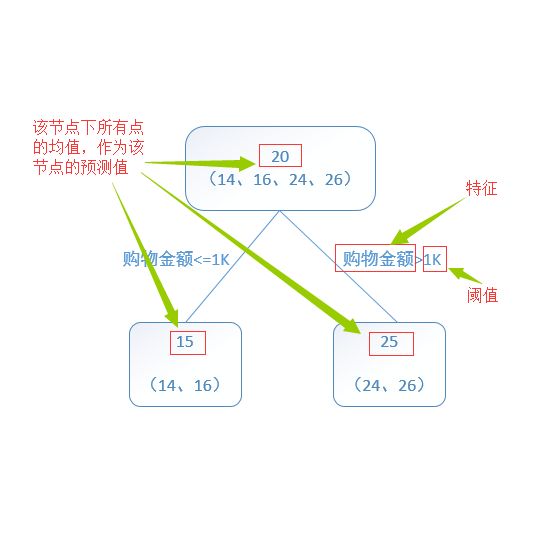

Despite the success of deep learning in computer vision and natural language processing, Gradient Boosted Decision Trees (GBDT) remain one of the most powerful tools for applications with tabular data, such as e-commerce and FinTech. However, applying GBDT to multi-task learning is still challenging. Unlike deep models, which can jointly learn a shared latent representation across multiple tasks, GBDT can hardly learn a shared tree structure. In this paper, we propose the Multi-task Gradient Boosting Machine (MT-GBM), a GBDT-based method for multi-task learning. MT-GBM finds shared tree structures and splits branches according to multi-task losses. First, it assigns multiple outputs to each leaf node. Next, it computes the gradient corresponding to each output (task). Then, it combines the gradients of all tasks using an algorithm we propose and updates the tree. Finally, we apply MT-GBM to LightGBM. Experiments show that MT-GBM significantly improves performance on the main task, indicating that the proposed method is both efficient and effective.
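The steps above (multiple outputs per leaf, one gradient per task, a combined gradient that drives the shared split) can be illustrated with a small sketch. This is not the MT-GBM algorithm or LightGBM's API: the abstract does not say how the task gradients are combined, so the sketch assumes a fixed weighted sum, uses squared loss for every task, and evaluates splits with the usual second-order gain with an L2 penalty `lam` (constant factor and minimum-gain term omitted). All function names are hypothetical.

```python
from typing import List, Optional, Sequence, Tuple

Matrix = List[List[float]]  # rows = samples, columns = tasks


def per_task_grad_hess(y: Matrix, pred: Matrix) -> Tuple[Matrix, Matrix]:
    """Assumed loss: 0.5 * (pred - y)^2 per task, so g = pred - y and h = 1."""
    g = [[p - t for p, t in zip(prow, yrow)] for prow, yrow in zip(pred, y)]
    h = [[1.0] * len(yrow) for yrow in y]
    return g, h


def combine(g: Matrix, h: Matrix, weights: Sequence[float]) -> Tuple[List[float], List[float]]:
    """Hypothetical combination rule: a fixed weighted sum over tasks."""
    g_c = [sum(w * gi for w, gi in zip(weights, row)) for row in g]
    h_c = [sum(w * hi for w, hi in zip(weights, row)) for row in h]
    return g_c, h_c


def gain(g_l: float, h_l: float, g_r: float, h_r: float, lam: float) -> float:
    """Second-order split gain computed from the combined gradients."""
    return (g_l ** 2 / (h_l + lam) + g_r ** 2 / (h_r + lam)
            - (g_l + g_r) ** 2 / (h_l + h_r + lam))


def best_split(x: Sequence[float], g_c: List[float], h_c: List[float],
               lam: float = 1.0) -> Tuple[Optional[float], float]:
    """Scan one feature; the chosen split is shared by all tasks."""
    order = sorted(range(len(x)), key=lambda i: x[i])
    g_tot, h_tot = sum(g_c), sum(h_c)
    g_l = h_l = 0.0
    best: Tuple[Optional[float], float] = (None, 0.0)  # (threshold, gain)
    for k in range(len(order) - 1):
        i, j = order[k], order[k + 1]
        g_l += g_c[i]
        h_l += h_c[i]
        if x[i] == x[j]:
            continue  # equal feature values cannot be separated
        s = gain(g_l, h_l, g_tot - g_l, h_tot - h_l, lam)
        if s > best[1]:
            best = ((x[i] + x[j]) / 2.0, s)
    return best


def leaf_values(rows: Sequence[int], g: Matrix, h: Matrix, lam: float = 1.0) -> List[float]:
    """One Newton-step output per task for a leaf: -sum(g_t) / (sum(h_t) + lam)."""
    n_tasks = len(g[rows[0]])
    return [-sum(g[i][t] for i in rows) / (sum(h[i][t] for i in rows) + lam)
            for t in range(n_tasks)]


if __name__ == "__main__":
    x = [1.0, 2.0, 3.0, 4.0]
    y = [[0.0, 1.0], [0.0, 1.0], [10.0, 1.0], [10.0, 1.0]]  # two tasks
    pred = [[0.0, 0.0] for _ in x]
    g, h = per_task_grad_hess(y, pred)
    g_c, h_c = combine(g, h, weights=[1.0, 0.5])  # task 0 treated as main task
    thr, s = best_split(x, g_c, h_c, lam=0.0)
    print("threshold:", thr, "gain:", s)  # threshold: 2.5
    print("left leaf:", leaf_values([0, 1], g, h, lam=0.0))
    print("right leaf:", leaf_values([2, 3], g, h, lam=0.0))
```

The design choice in this sketch is that only the split search sees the combined gradient, so all tasks share one tree structure, while each leaf still stores a separate output per task computed from that task's own gradients.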
