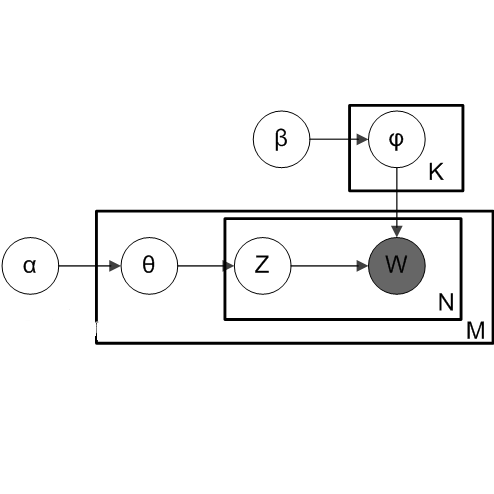

Background: Unstructured and textual data are increasing rapidly, and Latent Dirichlet Allocation (LDA) topic modeling is a popular method for analyzing them. Past work suggests that the instability of LDA topics may lead to systematic errors. Aim: We propose a method that relies on replicated LDA runs, clustering, and a stability metric for the topics. Method: We generate k LDA topics and replicate this process n times, resulting in n*k topics. We then use K-medoids to cluster the n*k topics into k clusters. The k clusters now represent the original LDA topics, and we present them like normal LDA topics by showing their ten most probable words. For the clusters, we try multiple stability metrics, of which we recommend Rank-Biased Overlap, to show the stability of the topics inside each cluster. Results: We provide an initial validation in which our method is applied to 270,000 Mozilla Firefox commit messages with k=20 and n=20. We show how our topic stability metrics are related to the contents of the topics. Conclusions: Advances in text mining enable us to analyze large masses of text in software engineering, but non-deterministic algorithms, such as LDA, may lead to unreplicable conclusions. Our approach makes LDA stability transparent and is complementary, rather than an alternative, to many prior works that focus on LDA parameter tuning.
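The clustering and stability steps described in the Method can be illustrated with a minimal Python sketch. It is not the authors' implementation and rests on several assumptions: each topic is already available as a ranked list of its most probable words (the LDA runs themselves are not shown), topic distance is taken as 1 minus the extrapolated Rank-Biased Overlap with a hypothetical persistence parameter `p=0.9`, K-medoids is a simple alternating variant with a deterministic farthest-first initialization, and a cluster's stability is the mean pairwise RBO of its members. The function names and the toy word lists are invented for illustration.

```python
from itertools import combinations


def rbo(s, t, p=0.9):
    """Extrapolated rank-biased overlap of two duplicate-free ranked lists."""
    k = min(len(s), len(t))
    if k == 0:
        return 0.0
    weighted = 0.0
    for d in range(1, k + 1):
        overlap = len(set(s[:d]) & set(t[:d]))  # shared items in the top d
        weighted += (overlap / d) * p ** d
    # After the loop, `overlap` holds the overlap at full depth k.
    return (overlap / k) * p ** k + ((1 - p) / p) * weighted


def k_medoids(dist, k, max_iter=100):
    """Alternating k-medoids on a precomputed distance matrix (assumed variant)."""
    n = len(dist)
    medoids = [0]  # farthest-first initialization, chosen here for determinism
    while len(medoids) < k:
        medoids.append(
            max(range(n), key=lambda i: min(dist[i][m] for m in medoids))
        )
    clusters = []
    for _ in range(max_iter):
        # Assignment step: each topic joins its nearest medoid.
        clusters = [[] for _ in medoids]
        for i in range(n):
            nearest = min(range(k), key=lambda c: dist[i][medoids[c]])
            clusters[nearest].append(i)
        # Update step: the new medoid minimizes total distance within its cluster.
        new_medoids = []
        for old, members in zip(medoids, clusters):
            if members:
                best = min(members, key=lambda c: sum(dist[c][j] for j in members))
                new_medoids.append(best)
            else:
                new_medoids.append(old)  # keep the old medoid for an empty cluster
        if new_medoids == medoids:
            break
        medoids = new_medoids
    return medoids, clusters


def cluster_stability(topics, members, p=0.9):
    """Mean pairwise RBO among the topics of one cluster."""
    pairs = list(combinations(members, 2))
    if not pairs:
        return 1.0
    return sum(rbo(topics[i], topics[j], p) for i, j in pairs) / len(pairs)


def stable_topics(topics, k, p=0.9):
    """Cluster n*k topics into k clusters; return (medoid words, stability, size)."""
    dist = [[1.0 - rbo(a, b, p) for b in topics] for a in topics]
    medoids, clusters = k_medoids(dist, k)
    return [
        (topics[m], cluster_stability(topics, members, p), len(members))
        for m, members in zip(medoids, clusters)
    ]


# Toy input: n=3 hypothetical runs with k=2 topics each (made-up words).
# The second run lists its topics in the opposite order, as LDA runs may.
runs = [
    [["crash", "fix", "null", "pointer"], ["test", "add", "unit", "mochitest"]],
    [["add", "test", "mochitest", "unit"], ["crash", "fix", "null", "pointer"]],
    [["crash", "fix", "null", "pointer"], ["test", "unit", "add", "reftest"]],
]
topics = [topic for run in runs for topic in run]
results = stable_topics(topics, k=2)
for words, stability, size in results:
    print(words, round(stability, 3), size)
```

In this toy input the "crash" topic is identical in every run, so its cluster scores the maximum stability, while the "test" topic changes its word order and vocabulary between runs and scores lower. With real data, `topics` would hold the n*k ranked word lists from the replicated LDA runs.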
