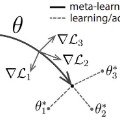

In recent years, model-agnostic meta-learning (MAML) has been one of the most promising approaches in meta-learning. It can be applied to different kinds of problems, e.g., reinforcement learning, and it also shows good results on few-shot learning tasks. Despite its tremendous success on these tasks, it has still not been fully revealed why it works so well. Recent work suggests that MAML relies on feature reuse rather than on rapid learning. In this paper, we want to inspire a deeper understanding of this question by analyzing MAML's representation. We apply representation similarity analysis (RSA), a well-established method in neuroscience, to the few-shot learning instantiation of MAML. Although part of our analysis supports that work's general finding that feature reuse is predominant, we also reveal arguments against its conclusion. The increase in similarity of layers closer to the input layer arises from the learning task itself and not from the model. In addition, the inner gradient steps change the representation more broadly than the updates during meta-training do.
